Learning how to monitor AI search visibility starts with choosing the right prompts based on buyer questions — not keyword lists. Track brand mentions, citations, and share of voice across AI search engines and countries. Then turn those findings into execution: content to create, sources to earn, technical fixes to ship. Most teams treat this like a dashboard project, but monitoring should produce decisions and action. Omnia helps you move from AI signals to execution, giving you more than just charts.

If your “monitoring” process is screenshots and vibes, you’re not monitoring. You’re hoping.

AI visibility isn’t a position you can track. It’s a set of inclusion decisions made by models pulling from whatever sources they trust in that moment. That’s why it feels like measuring smoke.

So treat it like an engineering loop. Define prompts from buyer questions, rerun them enough times to get signal, extract mentions and citations, then turn the results into an execution backlog. This guide explains how to do exactly that, with a bonus 15-day monitoring plan so you can start seeing results before you’ve burned a quarter trying to game “AI SEO” with nothing to show for it.

What AI search visibility means in 2026

AI search visibility is how often your brand appears in AI-generated answers and how credibly AI platforms position you. Unlike traditional search, where you optimize for rankings, AI engines like Google AI Overviews, ChatGPT, Perplexity, and Meta AI generate direct responses that mention only a few brands.

Visibility in AI search encompasses four dimensions:

- Presence in AI answers

- Share of voice

- Citation presence

- Geographical variance

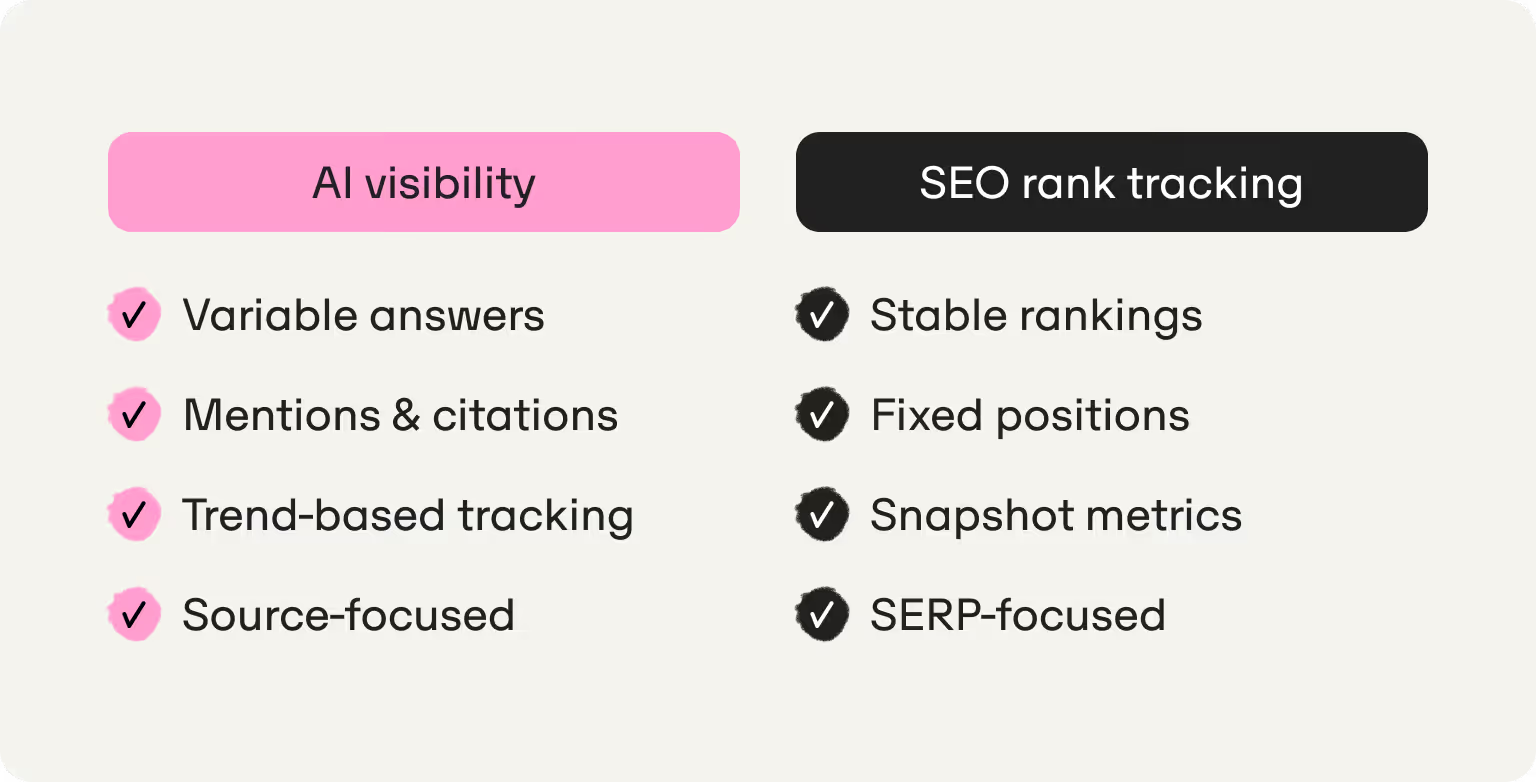

AI visibility vs SEO rank tracking

Traditional search engines return largely consistent results. A Google search for “best CRM for startups” returns much the same rankings today and tomorrow. AI search platforms give variable answers with different brand mentions, context, and citations, even if you ask the same question just minutes apart.

This variance is inherent to how AI systems work. Major AI platforms synthesize information from multiple sources using generative models, meaning your brand performance varies across AI search engines and queries.

Search “best CRM for startups” on ChatGPT and Salesforce is the top-ranked pick.

Search the same phrase in a different chat, moments later, and now Salesforce isn’t even in the top two.

The two responses pull from different sources to reach their conclusions. That is why monitoring depends on repetition, baseline measurement, and trend interpretation rather than one-time snapshots. A single query tells you very little about your actual AI search presence.
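
How many reruns count as “enough” is a judgment call, but a rough standard error makes the point. The sketch below is only an illustration: it assumes each rerun is an independent yes/no observation of whether your brand appeared, and the counts are made up.

```python
import math

def mention_rate_with_error(mentions: int, reruns: int) -> tuple[float, float]:
    """Return the observed mention rate and its approximate standard error."""
    rate = mentions / reruns
    std_error = math.sqrt(rate * (1 - rate) / reruns)
    return rate, std_error

# Hypothetical counts: the brand appeared in 6 of 10 reruns, then 60 of 100.
for mentions, reruns in [(6, 10), (60, 100)]:
    rate, err = mention_rate_with_error(mentions, reruns)
    print(f"{reruns} reruns: rate={rate:.2f}, standard error={err:.3f}")
```

With 10 reruns the uncertainty is roughly 15 percentage points; with 100 it drops to about 5. A single run sits at the noisy end of that range.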

Datos and SparkToro found that the domains that dominate AI citations don’t match the top search rankings. Platforms like Canva, Anthropic, and GitHub appear often in AI answers despite not ranking among traditional “top search destinations,” which illuminates the difference in intent between AI searchers and traditional search users.

An automated AI visibility tool is one of the best ways to monitor AI brand visibility at scale. These platforms run prompts consistently, extract mentions and citations, and show trends over weeks.

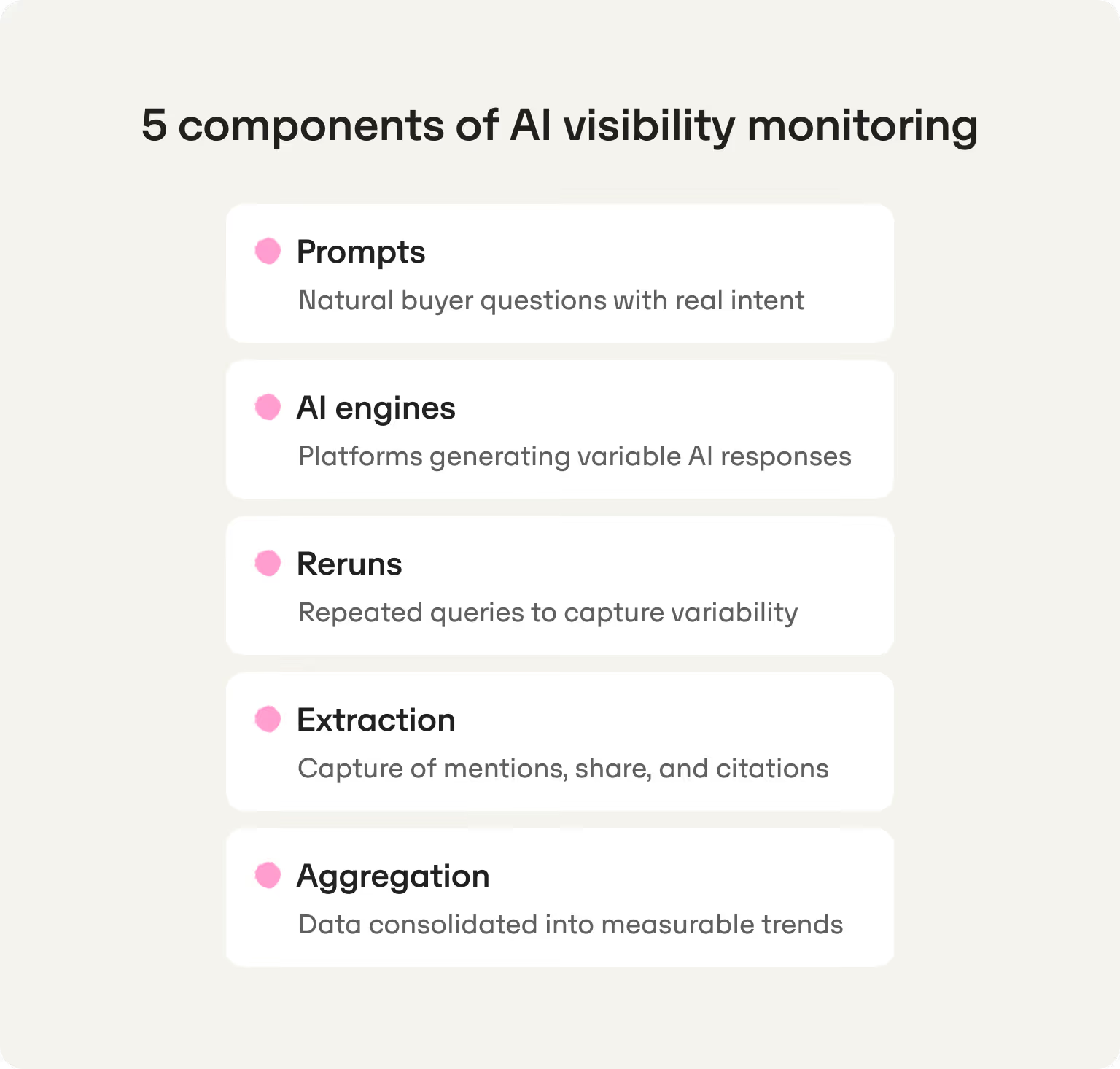

How AI visibility monitoring works

AI visibility monitoring operates through five interconnected components that turn buyer questions into intelligence you can act on:

- Prompts are the natural language questions buyers ask AI platforms.

- AI engines are the platforms you track.

- Reruns mean asking prompts multiple times.

- Extraction identifies brand mentions, share of voice, and citations from AI responses.

- Aggregation turns raw AI visibility data into insights.
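
The five components above can be sketched as a small loop. This is a toy illustration, not how any particular tool works: `fake_engine` is a hypothetical stand-in for a real AI platform, the brand names are placeholders, and extraction is a naive substring match.

```python
import random
from collections import Counter

BRANDS = ["Acme CRM", "Beta CRM", "Gamma CRM"]  # hypothetical brand names

def fake_engine(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for an AI engine: returns a variable answer."""
    picked = rng.sample(BRANDS, k=2)
    return f"For '{prompt}', consider {picked[0]} and {picked[1]}."

def extract_mentions(answer: str) -> list[str]:
    """Extraction: naive substring match against the known brand list."""
    return [b for b in BRANDS if b.lower() in answer.lower()]

def monitor(prompts: list[str], reruns: int, seed: int = 0) -> Counter:
    """Reruns plus aggregation: count how many answers mention each brand."""
    rng = random.Random(seed)
    counts: Counter = Counter()
    for prompt in prompts:
        for _ in range(reruns):
            counts.update(extract_mentions(fake_engine(prompt, rng)))
    return counts

REPEATS = 10
counts = monitor(["best CRM for startups"], reruns=REPEATS)
for brand in BRANDS:
    print(f"{brand}: mentioned in {counts[brand]}/{REPEATS} answers")
```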

Prompt monitoring — why prompts are not keywords

Prompts are complete buyer questions, not keyword fragments. AI search engines respond to questions with context. Instead of optimizing for the keyword string “best CRM for startups,” target something like “what's the best CRM for a five-person sales team with a $500/month budget?”

Your monitoring set should come from real customer language found in sales calls, demo transcripts, support tickets, and community threads. Real customer questions reveal intent and criteria buyers use to evaluate solutions. Generic keyword tool exports miss this context entirely.

Mentions and share of voice — how tools calculate it

A mention is any brand reference in AI-generated responses. If ChatGPT lists five CRM tools and yours appears, you get one mention. If it doesn't mention you, that’s zero.

Share of voice measures visibility relative to competitors. If four brands appear and you're one of them, you hold 25% share of voice for that prompt. Marketing teams track both metrics: mentions show inclusion frequency, while share of voice shows dominance when you do appear.
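
As a minimal sketch of that arithmetic, assuming you already have the list of brands extracted from each answer (the brand names here are placeholders, and real tools may weight position or prominence differently):

```python
def share_of_voice(brands_in_answer: list[str], brand: str) -> float:
    """Per-answer share of voice: 1/N if the brand is among N brands, else 0."""
    unique = set(brands_in_answer)
    if brand not in unique:
        return 0.0
    return 1 / len(unique)

def aggregate_share_of_voice(answers: list[list[str]], brand: str) -> float:
    """Brand's share of all brand mentions across several answers."""
    total = sum(len(set(a)) for a in answers)
    ours = sum(1 for a in answers if brand in a)
    return ours / total if total else 0.0

one_answer = ["Salesforce", "HubSpot", "Pipedrive", "YourBrand"]
print(share_of_voice(one_answer, "YourBrand"))  # 0.25

reruns = [["Salesforce", "YourBrand"], ["Salesforce", "HubSpot", "Pipedrive"]]
print(aggregate_share_of_voice(reruns, "YourBrand"))  # 0.2
```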

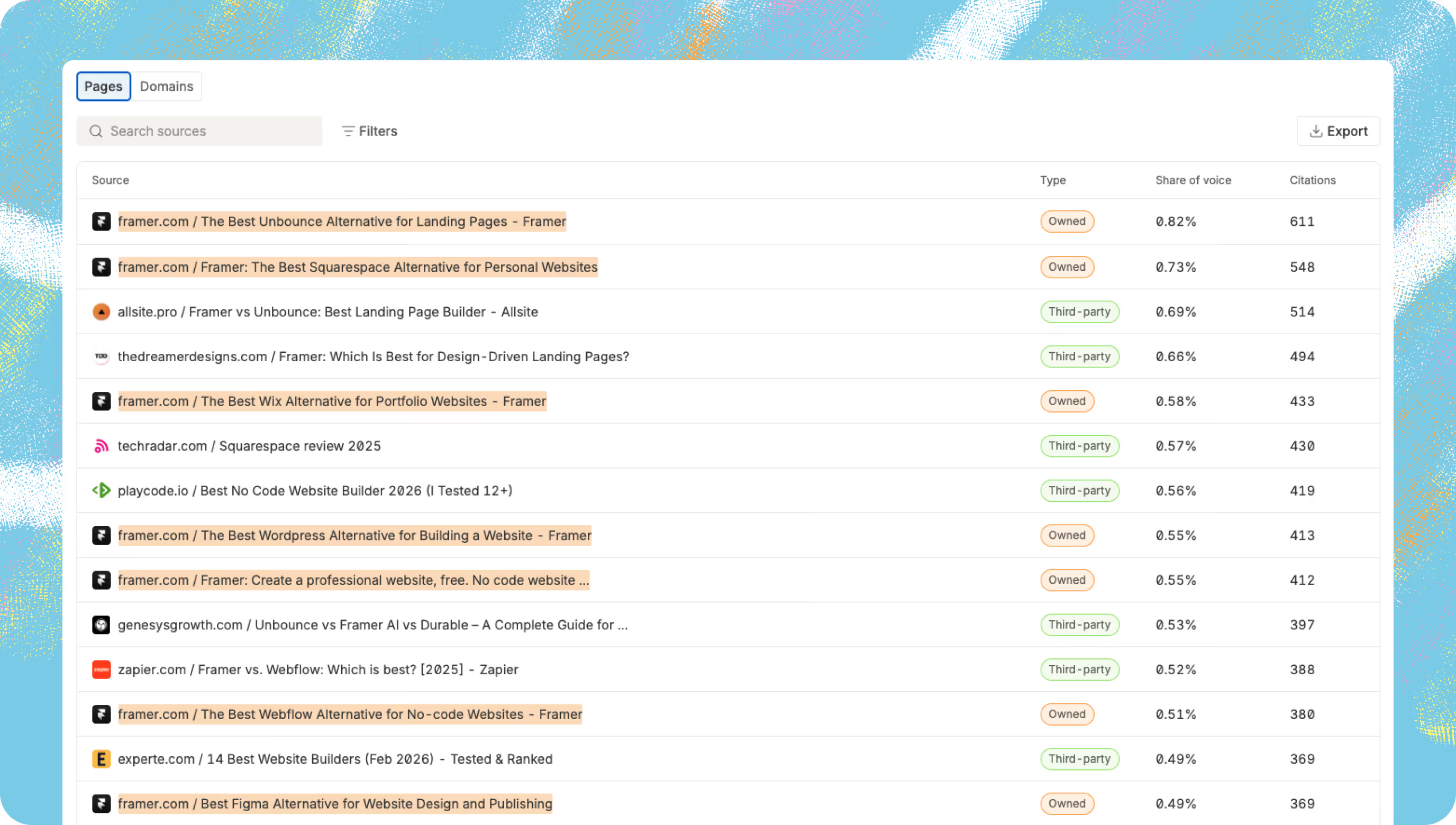

Citation tracking — the action layer starts here

Citations are where monitoring becomes actionable. When Perplexity or Google AI cites a source URL, that's a citation showing exactly where AI-powered search engines pull information from.

Citation tracking reveals what AI relies on and where you’re falling short: competitor comparison pages you lack, third-party review sites you're missing from, and AI-optimized content formats (listicles, FAQs, docs) that AI search engines prefer.

Geographical tracking — why country-level monitoring is non-negotiable

AI visibility changes by country. The same prompt in Spain versus Argentina produces different brand mentions and citations because AI platforms prioritize local sources, language differences affect retrieval, and market-specific training data shapes responses.

For startups expanding internationally, geographical tracking is essential. You might dominate UK prompts with 60% share of voice but have zero visibility in Spain for identical buyer questions. The best AI visibility monitoring tools track prompts across multiple countries.

The 4-step system to monitor AI search visibility

Monitoring AI visibility without a system produces dashboards no one acts on. Here's a complete workflow that should move you from tracking to execution.

Step 1 — Build a prompt set that matches real buyer intent

Start with customer language

Building a monitoring set means finding the exact questions buyers ask AI platforms. These seven methods extract trackable prompts from existing customer data, competitor signals, and AI platforms themselves:

- Mine Search Console for long-tail queries (5+ words) using regex: ^(\S+\s+){4,}\S+

- Analyze competitor search ads from Google Ads Transparency Center — screenshot ads, paste into ChatGPT: “Turn this ad copy into questions customers ask AI”

- Export your Google Ads Search Terms and ask ChatGPT: “Create prompts users ask AI from this data”

- Ask AI directly using the formula: [Best X] + [business size] + [constraint] + [use case/comparison]

- Mine Perplexity's related questions after asking about your brand

- Use ChatGPT follow-ups: Turn suggestions like “tailor based on budget?” into “best CRM for startups on low budget?”

- Check Reddit Answers for common questions about your category
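
If you export queries rather than filtering in the Search Console UI, the same long-tail filter can be applied in a script. A minimal sketch with made-up sample queries: a query of five or more words contains at least four “word plus whitespace” groups followed by a final word.

```python
import re

# Four or more "word + whitespace" groups, then a final word = five or more words.
LONG_TAIL = re.compile(r"^(\S+\s+){4,}\S+")

# Hypothetical exported queries.
queries = [
    "best crm",
    "best crm for small startups",
    "what is the best crm for a five person sales team",
]

long_tail = [q for q in queries if LONG_TAIL.match(q)]
print(long_tail)
```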

Turn questions into prompt clusters

Organize prompts by buyer journey stage:

- Category discovery (“best X for Y”): Buyers know their problem but not solution types.

- Alternatives and comparisons (“X vs Y” or “alternatives to Z”): Critical for share of voice monitoring

- Integrations (“CRM that integrates with Gmail and Slack”): Buyers need stack compatibility

- Use cases (“best CRM for nonprofits”): Industry or size-specific

- Pricing prompts (“how much does X cost”): Address transparency and ROI

- Evaluation prompts (“does X have a mobile app”): Late-stage functionality questions
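
One way to keep clusters manageable is to store the prompt set as a simple mapping and generate the category-discovery prompts from the formula listed earlier. This is a sketch under assumptions: the cluster keys, the template wording, and the example values are all illustrative.

```python
from itertools import product

def build_prompts(category: str, sizes: list[str],
                  constraints: list[str], use_cases: list[str]) -> list[str]:
    """Combine [Best X] + [business size] + [constraint] + [use case]."""
    return [
        f"What's the best {category} for {size} {constraint} {use_case}?"
        for size, constraint, use_case in product(sizes, constraints, use_cases)
    ]

prompt_set = {
    "category_discovery": build_prompts(
        "CRM",
        sizes=["a five-person sales team", "an early-stage SaaS startup"],
        constraints=["under $100/month"],
        use_cases=["that integrates with Gmail", "for outbound sales"],
    ),
    "alternatives": ["alternatives to Salesforce for small teams"],
    "pricing": ["how much does a CRM cost for a five-person team"],
}

for cluster, prompts in prompt_set.items():
    print(cluster, len(prompts))
```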

Prioritize prompts you can actually win

Not all prompts are winnable. Focus on long-tail questions where your ICP and positioning give leverage.

If you're a 10-person startup, "best CRM" isn't winnable. You can’t compete with industry titans like Salesforce and HubSpot. Instead, target “Best CRM for early-stage SaaS startups under $100/month.” Choose prompts where your constraints become advantages and where comprehensive AI search optimization means owning topics that matter.

Step 2 — Measure baseline visibility (and don't fool yourself)

Baseline measurement means running your prompt set, recording where you appear, tracking share of voice, capturing citations, and doing this consistently so you have a reference point.

To establish baseline, define four parameters:

- Which AI search platforms: Target Google AI Overviews, ChatGPT, and Perplexity. Add Google Gemini or Claude if your ICP uses them.

- Which countries: Start with your primary market. Add secondary markets after establishing baseline in the first.

- Which competitors: Choose 3-5 direct competitors for meaningful share of voice calculations.

- What cadence: Weekly is the standard for active monitoring. Monitor daily if you’re shipping fast and testing. Monthly is too slow for iterative teams.

Manual monitoring (good for a first baseline)

For 10-20 prompts, you can monitor manually. Open an incognito Chrome window (or use a VPN for geo checks), ask each prompt on each AI platform, then log this information in a spreadsheet:

- Prompt

- Platform

- Date

- Your mention (yes/no)

- Competitors mentioned

- Context

- Citations
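
If you would rather keep the log as a CSV than a hand-edited sheet, the columns above map onto a small record type. A minimal sketch: the field names and the sample row are assumptions, and the output goes to an in-memory buffer so the example has no side effects.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields

@dataclass
class Observation:
    prompt: str
    platform: str
    run_date: str
    mentioned: bool
    competitors: str  # semicolon-separated brand names
    context: str
    citations: str    # semicolon-separated URLs

# Hypothetical sample row.
rows = [
    Observation(
        prompt="best CRM for early-stage SaaS startups",
        platform="Perplexity",
        run_date="2026-01-05",
        mentioned=False,
        competitors="Salesforce;HubSpot",
        context="listed as top picks",
        citations="https://example.com/best-crm-roundup",
    )
]

# Swap the buffer for open("baseline.csv", "w", newline="") to write a file.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Observation)])
writer.writeheader()
for row in rows:
    writer.writerow(asdict(row))

print(buffer.getvalue())
```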

As the prompt set grows, this becomes too time-consuming. Execution gets inconsistent (fatigue, errors), there is no trend visualization, and it’s hard to calculate share of voice across 100+ data points. Manual checks also don’t capture variance, because you need reruns to see trends.

Manual works for baseline or testing AI visibility as a channel. It's not sustainable for serious search monitoring.

Automated monitoring (what serious teams use)

Automated tools run prompts consistently, extract data automatically, and show you trends. They have key capabilities you can’t afford to miss out on:

- Consistent reruns across all AI search engines you've selected

- Cross-engine coverage from one dashboard

- Geographical views by country without manual VPN switching

- Automatic extraction of brand mentions

- Share of voice calculations

- Trend lines showing performance over weeks

Learning to monitor brand visibility on AI engines starts with Omnia. The platform runs prompts via browser sessions that mirror real user experiences. That matters because AI platforms can respond differently via APIs than they do in consumer interfaces. Browser-based runs are a closer proxy for what buyers actually see.

Step 3 — Diagnose why you're not showing up (citations + gaps)

Monitoring shows where you stand. Diagnosis turns data into decisions about what to fix and where to focus.

Identify missing coverage

Review prompts where competitors appear but you don't. Filter your monitoring data for zero-mention prompts and low share of voice prompts.

Look at AI-generated answers for these prompts. What do competitors have that you lack? Comparison pages you haven't created? Use case pages missing from your site? Integration documentation for tools you support but don't document? Clearer positioning in homepage messaging?

Missing coverage often signals content gaps or positioning gaps. That means you either lack the page or your page doesn't clearly answer the buyer question.
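
The filtering step can be as simple as two list comprehensions over per-prompt results. In this sketch the result structure, the sample numbers, and the low share-of-voice threshold are all assumptions to adapt to your own data.

```python
# Hypothetical per-prompt results aggregated over reruns.
results = {
    "best CRM for early-stage SaaS startups": {"mention_rate": 0.0, "share_of_voice": 0.0},
    "CRM that integrates with Gmail and Slack": {"mention_rate": 0.4, "share_of_voice": 0.08},
    "alternatives to Salesforce for small teams": {"mention_rate": 0.9, "share_of_voice": 0.30},
}

LOW_SOV_THRESHOLD = 0.10  # assumption: tune this for your category

# Prompts where the brand never appeared.
zero_mention = [p for p, r in results.items() if r["mention_rate"] == 0]

# Prompts where the brand appears but holds little share of voice.
low_sov = [
    p for p, r in results.items()
    if r["mention_rate"] > 0 and r["share_of_voice"] < LOW_SOV_THRESHOLD
]

print("Zero-mention prompts:", zero_mention)
print("Low share-of-voice prompts:", low_sov)
```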

Find what AI is relying on

When AI engines mention competitors, which URLs and domains do they cite? This citation analysis becomes your action backlog.

Filter citation data by competitor to see patterns: competitor's own site, third-party review sites, listicles and roundups, Reddit or community discussions, news and press coverage.

These cited sources show you exactly what to create and where to publish. If competitors are cited from G2 reviews, strengthen your G2 presence. If roundup posts drive 30% of citations, earn placement in those.
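
A rough way to see those patterns is to reduce cited URLs to domains and count them. The URLs below are made-up examples; only `urlparse` and `Counter` from Python's standard library are used.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical citations collected from answers that mention a competitor.
citations = [
    "https://www.g2.com/categories/crm",
    "https://www.reddit.com/r/sales/comments/example/best_crm/",
    "https://www.g2.com/products/example-crm/reviews",
    "https://competitor.example.com/compare",
]

def domain(url: str) -> str:
    """Lowercased host with a leading 'www.' stripped."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host

counts = Counter(domain(u) for u in citations)
for name, n in counts.most_common():
    print(f"{name}: {n} citations ({n / len(citations):.0%})")
```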

Separate problems into three buckets

Once you've analyzed citations and coverage, sort problems into three key categories:

- Content gap: You don't have the page AI needs. Competitors have detailed comparison pages or use-case guides for target verticals that you haven’t created.

- Authority gap: You have content but AI answer engines cite competitors or third parties instead. This means you lack external signals like backlinks, brand mentions on authoritative sites, and review platform presence.

- Clarity gap: Your content exists but isn't cited. You might be missing site structure, schema markup, or scannable formatting.

Most brands have all three problems. Prioritize based on prompt coverage potential. If you're missing 20 pages, create content first. If pages exist but you have zero external authority, focus on placement.
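
For triage, the three buckets can be approximated with a few yes/no checks per prompt. This is a deliberately simplified heuristic, not a definitive diagnosis: the three input flags are assumptions about what you can observe, and real cases often overlap.

```python
def classify_gap(has_page: bool, is_cited: bool, has_external_signals: bool) -> str:
    """Rough triage of why a brand is missing for a prompt."""
    if not has_page:
        return "content gap"      # nothing on the site answers the question
    if is_cited:
        return "no gap"           # the page exists and AI already cites it
    if not has_external_signals:
        return "authority gap"    # page exists, but no reviews or third-party mentions
    return "clarity gap"          # page and authority exist, yet it is not cited

# Hypothetical prompts with their observed flags.
backlog = {
    "best CRM for nonprofits": classify_gap(False, False, False),
    "CRM that integrates with Gmail": classify_gap(True, False, False),
    "does X have a mobile app": classify_gap(True, False, True),
}
print(backlog)
```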

Step 4 — Turn signals into execution (then re-measure)

Diagnosis is only valuable when it drives action. The point of monitoring is to ship improvements.

Your citation analysis reveals exactly what formats AI engines prefer and which domains they trust. Use this intelligence to prioritize what to create, what to optimize, and where to publish.

For comprehensive guidance on content optimization, placement strategies, and technical implementation, see our playbook on how to improve brand mentions and visibility in ChatGPT.

Re-measure on a weekly cadence

After shipping, rerun prompts weekly to catch real movement as AI engines index your changes. While traditional SEO takes 3-6 months to show results, AI visibility can move significantly faster — with the right platform. INDYA worked with Omnia and went from 16% to 53% visibility in their main topic within just 10 days. This 3.3x increase was achieved after publishing one well-structured listicle with decision frameworks, comparison tables, FAQs, and proper schema. They jumped from 5th to 2nd most-mentioned brand, outcompeting higher-authority competitors who hadn't built for AI yet.

To see if your brand is achieving similar results, track these metrics weekly: visibility rate trends, share of voice by prompt cluster, citation growth, top cited domains, and prompt-level changes showing which content works. This weekly cycle turns monitoring into a feedback loop—ship, measure what moved, iterate.
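
The weekly comparison itself is simple arithmetic. A minimal sketch, using made-up visibility rates in whole percentage points keyed by prompt cluster:

```python
# Hypothetical visibility rates in percent, keyed by prompt cluster.
last_week = {"category_discovery": 20, "alternatives": 35, "integrations": 10}
this_week = {"category_discovery": 32, "alternatives": 33, "integrations": 10}

# Change in percentage points per cluster; new clusters start from 0.
deltas = {
    cluster: this_week[cluster] - last_week.get(cluster, 0)
    for cluster in this_week
}

# Largest movements first, regardless of direction.
movers = sorted(deltas.items(), key=lambda item: abs(item[1]), reverse=True)

for cluster, change in movers:
    print(f"{cluster}: {change:+d} points")
```

Sorting by absolute change surfaces both wins and regressions, so the weekly review starts with whatever moved most.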

What to monitor (metrics that actually matter)

Most dashboards show too much. Here's the minimum viable set of metrics for lean teams running AI visibility tracking:

- Visibility rate: Percentage of your prompt set where your brand appears

- Share of voice: Your brand's percentage of total mentions in answer sets

- Citation frequency: How often your owned content (blog, docs, product pages) gets cited versus third-party sources

- Top cited domains: Which domains AI answer engines trust most, both yours and competitors'

- Geographical deltas: Visibility rate and share of voice by country

- Prompt coverage by cluster: Which clusters perform well versus lag

Focus on creating useful content, earning citations, and making your site parseable.
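
Visibility rate and geographical deltas from the list above can be computed from the same run log. A sketch under assumptions: each record is a (prompt, country, mentioned) tuple, and the sample data is invented.

```python
from collections import defaultdict

# Hypothetical run log: (prompt, country, brand_mentioned).
runs = [
    ("best CRM for startups", "UK", True),
    ("best CRM for startups", "ES", False),
    ("CRM that integrates with Gmail", "UK", True),
    ("CRM that integrates with Gmail", "ES", False),
    ("best CRM for nonprofits", "UK", False),
    ("best CRM for nonprofits", "ES", False),
]

def visibility_rate(records) -> float:
    """Share of distinct prompts with at least one brand mention."""
    prompts = {p for p, _, _ in records}
    hits = {p for p, _, mentioned in records if mentioned}
    return len(hits) / len(prompts) if prompts else 0.0

by_country = defaultdict(list)
for record in runs:
    by_country[record[1]].append(record)

rates = {country: visibility_rate(recs) for country, recs in by_country.items()}
for country, rate in rates.items():
    print(f"{country}: {rate:.0%} visibility")
```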

A 15-day monitoring plan for startups and scale-ups

Here's an executable plan for teams with limited bandwidth — designed for 10-20 person startups shipping fast. This plan focuses on building AI search visibility monitoring infrastructure so you can start executing visibility improvements immediately.

Week 1 (Days 1-7): Foundation

Days 1-3: Build prompt set, define competitors, select engines/countries

- Build your initial prompt set (20-30 prompts across 3-4 clusters)

- Define your competitor set (3-5 direct competitors)

- Select AI engines to track (minimum: Google AI Overviews, ChatGPT, Perplexity)

- Choose countries for geographical strategy (start with primary market)

- Decide on monitoring cadence (weekly recommended for active teams)

- Document your tracking framework so it's repeatable

Days 4-7: Set up monitoring infrastructure (manual or automated)

- If using manual tracking: Create tracking spreadsheet with columns for prompt, platform, date, mention (yes/no), competitors mentioned, citations, share of voice

- If using automated tools: Configure Omnia or a similar AI search visibility tool with your prompt set, competitors, engines, and countries. Set up automated reruns (daily or weekly)

- Create dashboard views for visibility rate, share of voice, citation analysis

- Establish alert thresholds for significant changes

Week 2 (Days 8-15): Baseline + action

Days 8-10: Run baseline across all platforms and geos

- Run complete prompt set across the AI engines and countries you defined in week 1

- Record baseline metrics: visibility rate by platform and country, share of voice by prompt cluster, citation patterns, competitor overlap analysis

- Compare cross-platform patterns (which engines favor your brand, which don't)

- Document baseline data as your reference point for all future tracking

Days 11-12: Analyze baseline, identify gaps, prioritize fixes

- Analyze baseline data

- Filter for zero-mention prompts and low share of voice prompts

- Extract citation patterns by platform and competitor

- Sort gaps into content gaps, authority gaps, clarity gaps

- Prioritize top 3-5 highest-impact fixes based on prompt coverage potential

- Create an action backlog: specific pages to create, domains to target for placement, technical fixes needed

Days 13-15: Ship 2-3 quick wins, establish weekly tracking cadence

- Ship 2-3 quick wins based on your prioritized fixes

- Schedule weekly rerun to track movement

- Establish your ongoing monitoring rhythm: weekly prompt reruns, weekly analysis of deltas, prioritized backlog of improvements, regular shipping of fixes

How Omnia helps you monitor — and act

Omnia exists to help brands win visibility in AI engines by turning real AI signals into action:

- Geo-first monitoring: Track across Google AI Overviews, ChatGPT, and Perplexity with country-level visibility — know which markets need localized content.

- Citation intelligence: See which URLs and domains AI answer engines cite when mentioning brands in your category, showing which sources to target.

- Action layer: Unlike tools that stop at monitoring, Omnia recommends what to create next, based on citations. Turns insights into execution — not another dashboard to ignore.

- Built for startups: Focuses on prompts you can actually win — long-tail buyer questions where positioning gives you leverage. Designed for lean teams moving fast.

Book a demo to see how Omnia tracks brand visibility on AI platforms and surfaces citation opportunities. Start tracking your first prompts this week with a 14-day free trial on Omnia’s Growth plan.

FAQs

How to monitor brand AI visibility?

Start by building a prompt set from real buyer questions, then use automated tools to track your brand across major AI models — measuring visibility rate, share of voice for competitor benchmarking, and citations that influence brand perception. The key is monitoring AI visibility across platforms consistently, then using that data for generative engine optimization (GEO): creating content AI engines cite and earning placements on domains they trust.

What's more important, mentions or citations?

Both are key metrics for AI search optimization, but brands that earn both a mention and a citation are much more likely to resurface in future AI answers. Citations show you which sources AI answer engines trust, turning brand visibility tracking into a backlog of what to create and where to publish.

How long does it take to improve AI visibility once I start tracking?

New content typically takes 2-4 weeks to appear in AI answers, so expect gradual improvement rather than overnight spikes in brand presence. Monitoring brand visibility on AI platforms with consistent reruns gives you AI search insights to measure progress weekly — track visibility rate and share of voice trends to see what's working.

Can I monitor AI visibility manually?

Manual monitoring works for an initial baseline with 10-20 prompts, but it doesn't scale or capture variance in AI mentions across multiple reruns. For scalable brand monitoring, you need automated tools that rerun prompts consistently across Google AI Mode, ChatGPT, Perplexity, and other platforms to establish reliable patterns.

How often should I re-run prompts?

Weekly reruns are standard for active brand presence monitoring, giving you enough AI search insights to spot trends without overwhelming your workflow. Daily reruns make sense if you're shipping content fast and testing changes, but monthly is too slow for teams executing systematic AI search optimization.
