Google AI Overviews cite specific URLs and domains, and the pages they cite are not always the highest-ranking ones. Visibility now means getting cited, not just getting clicks. The fastest wins come from three places: making sure your pages are crawlable and indexed, formatting content so AI can extract a clean answer, and publishing on sources Google already trusts. Measurement shifts too: track citation presence and share of voice, not just organic traffic. If you only do three things this week, audit whether you appear in AI Overviews for your top 30 queries, identify which domains keep getting cited instead of you, and add a tight answer capsule to your highest-priority pages.

Organic traffic is doing something unfamiliar. It is not dropping in the way a penalty would cause, and it is not spiking the way a viral piece might. It is quietly flattening, and for a growing number of queries, the reason is sitting right at the top of the search results page: a Google AI Overview that synthesizes an answer, cites a handful of sources, and sends the user on their way without a click.

If your domain is not in those citations, you are not losing to a competitor who wrote better content. You are losing to a competitor whose content was easier for Google to extract, trust, and summarize.

This guide gives you a step-by-step playbook for changing that. You will learn how to confirm whether you appear in AI Overviews today, how to reverse-engineer which sources keep getting cited and why, and how to structure your pages so Google can pull from them cleanly. No guesswork, no vague "produce quality content" advice. Just a repeatable process you can run by yourself this week and measure the week after.

What it means to be visible in Google AI Overviews

Visibility in Google AI Overviews means one of three things:

- Your URL is cited as a source inside the generated answer

- Your brand is mentioned by name within the synthesized response

- Your content shapes the answer even without explicit attribution

All three matter. None of them are guaranteed by ranking position alone, and none of them are permanent once earned.

What visibility does not mean is worth being equally clear about:

- It does not mean consistent clicks

- It does not mean a stable placement you can set and forget

- It does not mean that ranking on page one automatically makes you a cited source

Brands are being excluded from AI Overviews every day while their organic rankings stay exactly where they were. The signals that earn citation are different from the signals that earn a blue link, and conflating the two is the most common reason teams waste months optimizing for the wrong thing.

The practical implication is simple: if you are not tracking whether your domain appears in AI Overview citations specifically, you do not actually know where you stand in AI search. Traffic reports will not tell you. Rank trackers will not tell you. You need to be looking at the citation layer directly.

How to show up in AI Overviews: AI Overview SEO vs traditional SEO

Traditional SEO has a straightforward value chain:

- You rank a page

- A user searches and sees your result

- They click

The better your position, the more clicks you earn. Optimization is mostly about getting to position one and keeping it there.

AI Overviews break that chain at two points.

First, Google's model synthesizes an answer before the user ever sees a list of results. Second, the sources it cites are not always the top-ranking pages. A page sitting at position four or five can get cited. A page sitting at position one can get skipped entirely.

As we cover in more depth in our guide on how to monitor AI search visibility, the measurement framework has to change before the optimization strategy can follow.

What stays the same:

- Crawlability and indexability are still the floor

- Helpful, well-structured content still wins

- Page experience signals still matter

What changes:

- Content needs to be extractable as a direct, self-contained answer

- It needs to be corroborated by sources Google already trusts

- Structure matters more than keyword density

Ranking in AI Overviews is really a citation problem. And citation is earned through structure, trust, and specificity, not keyword placement alone.

The teams who figure this out earliest will hold a compounding advantage. Understanding how AI visibility platforms approach measurement differently from traditional rank trackers is a useful first step in calibrating your tooling to match the new reality.

How Google AI Overviews work (the parts SEOs can influence)

Here is what a Google AI Overview actually does: it draws on pages Google has crawled, synthesizes information from multiple sources, and constructs what it judges to be the most complete, trustworthy answer to a query. It is not promoting a winner. It is building a response, then deciding which sources deserve credit for it.

What this means for your pages comes down to three things:

- Google needs to crawl and render your content without friction

- The model needs to trust the source, not just find it

- Your answer needs to be easy to extract, not buried three paragraphs deep

You cannot reach into the model and change how it thinks. But you can control every input it relies on. What it can access. What it trusts. How cleanly it can lift an answer from your page. That is where the leverage is.

The citation layer: why "ranking in AI Overviews" is really "getting cited"

Stop thinking about AI Overviews as a ranking problem. It is a citation problem.

When an AI Overview appears, Google is not handing a trophy to position one. It is assembling a set of sources it trusts enough to build an answer from. Your job is to be in that set, and to stay there across a cluster of related queries.

Here is what the citation layer actually looks like in practice:

- The same handful of domains appear repeatedly across related queries

- Being ranked first organically helps, but it does not guarantee a citation

- URL-level patterns are visible: specific page types and formats get pulled more than others

This is why citation analysis for AI search has become its own discipline, separate from traditional backlink work. Backlinks tell Google's algorithm what to rank. Citations tell the model what to trust and extract. The two overlap, but treating them as the same thing is a mistake that will cost you months of wasted effort.

Getting mentioned in Google AI Overviews consistently comes down to two things: being a repeatedly cited source across related queries, and having clear entity signals so Google knows exactly who you are and what you can be trusted to explain. The rest of this playbook builds toward both.

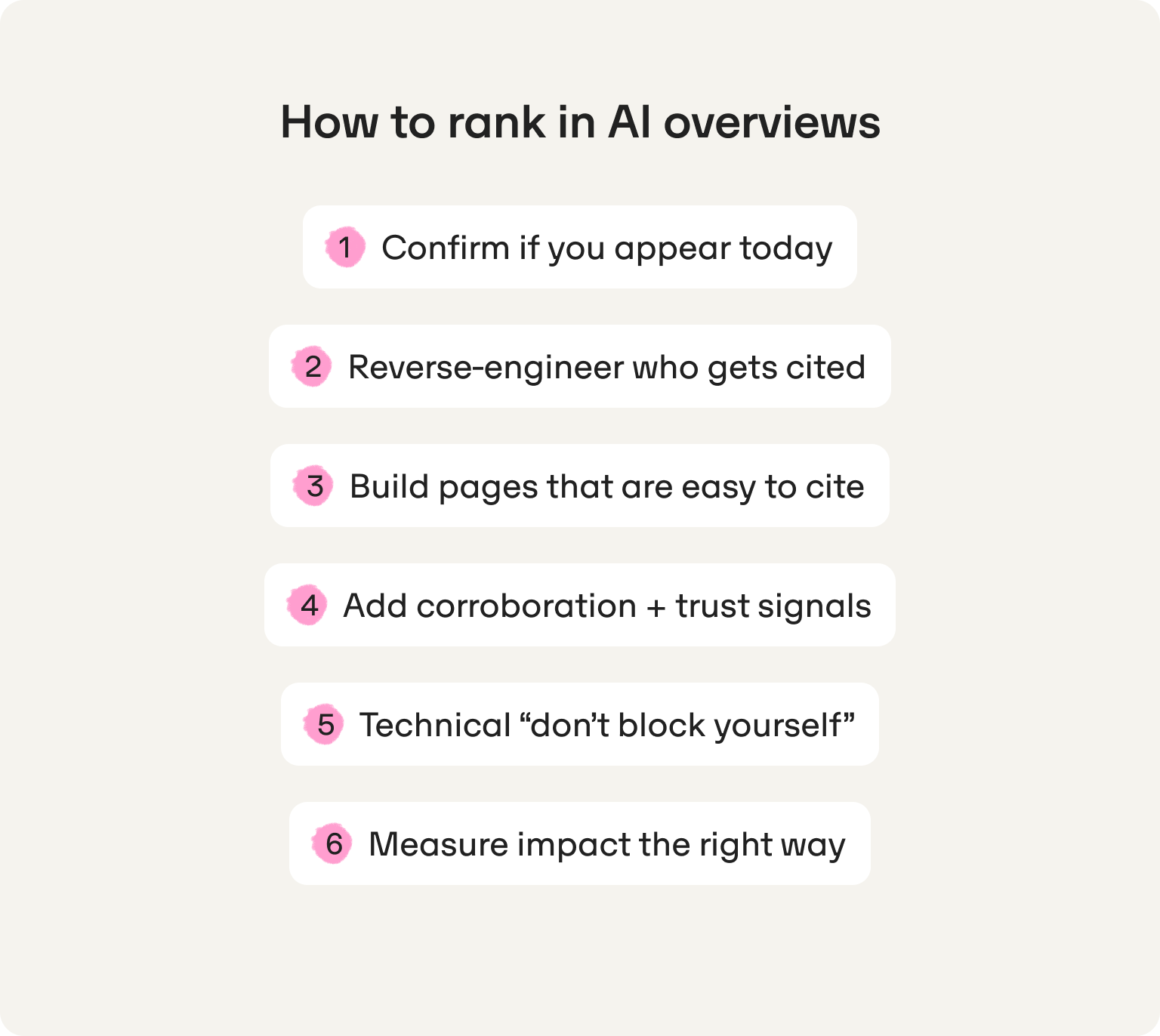

Step-by-step SOP: how to rank in AI Overviews (without guessing)

Most teams approach AI Overview visibility the same way they approached SEO 15 years ago: publish more to scale, hope for the best, and check rankings once a month. That approach did not work then and it will not work now.

What follows is a repeatable, ordered workflow. Run it once to establish your baseline. Run it weekly to track progress and catch shifts before competitors do.

Step 1: Confirm if you appear today (and by country)

Before you optimize anything, you need to know where you actually stand. Most teams skip this step and jump straight to content changes. That is how you end up improving pages that were never the problem.

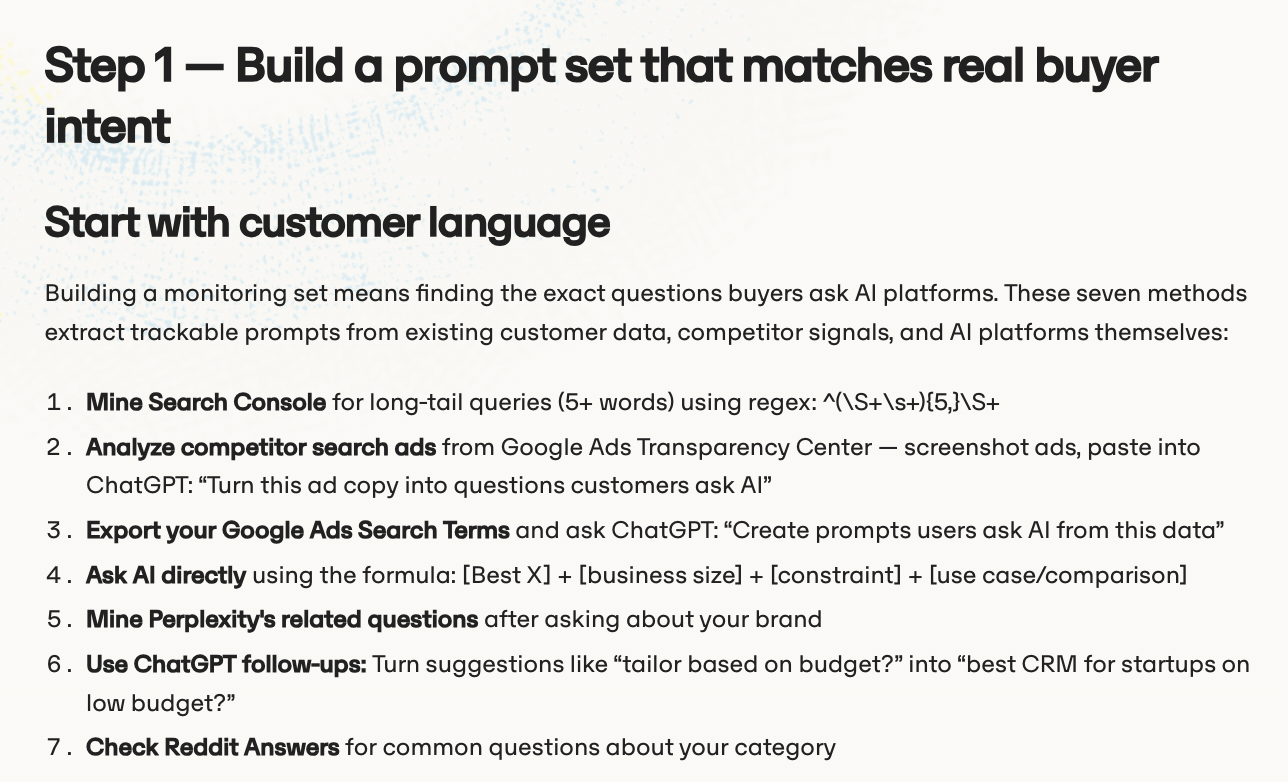

Build your seed query list:

- Start with 30 to 100 high-intent queries covering brand, category, and comparison terms

- Include "best," "compare," "how to," and "what is" variations

- Prioritize queries where you know buyers are making decisions
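If you want to draft the list faster, a few lines of Python can expand a handful of topics into those variations. This is a minimal sketch: the modifier templates and the 100-query cap are assumptions based on the ranges above, and the output still needs a human pass to drop queries nobody actually searches.

```python
from itertools import product

# Hypothetical templates based on the "best", "compare", "how to" and "what is" variations.
MODIFIERS = ["best {}", "compare {}", "how to choose {}", "what is {}"]


def build_seed_queries(topics, modifiers=MODIFIERS, limit=100):
    """Expand each topic with every modifier, dropping duplicates, capped at `limit`."""
    seen = set()
    queries = []
    for topic, template in product(topics, modifiers):
        query = template.format(topic.strip().lower())
        if query not in seen:  # "CRM software" and "crm software " collapse to one query
            seen.add(query)
            queries.append(query)
    return queries[:limit]


for query in build_seed_queries(["CRM software", "sales pipeline tool"]):
    print(query)
```

Brand and comparison terms can go straight into the topic list; the script only handles the mechanical expansion.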

For each query, manually check and record:

- Does an AI Overview appear at all?

- Is your brand mentioned inside the answer?

- Is your domain cited as a source?

- Which competitor domains are cited instead?

Why country matters: AI Overview behavior varies significantly by market. A query that triggers an AI Overview (AIO) in the US may not trigger one in the UK, and the cited sources can differ entirely between the two. If you are targeting multiple markets, you need localized tracking, not a single global snapshot.
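To put a number on how much two markets differ, you can compare the sets of domains cited for the same query in each country. The sketch below uses Jaccard overlap (shared domains divided by all domains seen); that metric is our choice for illustration, not something Google reports, and the domains are hypothetical.

```python
def citation_overlap(cited_by_country):
    """Jaccard overlap of cited domains for one query, for every pair of countries.

    `cited_by_country` maps a country code to the set of domains cited in that
    market's AI Overview. Leave out countries where no AI Overview appeared.
    """
    countries = sorted(cited_by_country)
    overlap = {}
    for i, first in enumerate(countries):
        for second in countries[i + 1:]:
            a, b = set(cited_by_country[first]), set(cited_by_country[second])
            union = a | b
            # None means neither market cited anything, so there is nothing to compare.
            overlap[(first, second)] = len(a & b) / len(union) if union else None
    return overlap


print(citation_overlap({
    "US": {"vendor-a.com", "review-site.com", "forum.example"},
    "UK": {"review-site.com", "uk-publisher.co.uk"},
}))
# {('UK', 'US'): 0.25}
```

A low overlap for a high-priority query is a signal to track and optimize that market separately.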

Step 2: Reverse-engineer who gets cited (URLs and domains)

Once you know where you are missing, the next question is: who is showing up instead, and why?

For each target query where you are not cited, capture:

- Which domains appear consistently across related queries

- Which URL types get pulled: guides, documentation, listicles, news, forums

- Content format patterns: are the cited pages using bullet lists, tables, short definitions, or step-by-step structures?

- Freshness signals: are cited pages recently updated, or are they evergreen pieces with strong authority?

Look for citation patterns, not one-off wins. If the same five domains keep appearing across a cluster of related queries, that tells you something about what Google trusts in your category, not just for one search term.
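Once you have logged a few dozen queries, spotting those repeat domains is a simple counting job. A minimal sketch, assuming you have recorded the cited domains for each query in a cluster (the example data is made up):

```python
from collections import Counter


def recurring_domains(citations, min_queries=3):
    """Return (domain, query_count) pairs for domains cited in at least `min_queries` queries.

    `citations` maps each query in a cluster to the domains cited in its AI Overview.
    """
    counts = Counter()
    for domains in citations.values():
        counts.update(set(domains))  # count a domain once per query, even if cited twice
    return [(domain, n) for domain, n in counts.most_common() if n >= min_queries]


cluster = {
    "best crm for startups": ["vendor-a.com", "review-site.com", "vendor-a.com"],
    "crm for small sales teams": ["review-site.com", "blog.example"],
    "compare crm pricing": ["review-site.com", "vendor-a.com"],
}
print(recurring_domains(cluster, min_queries=2))
# [('review-site.com', 3), ('vendor-a.com', 2)]
```

Counting per query rather than per citation keeps one page that cites a domain twice from inflating its apparent authority.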

This is also where ChatGPT rank tracking methodology becomes relevant: the same citation intelligence principles that apply to Google AI Overviews apply across AI engines. Understanding who dominates across multiple platforms gives you a clearer picture of which sources have built genuine authority in your space.

At this stage you are not making changes yet. You are mapping the citation landscape so that every decision you make next is grounded in what is actually happening, not what you assume should be happening.

Step 3: Build pages that are easy to cite (answer capsules and follow-ups)

This is where most of the content leverage lives. Google's model needs to be able to lift a clean, self-contained answer from your page quickly. If your content buries the answer in context and qualification, it will get skipped for a page that leads with it.

The answer capsule format:

After every H2 or H3, open with a one- to two-sentence direct answer before you expand. It should be:

- Declarative and unambiguous

- Written in plain language, using your core terms rather than swapped-in synonyms or clever wordplay

- Accurate without caveats that make it harder to extract

Example structure:

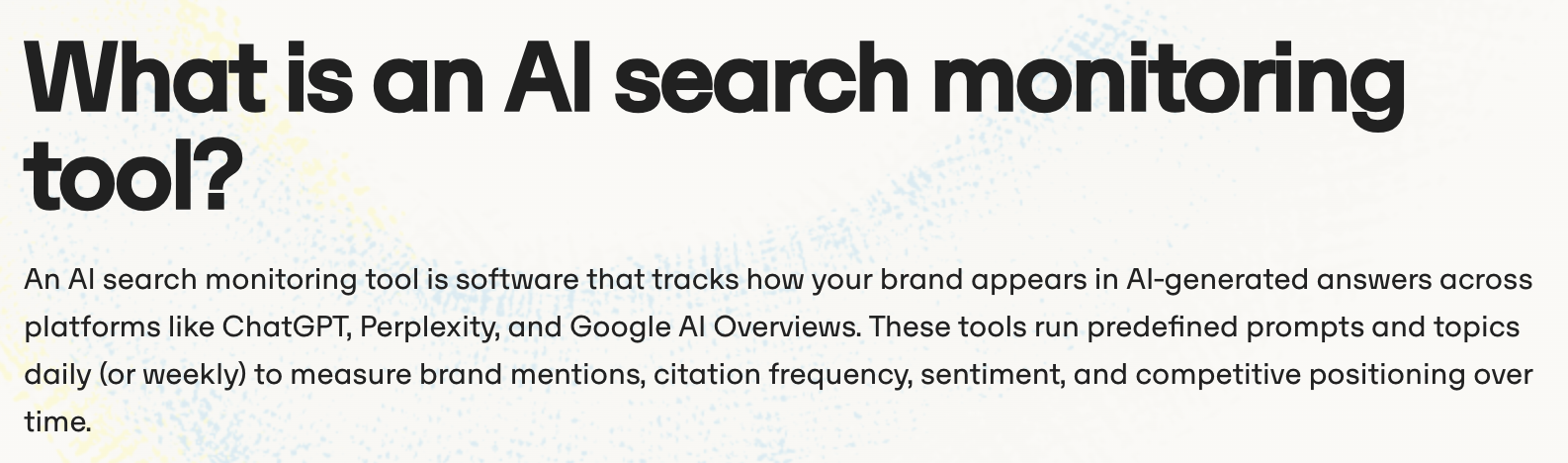

H3: What is an answer capsule?

An answer capsule is a short, self-contained statement placed at the top of a content section that directly answers the implied question of the heading. It is written to be extractable by AI engines without requiring surrounding context to make sense.

[Expansion follows: bullets, steps, examples, tables.]

Add "next question" sections. After covering a topic, anticipate the two or three questions a reader would naturally ask next and address them on the same page. AI models reward content that handles a full line of inquiry, not just a single query in isolation.

Step 4: Add corroboration and trust signals (E-E-A-T, entities, sources)

Structure gets you in the door. Trust signals keep you there.

Google is not just asking "does this page answer the question?" It is asking "should I trust this page enough to cite it in front of millions of users?" The following signals help answer that question in your favor:

Firsthand experience cues:

- Include process screenshots, real workflow examples, and specific constraints you have encountered

- Describe what broke, what worked, and what you would do differently, not just the polished outcome

Author and entity signals:

- Named authors with visible bios, relevant credentials, and links to their broader work

- A clear, consistent About page that explains who you are and what gives you the right to speak on this topic

- Consistent brand naming across every page, with no abbreviations or variations that confuse entity recognition

Source citations:

- Link to primary sources: official Google documentation, standards bodies, and original research

- Cite data with clear labels: sample size, date range, and any limitations

Freshness signals:

- Add a visible "last updated" date to pages covering volatile topics

- Include a brief change log note when you make substantive updates, not cosmetic ones

Step 5: Technical "don't block yourself" checklist

None of the content work above matters if Google cannot access and render your pages cleanly. This is the floor, not the ceiling.

Run through this checklist before publishing or updating any AI Overview target page:

Crawl and index:

- Page returns HTTP 200

- Not blocked in robots.txt

- Canonical tag points to the correct URL

- Page is included in your XML sitemap

Rendering:

- Core content is not hidden behind heavy JavaScript without server-side rendering

- Google can render the page fully (test in Google Search Console's URL Inspection tool)

Structure:

- Heading hierarchy is clean: one H1, logical H2 and H3 structure below it

- Semantic HTML is used throughout

Performance:

- Core Web Vitals reach at least the "needs improvement" range, with "good" as the target

- Page loads and is usable on mobile without layout shifts

Hand this list to your dev team as-is. There is no interpretation needed.
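If your dev team wants to automate part of the list, several checks can be scripted with Python's standard library. This sketch assumes you have already fetched the page's status code, HTML, robots.txt, and sitemap yourself, so it makes no network calls. It does not cover rendering or Core Web Vitals, which still belong in URL Inspection and your performance tooling. One caveat: Python's `urllib.robotparser` applies the first matching rule in file order, while Google uses the most specific match, so confirm any borderline robots.txt result in Search Console.

```python
import xml.etree.ElementTree as ET
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


class _PageScanner(HTMLParser):
    """Collects the canonical URL and counts <h1> tags."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.h1_count = 0

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")
        elif tag == "h1":
            self.h1_count += 1


def audit_page(url, status, html, robots_txt, sitemap_xml, user_agent="Googlebot"):
    """Run the scriptable items from the checklist against pre-fetched content."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    scanner = _PageScanner()
    scanner.feed(html)
    root = ET.fromstring(sitemap_xml)
    sitemap_urls = {(loc.text or "").strip() for loc in root.findall(".//sm:loc", SITEMAP_NS)}
    return {
        "http_200": status == 200,
        "allowed_by_robots": robots.can_fetch(user_agent, url),
        "canonical_matches": scanner.canonical == url,
        "in_sitemap": url in sitemap_urls,
        "single_h1": scanner.h1_count == 1,
    }


report = audit_page(
    url="https://www.example.com/guide",
    status=200,
    html='<link rel="canonical" href="https://www.example.com/guide"><h1>Guide</h1>',
    robots_txt="User-agent: *\nDisallow: /private/",
    sitemap_xml='<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
                '<url><loc>https://www.example.com/guide</loc></url></urlset>',
)
print(report)
```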

Step 6: Measure impact the right way (visibility does not equal clicks)

If you are measuring the success of this work purely through organic click data, you will undercount your wins and misread your losses.

Track these signals weekly:

- AIO presence rate: For your seed query list, what percentage of queries now trigger an AI Overview that includes your domain as a cited source?

- Citation share of voice: Across your target query cluster, how does your citation frequency compare to the top three competitor domains?

- Brand mention frequency: How often is your brand named inside the generated answer, separate from citation?

- Branded search and direct traffic: As AI visibility grows, branded search tends to follow. Use it as a proxy for assisted awareness.
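The first two metrics are easy to compute from your weekly log. In this sketch, share of voice is each domain's citation count divided by the total citations earned by you plus the competitors you track; that definition is our assumption, so adjust it to match whatever your stakeholders already use. Domains and rows are hypothetical.

```python
from collections import Counter


def citation_metrics(rows, our_domain, competitors):
    """Compute AIO presence rate and citation share of voice from one week of tracking rows.

    Each row is a dict with 'aio_present' (bool) and 'cited_domains' (list of domains).
    """
    tracked = [our_domain, *competitors]
    cited_rows = sum(1 for r in rows if r["aio_present"] and our_domain in r["cited_domains"])
    counts = Counter()
    for r in rows:
        if r["aio_present"]:
            # Count each tracked domain at most once per query.
            counts.update(d for d in set(r["cited_domains"]) if d in tracked)
    total_citations = sum(counts.values())
    return {
        # Denominator is every seed query, whether or not an AI Overview appeared.
        "aio_presence_rate": cited_rows / len(rows) if rows else 0.0,
        "share_of_voice": {d: counts[d] / total_citations for d in tracked} if total_citations else {},
    }


week = [
    {"query": "q1", "aio_present": True, "cited_domains": ["ours.com", "rival-a.com"]},
    {"query": "q2", "aio_present": True, "cited_domains": ["rival-a.com", "rival-b.com"]},
    {"query": "q3", "aio_present": False, "cited_domains": []},
    {"query": "q4", "aio_present": True, "cited_domains": ["ours.com"]},
]
print(citation_metrics(week, "ours.com", ["rival-a.com", "rival-b.com", "rival-c.com"]))
```

Run it on the same seed list every week so the trend, not any single snapshot, is what you report.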

Set stakeholder expectations clearly. AI Overview visibility builds brand presence and shapes buyer decisions before a click ever happens. The value is real, but it shows up in pipeline and brand lift before it shows up in Google Analytics. Teams that understand this early avoid the trap of pulling back on the strategy right before it starts compounding.

For a deeper look at how to track brand presence across AI engines specifically, our guide on how to improve brand visibility in ChatGPT covers the measurement approach in detail.

Best practices for improving visibility in Google AI Overviews (content that wins citations)

Getting your technical foundation right opens the door. What you publish through that door determines whether Google reaches for your content by default or scrolls past it entirely.

The following practices map directly to how AI Overviews extract and synthesize content. Each one is actionable today.

"Answer-first" sections (built for extraction)

The single biggest structural mistake teams make is burying the answer. An introduction that takes three paragraphs to arrive at the point is fine for a magazine. It is invisible to an AI model looking for something it can extract and cite cleanly.

The rule is simple: put the direct answer in the first 20 to 40 words of every section.

That means:

- Open with a declarative statement, not a question, a statistic, or a scene-setter

- Keep the sentence structure tight and unambiguous

- Use the same terminology consistently throughout the page; clever synonyms introduce entity confusion

What this looks like in practice:

Weak opening: "There are many factors that can influence how Google decides to include content in an AI Overview, and understanding them requires looking at the problem from multiple angles..."

Strong opening: "Google AI Overviews cite pages that lead with a direct, extractable answer and are supported by corroborating trust signals. Structure and clarity are the two fastest levers."

The expansion can follow immediately after. Bullets, steps, examples, and context all belong below the capsule, not before it.
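If you are auditing many pages, a rough script can flag sections whose opening sentence runs long or opens with a question. This is a heuristic sketch: it splits sentences naively on terminal punctuation and only measures length and form, so a human still has to judge whether the opening actually answers the heading.

```python
import re


def capsule_report(section_text, max_words=40):
    """Measure a section's opening sentence against the 40-word answer-first guideline."""
    first_paragraph = section_text.strip().split("\n\n")[0]
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    first_sentence = re.split(r"(?<=[.!?])\s+", first_paragraph, maxsplit=1)[0]
    word_count = len(first_sentence.split())
    return {
        "first_sentence_words": word_count,
        "within_limit": word_count <= max_words,
        "opens_with_question": first_sentence.rstrip().endswith("?"),
    }


strong = ("Google AI Overviews cite pages that lead with a direct, extractable answer and are "
          "supported by corroborating trust signals. Structure and clarity are the two fastest levers.")
print(capsule_report(strong))
# {'first_sentence_words': 19, 'within_limit': True, 'opens_with_question': False}
```

Note that the weak opening quoted above also fits under 40 words, which is exactly why the length check is a filter, not a verdict.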

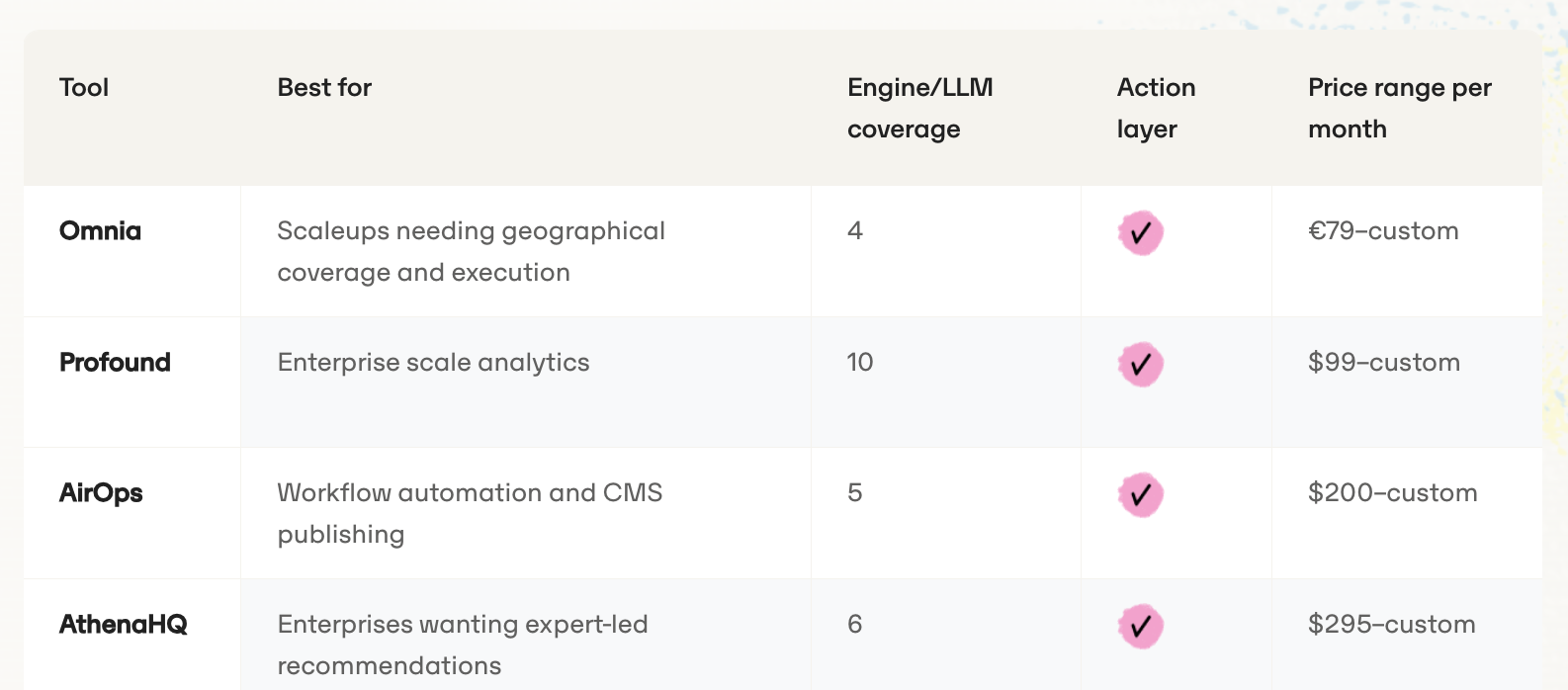

Comparison tables that AI can summarize (and cite)

Comparison content is one of the most consistently cited formats in AI Overviews, particularly for "best," "vs," and "how to choose" queries. The reason is structural: a well-built table gives the model exactly what it needs to synthesize a recommendation without having to interpret narrative prose.

Build comparison tables that follow these rules:

- One table per intent: pricing comparison, feature comparison, and pros/cons each get their own table

- Column names should be simple and descriptive, not clever

- Keep cell content short: five to ten words per cell is enough

- Add two to three sentences of interpretation underneath the table

That last point matters more than most teams realize. A table alone is data. A table followed by a short interpretive paragraph gives the model both a structured asset to reference and a narrative it can potentially quote.
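If your comparison tables come from a spreadsheet or CMS export, a small helper can enforce the short-cell rule before the table is published. A minimal sketch in Python; the 10-word limit mirrors the guideline above, and the tool names and prices are placeholders.

```python
from html import escape


def render_comparison_table(columns, rows, max_words=10):
    """Render a plain HTML comparison table, rejecting ragged rows and overlong cells."""
    for row in rows:
        if len(row) != len(columns):
            raise ValueError(f"Row {row!r} has {len(row)} cells; expected {len(columns)}")
        for cell in row:
            if len(str(cell).split()) > max_words:
                raise ValueError(f"Cell too long for a scannable table: {cell!r}")
    head = "".join(f"<th>{escape(c)}</th>" for c in columns)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"


print(render_comparison_table(
    ["Tool", "Starting price", "Best for"],
    [["Tool A", "$20 per user/month", "Small sales teams"],
     ["Tool B", "Free tier available", "Solo founders"]],
))
```

The two to three sentences of interpretation still need to be written by hand underneath the rendered table.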

Multimodal support: images and video that reinforce the answer

Original visuals are an underused citation signal. Most competing content in any given category uses stock images or no images at all. That is a gap worth exploiting.

What to include:

- Original screenshots that show a real process, not a polished marketing graphic

- Diagrams that explain a concept the text describes, not decorate it

- Alt text written to describe the actual insight: "Citation share of voice dashboard showing Omnia vs three competitors across 50 queries" beats "dashboard screenshot"

- Video with a full transcript and chapter timestamps so the model can index specific moments

Why this matters for AI Overviews specifically: Google is increasingly able to process and reference multimodal content. Pages that pair a clear written answer with an original visual that reinforces it give the model more to work with, and more reason to treat the source as authoritative.

It also creates a competitor gap that is slow to close. Producing original visuals takes effort. Most teams will not bother.

Update strategy: freshness without "fake freshness"

Updating a page's date without changing its substance does not fool Google. It has not for years. But genuine freshness (adding new examples, refreshing data points, and filling in subtopics that have emerged since the original publish date) is a real citation signal, particularly for queries where the answer evolves over time.

A simple update cadence that works:

- Monthly: Revisit your top AI Overview target pages. Add new examples, replace outdated data, and check whether cited competitors have updated their versions.

- Quarterly: Re-audit your citation patterns. Which new domains have entered the citation set for your target queries? Which subtopics are now appearing in AI Overviews that were not three months ago?
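To keep the monthly pass honest, you can flag target pages whose last substantive update is older than your cadence. A minimal sketch, assuming you keep a hand-maintained map of URL to last substantive update date (not the CMS's modified timestamp, which also changes on cosmetic edits); the URLs and dates are illustrative.

```python
from datetime import date


def pages_due_for_review(last_updated, today, max_age_days=30):
    """Return (url, age_in_days) for pages older than `max_age_days`, oldest first."""
    stale = []
    for url, updated in last_updated.items():
        age = (today - updated).days
        if age > max_age_days:
            stale.append((url, age))
    return sorted(stale, key=lambda item: item[1], reverse=True)


pages = {
    "/guides/ai-overviews": date(2026, 1, 1),
    "/glossary/answer-capsule": date(2026, 2, 20),
    "/benchmarks/citation-share": date(2025, 12, 1),
}
print(pages_due_for_review(pages, today=date(2026, 3, 1)))
# [('/benchmarks/citation-share', 90), ('/guides/ai-overviews', 59)]
```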

When you update, make it visible:

- Add a "last updated" date at the top of the page

- Include a one-line change note for substantive updates: "Updated March 2026 to include new citation format examples and revised Step 3 workflow"

Cosmetic updates add noise. Substantive updates add authority. The distinction matters and Google can tell the difference.

How to rank in Google AI Overview by page type (choose the right asset)

Different queries cite different content archetypes. Publishing the right type of page for the right intent is not a minor optimization; it is the difference between being in the citation set and being ignored entirely.

Here are the seven page types that earn citations most reliably, and what each one needs to work:

1. Glossary and definition pages

Best for: "what is," "define," and foundational concept queries.

Must-have modules:

- A one- to two-sentence answer capsule at the top of every definition

- A "related terms" section that builds entity context around the primary term

- Examples that ground the definition in a real use case

2. "Best X for Y" listicles (with methodology)

Best for: comparison and recommendation queries.

Must-have modules:

- A clear methodology section explaining how you evaluated options

- A summary comparison table near the top

- Individual entries with consistent structure: what it is, who it is for, and one concrete limitation

3. How-to guides with steps and screenshots

Best for: process and task-completion queries.

Must-have modules:

- Numbered steps with one clear action per step

- Original screenshots at each stage, not stock imagery

- A "common mistakes" or troubleshooting note at the end

- Estimated time and difficulty level near the top

4. Troubleshooting pages

Best for: "why is X happening" and problem-diagnosis queries.

Must-have modules:

- A direct answer to the problem in the first paragraph

- A structured list of causes ranked by likelihood

- Clear fix instructions for each cause

- A "when to escalate" section for complex or edge-case scenarios

5. Industry benchmarks and original data reports

Best for: "how much," "what percentage," and "industry average" queries.

Must-have modules:

- Clear methodology: sample size, date range, data source, and limitations

- A summary table of headline findings near the top

- Interpretive commentary that explains what the numbers mean, not just what they are

- A "last updated" label that is accurate and visible

6. Product and feature documentation

Best for: technical and integration queries, particularly in B2B SaaS.

Must-have modules:

- Plain-language summaries above every technical block

- Code examples or configuration snippets where relevant

- A changelog or version history section

- Clear links to related documentation pages

7. FAQ pages (standalone or embedded)

Best for: long-tail and conversational queries, including those adjacent to "People also ask" results.

Must-have modules:

- Each question as a proper H3 heading

- Answers of two to four sentences: direct, complete, and self-contained

- FAQPage schema markup so the question-and-answer structure is explicit in the page's code

- Questions sourced from real search behavior, not internal assumptions
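FAQ markup is usually generated from the same question list you publish on the page. A minimal sketch using schema.org's FAQPage, Question, and Answer types; the example question is hypothetical. Note that since 2023 Google has shown FAQ rich results only for a small set of authoritative government and health sites, so treat the markup as a way to make the structure explicit, not as a guaranteed search feature.

```python
import json


def faq_jsonld(pairs):
    """Return a <script> tag with schema.org FAQPage JSON-LD for (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(faq_jsonld([
    ("How long does setup take?", "Most teams finish setup in one afternoon."),
]))
```

Keep the marked-up answers identical to the visible ones; markup that says something the page does not is a consistency problem, not a shortcut.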

How to rank for AI Overviews when you are not a "famous" brand

Here is the reality most guides skip: you do not need a top-tier domain authority score to earn citations in AI Overviews. You need to be the clearest, most trustworthy answer to a specific set of queries that larger brands have not bothered to address properly.

That is not a consolation prize. It is a genuine strategic opening.

TUIO, an insurtech based in Spain, started with 8.55% share of voice across their target query set. Within a few months they had accumulated 1,844 brand mentions and reached 11.79% global share of voice, outperforming Mapfre and Santalucía on specific query clusters. They did it by building citation authority in the long tail first, covering query clusters where larger competitors had thin or zero coverage. That authority compounded, and AI engines began surfacing TUIO on broader queries as the citation signals accumulated.

Focus on winnable long-tail prompts first. Big competitors dominate broad category queries. They rarely dominate specific, constrained, use-case queries, which is exactly where TUIO started. These are the prompts where you can build a citation footprint before anyone notices:

- Specific integrations: "how to connect [your tool] with [popular platform]"

- Constrained use cases: "best [category] for [specific team size or industry]"

- Process-specific queries: "how to [specific workflow] without [common pain point]"

Start there. Build citation authority in the long tail, then work outward toward broader terms as your entity signals strengthen.

Publish what you can uniquely prove. Generic content that synthesizes what everyone else has already said will not earn citations. Content that adds something nobody else can replicate will.

For a startup or scale-up, that means:

- Internal benchmarks from your own product data, even small sample sizes clearly labeled

- Before and after workflow examples from real customer scenarios

- Annotated screenshots of your own process with honest commentary

- "What we tried and what broke" notes that show genuine firsthand experience

None of these require a large team or a large budget. They require honesty and specificity, two things most corporate content avoids entirely.

Build a consistent entity footprint. AI models need to understand who you are before they will cite you reliably. That means your brand name, your area of expertise, and your core claims need to appear consistently across:

- Your own site: homepage, About page, author bios, and product pages

- Trusted third-party sources: press mentions, directory listings, partner pages, and review platforms

- Structured data: Organization schema at minimum, Person schema for key authors

Inconsistency confuses entity recognition. If your brand appears under three slightly different names across different pages and platforms, Google struggles to build a confident picture of who you are. Clean that up before you invest heavily in new content.

How to rank on AI Overviews with "citation-worthy" originality

Originality does not mean publishing a landmark industry study every quarter. It means adding something to the conversation that did not exist before you published. Pick two or three of the following formats and build them into your regular content cadence:

- Internal benchmarks: Share aggregated findings from your own data. Be transparent about sample size, date range, and limitations. A clearly labeled small-sample finding is more credible than a vague claim.

- Before and after experiments: Document a change you made, what you expected, what actually happened, and what you would do differently. Templates work well here.

- Annotated screenshots: Take a real example from your own workflow and add commentary that explains what is happening and why it matters.

- "What we tried and what broke" notes: Honest failure documentation is rare and therefore valuable. It signals firsthand experience in a way polished how-to content cannot.

- Mini case studies: A single customer scenario with specific inputs, actions, and outcomes is more citable than a generic success story.

- Curated data comparisons: Pull findings from multiple primary sources, add your own interpretation, and present them in a single structured table that did not exist before.

For example, at Omnia, we try to incorporate insights from our own datasets to confirm findings. Whichever format you choose, each one adds something observable and specific.

How to improve employer visibility in Google AI Overviews

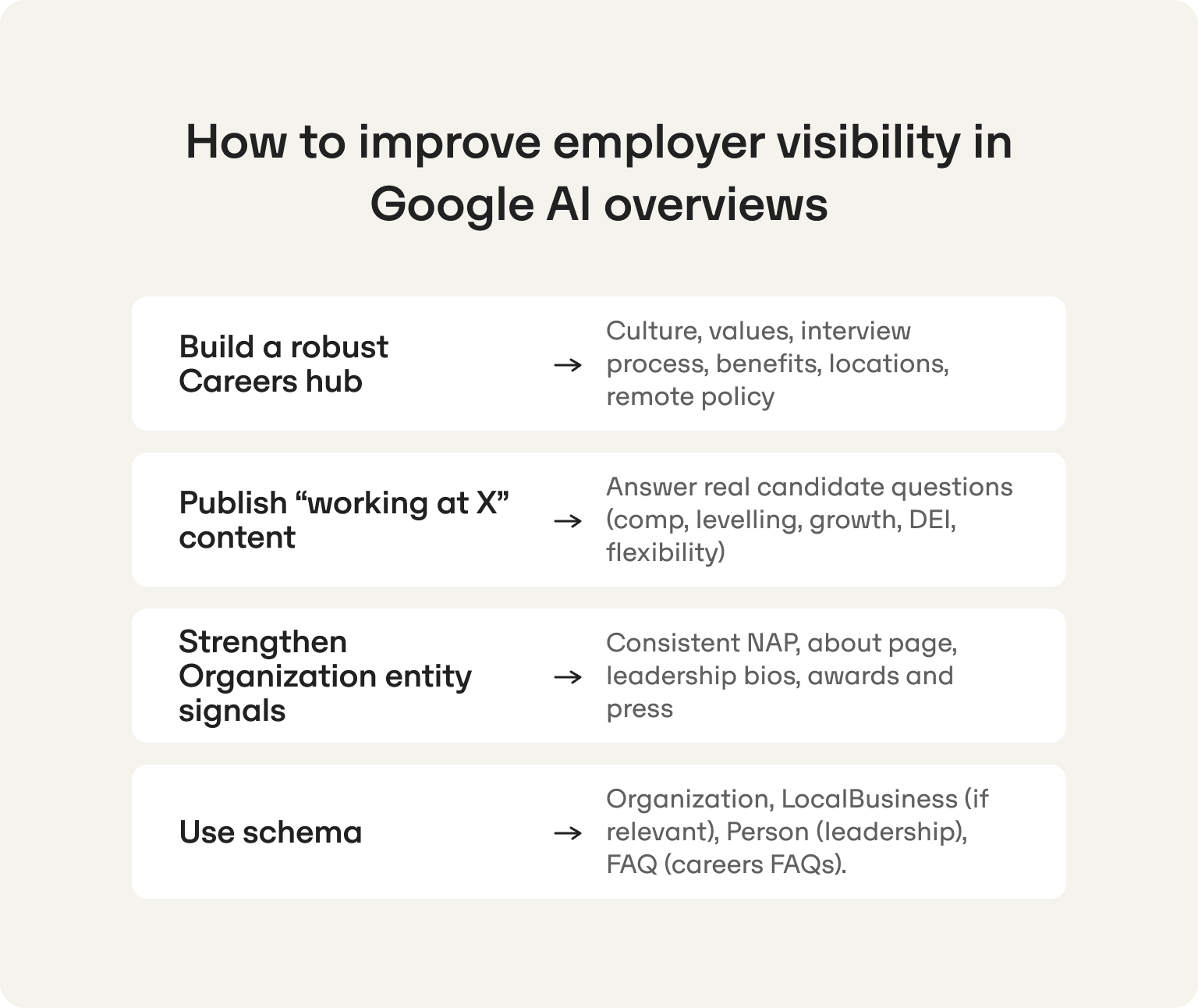


For a founder-led startup or scale-up with a lean marketing team, employer branding rarely makes the priority list. Here is why that thinking is worth revisiting.

When a strong candidate, a potential investor, or a key hire asks an AI engine such as Google, ChatGPT, or Perplexity "what is it like to work at [your company]," the engine will synthesize an answer from whatever it can find. If you have not published anything worth citing, that answer will be built from Glassdoor reviews, a two-year-old LinkedIn post, and whatever your last employee said on the way out. You do not get to respond in real time; the answer has already been formed.

The companies building resilience against this now are doing it across sources AI engines already trust: structured review profiles, employee thought leadership, and genuine press mentions.

You do not need a big budget or a content team to fix that. You need a few well-structured pages and consistent entity signals. Here is what that looks like at a practical, startup-friendly scale.

Start with your About and Careers pages

These are the two pages Google reaches for first when building an employer-brand answer. Most startup About pages are three sentences and a team photo. Most Careers pages are a list of open roles with an ATS link. Neither gives an AI engine anything useful to cite.

A small investment here goes a long way. For each page, ask: if a candidate asked an AI engine the most common question about working here, would this page answer it?

Focus on the questions candidates actually ask:

- What does the team look like and how does it work day to day?

- What is the interview process and how long does it take?

- How does the company approach remote work and flexibility?

- What does growth look like for someone joining at this stage?

You do not need polished corporate copy. You need honest, specific answers that a model can extract and cite confidently.

Publish a "working here" page that does the heavy lifting

One well-structured page that addresses the full candidate journey is more effective than scattering employer-brand content across five different blog posts. Think of it as an answer capsule for your employer brand: direct, structured, and built to be cited.

Cover the following in plain language:

- Culture and values with real examples, not a list of adjectives

- How the team is structured and how decisions get made

- Compensation philosophy and as much transparency as your legal team will allow

- DEI approach with concrete initiatives rather than aspirational statements

- What the first 90 days look like for a new hire

Keep it updated. An accurate page from six months ago is more valuable than a polished page from two years ago.

Build a consistent organization entity footprint

AI models need a clear, consistent picture of your company before they will confidently cite you for employer queries. For a small team, this does not require a PR agency or a brand campaign. It requires consistency across a handful of surfaces:

- Your company name, founding date, location, and description should match exactly across your website, LinkedIn, Crunchbase, and any press mentions

- Leadership bios should be visible, named, and link to credible external profiles

- Any awards, press coverage, or third-party recognition should be referenced on your own site, not just exist on the publication's page
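A quick way to audit this is to copy the same fields from each surface into one place and let a script flag anything that does not match character for character. A minimal sketch; the surfaces, field names, and company details are placeholders, and the comparison is deliberately strict because small variations are exactly what this checklist is trying to catch.

```python
def entity_mismatches(profiles, fields=("name", "founded", "location", "description")):
    """Return {field: {surface: value}} for every field whose value differs across surfaces.

    `profiles` maps a surface (website, LinkedIn, Crunchbase...) to the values you copied
    from it. A field missing on only some surfaces counts as a mismatch worth fixing.
    """
    report = {}
    for field in fields:
        values = {surface: str(profile.get(field, "")).strip() for surface, profile in profiles.items()}
        if len(set(values.values())) > 1:
            report[field] = values
    return report


profiles = {
    "website": {"name": "Acme Analytics", "founded": "2019", "location": "Madrid, Spain"},
    "linkedin": {"name": "Acme Analytics", "founded": "2019", "location": "Madrid"},
    "crunchbase": {"name": "ACME Analytics Inc.", "founded": "2019", "location": "Madrid, Spain"},
}
for field, values in entity_mismatches(profiles).items():
    print(field, values)
```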

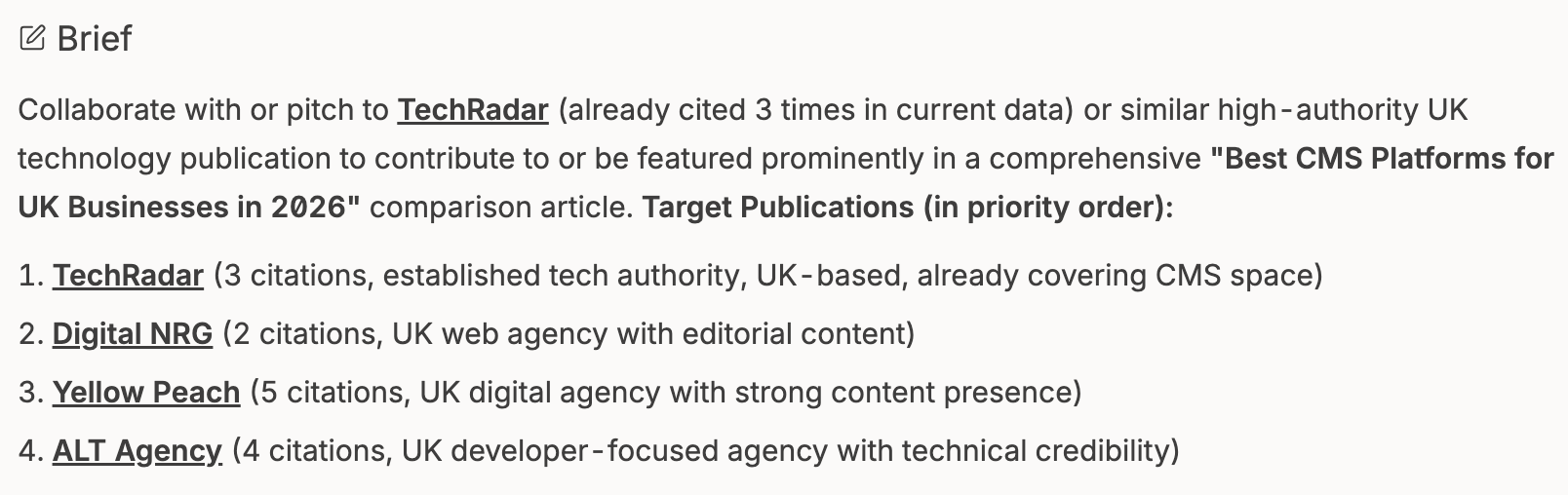

Third-party citations are one of the strongest corroboration signals you can build, but knowing where to start is half the battle. Omnia takes the guesswork out of it by identifying the specific high-authority publications already being cited in your category and surfacing a prioritized outreach list your team can act on immediately.

Rather than cold-pitching publications at random, you are working from real citation data: the sources Google already trusts in your space, ranked by how often they appear in AI answers for your target queries.

Add schema markup without overcomplicating it

Three schema types will cover most employer visibility use cases for a small team:

- Organization schema on your homepage and About page

- Person schema for your founders and key leadership

- FAQ schema on your Careers page for the questions candidates ask most often

This is a one-time technical task that a developer can complete in a few hours. It does not guarantee AI Overview coverage, but it removes ambiguity and makes it significantly easier for Google to summarize your employer story accurately.
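For reference, here is a minimal sketch of Organization markup with founders nested as Person entities, generated in Python so the values can come from one source of truth. The properties used (`name`, `url`, `logo`, `foundingDate`, `sameAs`, `founder`, `jobTitle`) are standard schema.org vocabulary; every value in the example is a placeholder. The output would go inside a `<script type="application/ld+json">` tag on your homepage and About page.

```python
import json


def organization_jsonld(name, url, logo, founding_date, same_as, founders):
    """Build schema.org Organization JSON-LD, with founders nested as Person entities."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,  # use exactly the same name as on LinkedIn, Crunchbase, etc.
        "url": url,
        "logo": logo,
        "foundingDate": founding_date,
        "sameAs": same_as,  # official external profiles for the company
        "founder": [
            {
                "@type": "Person",
                "name": person["name"],
                "jobTitle": person["title"],
                "sameAs": person.get("profiles", []),
            }
            for person in founders
        ],
    }
    return json.dumps(data, indent=2)


print(organization_jsonld(
    name="Acme Analytics",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    founding_date="2019",
    same_as=["https://www.linkedin.com/company/example"],
    founders=[{"name": "Jane Doe", "title": "CEO", "profiles": ["https://www.linkedin.com/in/example"]}],
))
```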

Tooling: how to rank on AI Overview at scale (monitoring to actions)

The manual SOP in this guide will get you started. It will not scale.

Once you are tracking more than 50 queries across multiple markets, manual checks become a real time sink for a lean team. But the answer is not always an enterprise platform. For most startups and scale-ups, the right move is to go as far as possible with a disciplined homegrown approach before spending anything.

To grow and excel in AI search on a homegrown setup, expect to do the following:

- A shared tracking spreadsheet is more powerful than it sounds. Log your seed queries weekly with seven columns: query, country, AIO present, your domain cited, your brand mentioned, competitor domains cited, and date checked. Forty-five minutes a week for four weeks gives you a citation trend picture most paid tools cannot match for your specific query set.

- Competitor citation audits cost nothing. Pick your top three competitors, run their domains against your query list, and look at the specific URLs getting cited. What page types are they? How are they structured? You now have a reverse-engineered content brief for every gap you find.

- Incognito plus VPN approximates localized results well enough to be directionally useful for market-level checks without any additional spend.
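If the spreadsheet is exported as CSV, a few lines of Python can turn it into the weekly numbers worth reporting. This sketch assumes the seven columns above under hypothetical snake_case headers, "yes"/"no" values, and semicolon-separated competitor domains; the sample rows and domains are invented for illustration. Share of voice here uses one simple definition (each domain's share of all citations logged), which is an assumption rather than a standard metric.

```python
import csv
import io
from collections import Counter

# Hypothetical headers for the seven tracking columns.
COLUMNS = ["query", "country", "aio_present", "domain_cited",
           "brand_mentioned", "competitor_domains", "date_checked"]

# Invented sample export; competitor domains are separated by semicolons.
SAMPLE = """query,country,aio_present,domain_cited,brand_mentioned,competitor_domains,date_checked
best crm for startups,US,yes,no,no,rival-a.com;rival-b.com,2024-06-03
crm pricing comparison,US,yes,yes,yes,rival-a.com,2024-06-03
crm for nonprofits,UK,no,no,no,,2024-06-03
"""


def load_log(text):
    """Parse the exported tracking sheet into a list of row dicts."""
    return list(csv.DictReader(io.StringIO(text)))


def _competitors(row):
    return [d.strip() for d in row["competitor_domains"].split(";") if d.strip()]


def citation_rate(rows):
    """Share of logged AI Overviews that cite your domain."""
    with_aio = [r for r in rows if r["aio_present"] == "yes"]
    if not with_aio:
        return 0.0
    return sum(r["domain_cited"] == "yes" for r in with_aio) / len(with_aio)


def share_of_voice(rows, your_domain):
    """Each domain's share of all citations logged across AI Overviews."""
    counts = Counter()
    for r in rows:
        if r["aio_present"] != "yes":
            continue
        if r["domain_cited"] == "yes":
            counts[your_domain] += 1
        counts.update(_competitors(r))
    total = sum(counts.values())
    return {d: n / total for d, n in counts.most_common()} if total else {}


def citation_gaps(rows):
    """Queries with an AI Overview where competitors are cited and you are not."""
    return [(r["query"], r["country"], _competitors(r))
            for r in rows
            if r["aio_present"] == "yes" and r["domain_cited"] == "no"
            and _competitors(r)]


if __name__ == "__main__":
    rows = load_log(SAMPLE)
    print(f"Citation rate: {citation_rate(rows):.0%}")
    for domain, share in share_of_voice(rows, "example.com").items():
        print(f"{domain}: {share:.0%}")
    for query, country, competitors in citation_gaps(rows):
        print(f"Gap: '{query}' ({country}) cites {', '.join(competitors)}")
```

On the sample data this reports a 50% citation rate, a share-of-voice table led by rival-a.com, and one gap query. Each gap row is a ready-made starting point for the competitor audit: open the cited competitor URLs and note their page type and structure.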

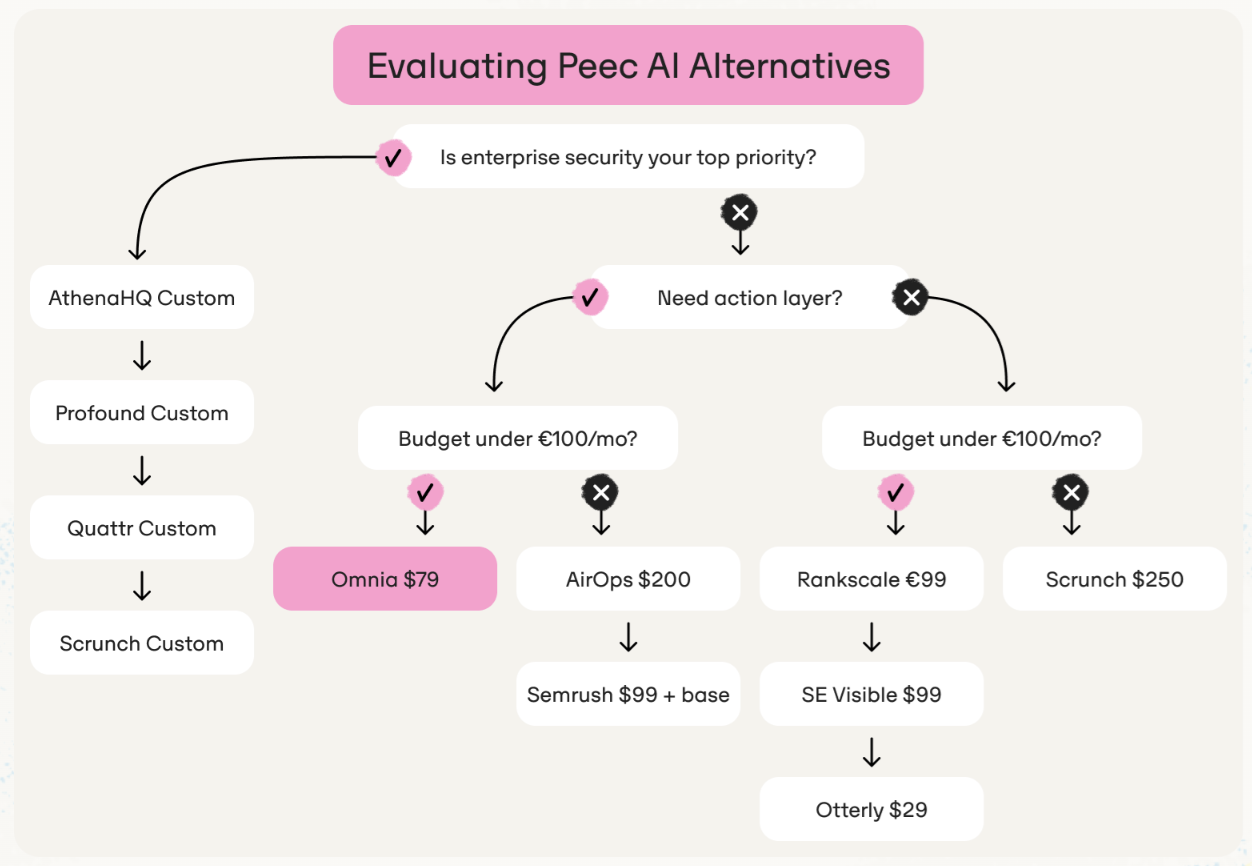

When you outgrow the manual approach, the right tool is not the most feature-rich one. It is the one that skips the dashboard and tells your team exactly what to do next.

Why Omnia is the best fit for teams trying to improve visibility in Google AI Overviews

Most AI visibility tools were built for enterprise teams with the headcount to match. Large query volumes, complex dashboards, and reporting layers that assume someone on your team has the time to sit between the data and the work. For a startup or scale-up with one or two people owning content and SEO, that is the wrong tool for the job.

Omnia was built for the other scenario.

The three questions Omnia's clients ask most often map directly to what the platform does:

"Do we show up today?" Omnia tracks AI Overview presence by country and query set, so you get a localized, accurate picture of where you stand right now, not a global average that obscures the markets you actually care about. Not sure where to start? Run a free AI visibility check and get an instant snapshot of how your brand appears across AI engines today.

"Why are competitors getting recommended instead of us?" Omnia surfaces citation data at URL and domain level, shows which sources Google is pulling from for your target queries, and benchmarks your share of voice against specific competitors. You see exactly who is winning and what their content looks like, without manually checking every query yourself.

"What do we actually do about it?" This is where most tools stop and where Omnia starts. Rather than handing you a report to interpret, Omnia turns citation gaps into concrete actions: content briefs, placement recommendations, and specific fixes your team can execute this week. No analyst required. No dashboard to decode.

The before and after is not abstract. Teams using Omnia move from "we think we might be missing from AI answers" to "here are the seven pages we need to publish and the three existing pages we need to restructure," in days rather than months.

When you are ready to turn those findings into action, sign up for an Omnia account and put the whole process on autopilot.

FAQs

How to rank in AI Overviews?

Start by identifying queries that already trigger AI Overviews in your category, then make sure your pages are fully crawlable and indexed. Publish extractable answer capsules at the top of every key section, strengthen trust signals with firsthand evidence and named authors, and track citation presence weekly rather than waiting for traffic shifts to tell you something has changed.
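The "crawlable and indexed" step can be spot-checked with Python's standard library before reaching for a paid crawler. This sketch tests whether a robots.txt file lets Googlebot fetch a URL and whether a page carries a `noindex` robots meta tag. It is a first pass with known limits: `urllib.robotparser` does not implement every Google extension (wildcard paths, for example), the sketch ignores the `X-Robots-Tag` HTTP header, and the sample robots.txt and URLs are hypothetical. Search Console's URL Inspection tool remains the authoritative answer on whether a page is indexed.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt, url):
    """Return True if these robots.txt rules let Googlebot fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


class RobotsMetaFinder(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        if (attrs.get("name") or "").lower() in ("robots", "googlebot"):
            content = attrs.get("content") or ""
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex(html):
    """Return True if the page asks search engines not to index it."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    finder.close()
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in finder.directives or "none" in finder.directives


# Hypothetical robots.txt with a general group and a Googlebot-specific group.
ROBOTS_TXT = """User-agent: *
Disallow: /admin/

User-agent: Googlebot
Disallow: /drafts/
"""

if __name__ == "__main__":
    for path in ("/pricing", "/drafts/launch-post", "/admin/"):
        url = "https://www.example.com" + path
        print(path, "allowed" if googlebot_allowed(ROBOTS_TXT, url) else "blocked")
    print(has_noindex('<meta name="robots" content="noindex, follow">'))
```

Notice that `/admin/` comes back as allowed. Googlebot follows only the most specific group that matches it, so once a `User-agent: Googlebot` group exists, the `User-agent: *` rules no longer apply to it. That behavior catches many teams out and is worth checking in your own robots.txt.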

How does Google AI Overview work?

Google crawls and indexes content across the web. When a query triggers an AI Overview, Google's model synthesizes information from multiple sources it trusts and constructs what it judges to be the most complete answer. It then cites the sources it drew from, which means inclusion depends on whether your content is accessible, extractable, and trusted enough to be used as a building block in that synthesis. For your content strategy, this means structure and trust signals matter as much as keyword relevance.

How to show up in AI Overviews even if my pages already rank?

Ranking and being cited are not the same thing, and conflating the two is one of the most common reasons teams stall. If your pages rank but are not cited, start by adding tight answer capsules at the top of each section, clarifying your entity signals, and adding firsthand evidence that competitors cannot replicate. Also check that your structured data matches your visible content exactly, as discrepancies between the two are a quiet citation killer.
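Checking structured data against visible content lends itself to a small script. This sketch, using only Python's standard library, pulls FAQPage JSON-LD out of a page and lists any question or answer whose text does not appear in the visible copy. The matching is deliberately crude (a case- and whitespace-insensitive substring search), it ignores other schema types and `@graph` wrappers, and the sample page is hypothetical, so treat flagged items as leads to review by hand rather than definitive errors.

```python
import json
import re
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Separates visible text from JSON-LD blocks in one HTML page."""

    def __init__(self):
        super().__init__()
        self.visible = []
        self.json_ld = []
        self._raw_tag = None  # "script" or "style" while inside one
        self._is_json_ld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._raw_tag = tag
            self._is_json_ld = dict(attrs).get("type") == "application/ld+json"
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == self._raw_tag:
            if self._is_json_ld:
                data = json.loads("".join(self._buffer))
                # A script tag may hold one object or a list of objects.
                self.json_ld.extend(data if isinstance(data, list) else [data])
            self._raw_tag = None
            self._is_json_ld = False

    def handle_data(self, data):
        if self._raw_tag:
            self._buffer.append(data)
        else:
            self.visible.append(data)


def _normalize(text):
    return re.sub(r"\s+", " ", text).strip().lower()


def faq_mismatches(html):
    """List FAQPage questions and answers that are missing from the visible text."""
    parser = PageParser()
    parser.feed(html)
    parser.close()
    # Text nodes are joined without separators so inline tags such as <b>
    # do not insert stray spaces before punctuation.
    visible = _normalize("".join(parser.visible))
    missing = []
    for block in parser.json_ld:
        if block.get("@type") != "FAQPage":
            continue
        for item in block.get("mainEntity", []):
            question = item.get("name", "")
            answer = item.get("acceptedAnswer", {}).get("text", "")
            for text in (question, answer):
                if text and _normalize(text) not in visible:
                    missing.append(text)
    return missing


SAMPLE_PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": [
  {"@type": "Question", "name": "Is the role remote?",
   "acceptedAnswer": {"@type": "Answer", "text": "Most roles are remote."}},
  {"@type": "Question", "name": "Do you sponsor visas?",
   "acceptedAnswer": {"@type": "Answer", "text": "Yes, for senior roles."}}
]}
</script></head><body>
<h2>Is the role remote?</h2><p>Most roles are <b>remote</b>.</p>
</body></html>"""

if __name__ == "__main__":
    for text in faq_mismatches(SAMPLE_PAGE):
        print("In schema but not on page:", text)
```

On the sample page it flags the visa question and its answer, which exist only in the markup. The fix is either to add that Q&A to the visible page or to drop it from the schema.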

How to get mentioned in Google AI Overviews (and not just cited)?

Brand mentions inside AI Overview answers tend to follow repeated citation across related queries, combined with strong and consistent entity signals. Make sure your brand name is used consistently across every page on your site, that your About page clearly establishes who you are and what you do, and that named author bios link to credible external profiles. Organization schema and corroboration from trusted third-party sources round out the picture and give Google the confidence to reference your brand by name rather than just pulling from your URLs.

What are the best practices for improving visibility in Google AI Overviews for B2B SaaS?

For B2B SaaS specifically, focus on the page types buyers use when making decisions:

- Integration pages that answer specific "does it work with X" queries

- Use-case pages built around the constraints and workflows of your target customer

- Comparison pages with a clear, transparent methodology

- Product documentation written in plain language with summary capsules above every technical block

- Pricing pages with enough transparency to be citable

- First-party proof: real workflows, annotated screenshots, and specific outcomes rather than generic case study language

Generic category content will not earn citations in a competitive SaaS space. The more specific and provable your content is, the more citable it becomes.

How to rank on AI Overview vs how to rank on AI Overviews: is there a difference?

No meaningful difference in intent. Both refer to the same goal of earning citation presence inside Google's AI-generated answers. What matters more than the phrasing is recognizing that results vary significantly by query type, country, and time of check. Track a consistent query set over weeks rather than obsessing over a single SERP snapshot, which may look entirely different the next day.

Google AI Overviews impact on search: should I still care about clicks?

Yes, but the way you measure value needs to shift. AI Overviews reduce clicks for some queries while increasing brand awareness and assisted conversions for others, so abandoning blue link optimization entirely would be a mistake. Run both in parallel: defend your organic rankings while building citation presence, and use branded search volume and direct traffic as proxies for the assisted awareness AI visibility creates. Set stakeholder expectations early so that visibility gains are recognized before they show up in Google Analytics.

How to improve employer visibility in Google AI Overviews for hiring queries?

Start with your About and Careers pages, as these are the two surfaces Google reaches for first when building an employer brand answer. Make sure they address the questions candidates actually ask AI engines: culture, interview process, flexibility, growth, and compensation philosophy, in plain and structured language. Add Organization schema, Person schema for key leadership, and FAQ schema for common candidate questions. Then build consistency across third-party platforms like LinkedIn, Glassdoor, and Crunchbase so Google has corroborating signals to draw from when constructing its answer.