AI Mode tracking means monitoring whether your brand shows up in Google's AI-generated answers — and understanding why that changes over time. You can't get that picture from Google Search Console alone. GSC is useful context, but it doesn't show you which citations appeared, which competitor replaced you, or when you disappeared from an answer. What actually works is a three-layer stack: GSC for query and page trends, a prompt-based monitoring tool to capture AI Mode answers and citations, and a simple reporting layer to review changes weekly. Good tracking is country-level, citation-level, and trend-aware. Omnia is built to do exactly that, and to turn what it finds into actions your team can actually run.

You're probably already seeing organic traffic flatten or dip. You suspect AI Mode has something to do with it, but you can't prove it yet. That's the gap this guide is designed to close. AI Mode tracking is still a new discipline, and most teams are either ignoring it completely or throwing GSC filters at a problem GSC wasn't built to solve. This guide gives you a practical workflow you can implement this week, a clear picture of what GSC can and can't do for AI Mode, and a look at how Omnia goes beyond monitoring to help you act on what you find.

What "AI Mode tracking" actually means (and what it doesn't)

AI Mode tracking is the practice of monitoring how often your brand, pages, and content appear inside Google's AI-generated answers, across a defined set of prompts and markets, over time. The reason it matters: AI Mode doesn't return a stable ranked list of URLs. It generates an answer, cites sources, and those sources shift, sometimes between one day and the next, with no alert and no trace in your existing analytics.

Two questions teams want answered most often:

- "Do we show up for this prompt cluster in the UK vs. the US?"

- "Which of our URLs are getting cited, and did that change this week?"

AI Mode tracking is what makes those questions answerable on a consistent basis, not just when someone remembers to check.

How AI Mode differs from organic ranking (why "rankings" are slippery here)

In classic SEO, a rank is a number. Your page is position 3, or it isn't. Google AI Mode doesn't work that way. Instead of serving a deterministic list of URLs, it generates a synthesized answer that pulls from multiple cited sources, sometimes with links, sometimes without. Your "position" in AI Mode is closer to citation prominence than a blue-link rank. You can be the first cited source, one of six, or completely absent, and all three can be true for slightly different phrasings of the same question.

That instability is the thing most brands and the marketing teams behind them aren't prepared for. The same URL can be cited prominently for one prompt and missing entirely from a semantically similar variant. Citation order shifts between answer instances, between countries, and as Google's underlying models update. A competitor can go from citation four to citation one on a high-intent prompt without any SERP movement your existing tools would catch. Understanding the difference between prompts and search queries is a good starting point for getting your head around why this happens.

Country controls matter more than most teams expect. AI Mode localizes answers, so a brand with strong citation presence in US results can be largely invisible in the UK for the same prompt cluster. If your tracking doesn't separate geography, you're blending signals that shouldn't be blended.

AI Mode vs AI Overviews vs "regular" SERP features (quick clarity, no rabbit holes)

These three surfaces get conflated constantly, and mixing them up leads to wasted effort and misleading reports. Here's a fast way to tell them apart:

If you see a full conversational interface where the user types a question and gets a multi-paragraph generated response with follow-up suggestions, you're in AI Mode, Google's dedicated AI search experience.

If you see a generated summary box sitting above the standard blue links on a normal Google results page, you're looking at an AI Overview, which appears inside standard search.

If you see standard blue links with no AI-generated content, you're in the regular SERP, where classic rank tracking applies.

Google AI Mode tracking: what you can measure today (and where teams get stuck)

The honest answer is that AI Mode measurement is still catching up to the reality of how teams need to use it. Before you build your tracking stack, it's worth being clear about what's actually measurable, what's partially measurable, and what isn't, so you don't spend hours trying to force tools to report something they simply don't expose.

What you can measure:

- Whether your brand or domain appears in a captured AI Mode answer for a given prompt

- Which URLs are cited, and from which domains

- Whether competitors appear in answers where you don't

- How those patterns change over time, if you're capturing answers consistently

What's partially measurable:

- Traffic impact from AI Mode citations (directional at best, not clean attribution)

- Prompt-level visibility trends (only as good as your prompt set)

What you can't reliably measure today:

- AI Mode-specific clicks inside GSC (not currently broken out by surface)

- The full universe of prompts where your brand may or may not appear

Common failure modes teams run into:

- "We added annotations in GA4 and still can't isolate AI Mode clicks" — GA4 doesn't receive surface-level data from Google at this granularity

- "We can't tell which AI answer cited us" — GSC Performance data doesn't expose citation-level detail from AI Mode answers

- "We don't know when we disappeared" — without consistent answer capture, disappearances are invisible until someone manually checks

How to track AI Mode in GSC (and the hard limitations)

GSC is a useful supporting layer for AI Mode tracking, but it has real limits you need to understand before investing too much time in it. Here's what to do inside GSC, and what not to expect from it.

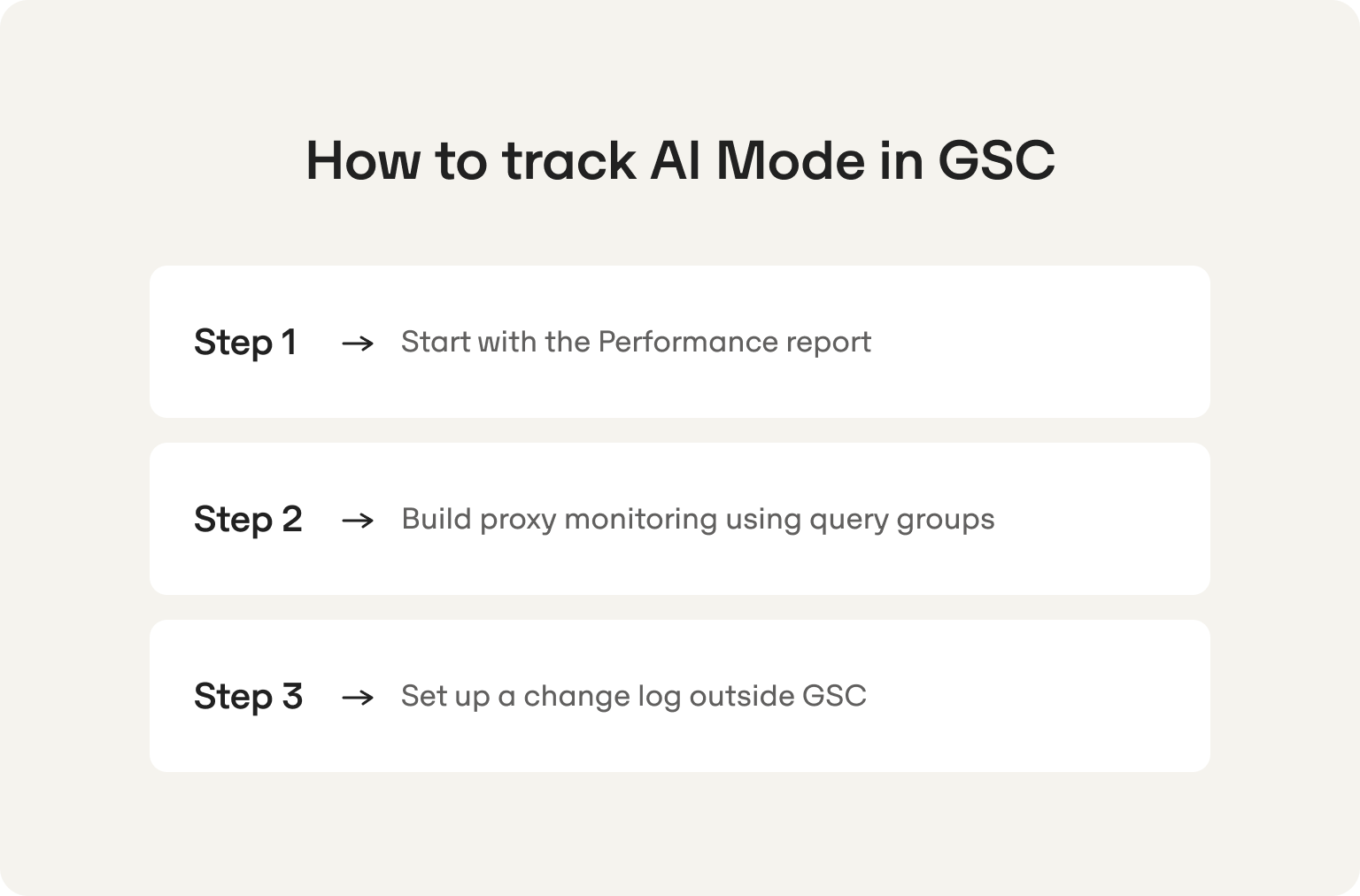

Step 1: Start with the Performance report

Go to Performance, then Search results. Compare two date ranges (for example, last 28 days vs. the prior 28 days) and look for query clusters where impressions or clicks are declining. Pages that are losing clicks despite holding impressions are worth flagging. That pattern can indicate AI Mode is intercepting demand before users click through.
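The comparison in Step 1 can also be run on exported data. The following is a minimal sketch, assuming you have page-level clicks and impressions for two periods; the `PageStats` type, the 20% click-drop threshold, and the 10% impression tolerance are illustrative assumptions, not GSC features or recommended values.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    page: str
    clicks: int
    impressions: int


def flag_click_loss(current, previous, click_drop=0.20, impression_tolerance=0.10):
    """Return pages whose clicks fell by at least `click_drop` (as a fraction)
    while impressions fell by no more than `impression_tolerance`."""
    prev_by_page = {p.page: p for p in previous}
    flagged = []
    for cur in current:
        prev = prev_by_page.get(cur.page)
        # Skip pages with no usable baseline to avoid dividing by zero.
        if prev is None or prev.clicks == 0 or prev.impressions == 0:
            continue
        click_change = (cur.clicks - prev.clicks) / prev.clicks
        impression_change = (cur.impressions - prev.impressions) / prev.impressions
        if click_change <= -click_drop and impression_change >= -impression_tolerance:
            flagged.append(cur.page)
    return flagged


# Hypothetical numbers for two 28-day periods.
previous = [PageStats("/pricing", 400, 10000), PageStats("/blog/guide", 300, 8000)]
current = [PageStats("/pricing", 390, 10100), PageStats("/blog/guide", 210, 7900)]

print(flag_click_loss(current, previous))
```

A flagged page is a prompt to investigate, not proof that AI Mode caused the drop.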

Step 2: Build proxy monitoring using query groups

GSC doesn't have an AI Mode filter. What you can do is segment branded queries separately from non-branded category queries and review trends in each group consistently. A drop in the non-branded share of impressions for informational queries, combined with flat or growing search volume externally, is a signal worth investigating. Define one query filter per main cluster (a regex filter works well) and bookmark each filtered report, so you're reviewing the same segments every week, not starting from scratch each time.
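If you export query rows instead of reviewing them in the interface, the brand vs. non-brand split can be scripted. This is a rough sketch, assuming rows of (query, clicks, impressions); the brand terms in `BRAND_PATTERN` are hypothetical placeholders you would replace with your own names and common misspellings.

```python
import re
from collections import defaultdict

# Hypothetical brand terms; replace with your own.
BRAND_PATTERN = re.compile(r"\b(examplebrand|example brand)\b", re.IGNORECASE)


def segment_queries(rows):
    """rows: iterable of (query, clicks, impressions).
    Returns click and impression totals for branded and non-branded queries."""
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0})
    for query, clicks, impressions in rows:
        segment = "branded" if BRAND_PATTERN.search(query) else "non_branded"
        totals[segment]["clicks"] += clicks
        totals[segment]["impressions"] += impressions
    return dict(totals)


rows = [
    ("examplebrand pricing", 120, 900),
    ("Example Brand login", 80, 400),
    ("best ai visibility tools", 15, 2500),
    ("what is generative engine optimization", 5, 1800),
]

print(segment_queries(rows))
```

Running the same function on each weekly export keeps the segment definitions stable, which is the point of Step 2.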

Step 3: Set up a change log outside GSC

GSC doesn't annotate for model updates, AI Mode rollout expansions, or algorithm changes. Keep a simple shared changelog, a Google Sheet works fine, that records: dates when you made site changes, dates of known Google updates, and dates when your prompt monitoring tool flags a visibility shift. Correlating those three data streams is how you start to distinguish cause from coincidence.
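Correlating the three streams can be as simple as asking what happened shortly before a flagged shift. The sketch below assumes a changelog kept as a list of dated entries; the stream names, dates, notes, and the 7-day default window are all hypothetical.

```python
from datetime import date, timedelta

# Hypothetical changelog entries covering the three streams described above.
changelog = [
    {"date": date(2025, 3, 3), "stream": "site_change", "note": "Rewrote comparison page"},
    {"date": date(2025, 3, 12), "stream": "google_update", "note": "Publicly announced update"},
    {"date": date(2025, 3, 14), "stream": "visibility_shift", "note": "Lost citation on pricing cluster"},
]


def events_before(shift_date, entries, window_days=7):
    """Return site changes and Google updates logged in the window before a shift."""
    start = shift_date - timedelta(days=window_days)
    return [
        e for e in entries
        if e["stream"] != "visibility_shift" and start <= e["date"] < shift_date
    ]


for event in events_before(date(2025, 3, 14), changelog):
    print(event["date"], event["stream"], event["note"])
```

An event inside the window is a candidate explanation only; it still needs a human to judge whether it is cause or coincidence.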

One thing worth stating clearly: GSC does not currently provide a dedicated reporting dimension for AI Mode appearances or citations. It is not possible to cleanly isolate AI Mode traffic, citation appearances, or inclusion and exclusion events using GSC alone. Use it for query and page trend context, and pair it with a purpose-built AI search monitoring tool to get the full picture.

How to track AI Mode in Google Search Console for brand vs. non-brand queries

When GSC is your supporting layer, segmenting by query type is the most useful thing you can do with it. Different query types surface different risks, and treating them the same means missing signals that matter.

Branded queries are your early warning system. A drop in the branded share of impressions without a paid or organic explanation can mean AI Mode is generating answers about your brand that absorb the click before it happens. Non-brand category queries are your demand capture signal: the prompts where buyers are forming preferences, and where AI Mode most actively shapes who gets recommended. For the broader monitoring picture beyond GSC, see the guide on how to monitor AI search visibility.

How to track AI Mode (properly): a repeatable workflow serious teams use

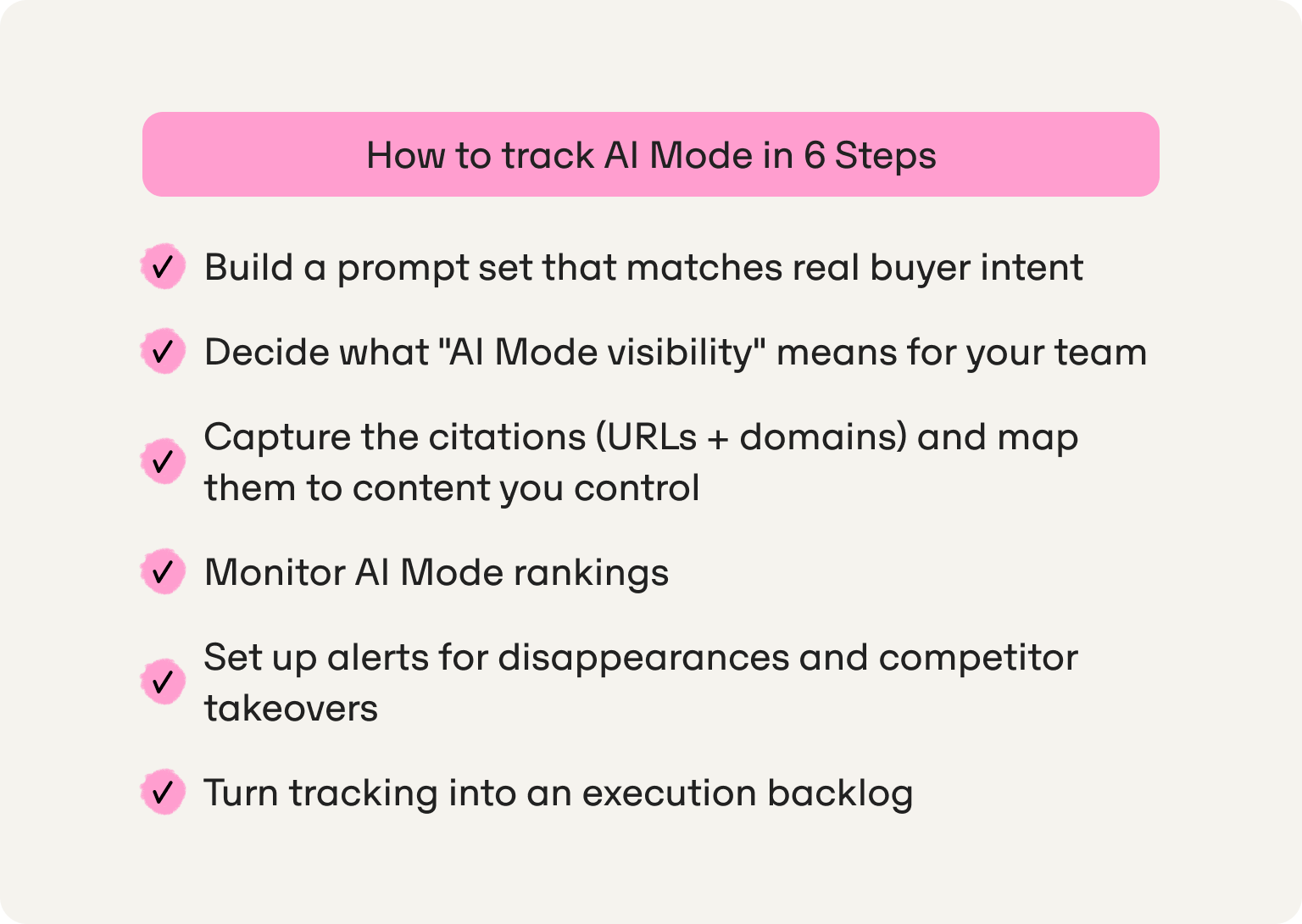

Manual spot-checking doesn't scale. What follows is a six-step workflow that gives lean marketing teams consistent, actionable AI Mode intelligence without requiring a dedicated analyst or a weekly panic session.

Step 1 — Build a prompt set that matches real buyer intent (not vanity prompts)

Your prompt set is the foundation of everything else in this workflow. If it's built around prompts nobody actually types, your tracking data tells you nothing useful. Start by organizing prompts into clusters that map to funnel stages:

- Awareness: "what is [category]," "how does [problem] happen," "why is [pain point] getting worse"

- Consideration: "best [tool type] for [use case]," "how to choose [solution]," "[solution] for [company size]"

- Comparison: "[YourBrand] vs [Competitor]," "alternatives to [Competitor]," "[category] tools compared"

- Integration/technical: "how to connect [tool] with [platform]," "[tool] API," "[tool] for [specific workflow]"

- Pricing/conversion: "[tool] pricing," "how much does [solution] cost," "is [tool] worth it"

For each cluster, create country and language variants where relevant. A prompt that generates strong AI Mode answers in US English can behave completely differently in UK English, and tracking them together obscures both signals.
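One way to keep clusters and market variants separate is to generate the prompt set from templates. This is an illustrative sketch only: the templates, fill values, and market list are made-up placeholders, and real UK and US variants will often need different wording, not just a different country code.

```python
from itertools import product

# Hypothetical templates per funnel cluster.
CLUSTERS = {
    "consideration": ["best {category} tools for startups"],
    "comparison": ["alternatives to {competitor}"],
    "pricing": ["{brand} pricing"],
}
# Hypothetical markets as (country, language) pairs.
MARKETS = [("US", "en-US"), ("GB", "en-GB")]
FILL = {"category": "AI visibility", "competitor": "ExampleCompetitor", "brand": "ExampleBrand"}


def build_prompt_set(clusters, markets, fill):
    """Return one row per template per market, tagged with cluster and market."""
    rows = []
    for (cluster, templates), (country, language) in product(clusters.items(), markets):
        for template in templates:
            rows.append({
                "cluster": cluster,
                "country": country,
                "language": language,
                "prompt": template.format(**fill),
            })
    return rows


prompt_set = build_prompt_set(CLUSTERS, MARKETS, FILL)
for row in prompt_set:
    print(row["cluster"], row["country"], row["prompt"])
```

Because every row carries its own cluster and country, later reporting can group by either without blending markets.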

A reasonable starting volume is 50 to 150 prompts for a focused ICP. Weekly captures are the right baseline cadence for most lean teams. Daily is useful for high-volatility clusters or during active competitive campaigns, but weekly is manageable and consistent. The goal is a rhythm you'll actually stick to, not a comprehensive sweep you run once and abandon. Understanding the difference between prompts and search queries is worth the five minutes before you start building your list.

Here are 10 example prompts across clusters to give you a starting shape:

Awareness:

1. "Why is organic search traffic declining for B2B SaaS?"

2. "How do AI search engines decide what to recommend?"

3. "What is generative engine optimization?"

Consideration:

4. "Best AI visibility tools for startups"

5. "How to improve brand visibility in ChatGPT and Google AI Mode"

6. "AI search monitoring tools for small marketing teams"

Comparison:

7. "Best alternatives to [well-known SEO platform] for AI search"

8. "Which AI visibility tool is best for a two-person marketing team?"

Integration/technical:

9. "How to connect AI visibility data with Google Search Console"

10. "AI search monitoring tools with API access"

Step 2 — Decide what "AI Mode visibility" means for your team (definitions you'll track)

Before you start capturing data, align your team on what you're actually measuring. These definitions are worth copying into an internal tracking doc so everyone uses the same language and nobody argues about whether a number is good or bad without agreeing on what it measures first.

Visibility rate: The percentage of your tracked prompts where your brand appears in the AI Mode answer, either as a named mention or a cited source. This is your headline metric for AI Mode visibility.

Linked citation rate: The percentage of tracked prompts where your domain is cited with a clickable link. A mention without a citation is a weaker signal and doesn't drive traffic.

Citation prominence: Where your citation appears relative to others in the answer. Being the first cited source carries more weight than being buried fifth in a list.

Share-of-voice: Your visibility rate compared to named competitors across the same prompt set. If you appear in 40% of tracked prompts and your main competitor appears in 65%, that gap is your strategic priority.

Volatility / change rate: How often AI Mode answers materially change week-over-week for the same prompt. High volatility means the answer space is contested and your tracking cadence probably needs to tighten.
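The definitions above translate fairly directly into arithmetic. The following is a sketch under stated assumptions: the `Capture` shape, the brand and domain names, and the choice to treat an answer as "materially changed" when its set of cited domains differs are all illustrative, not a standard. Citation prominence is left out here because it depends on citation order.

```python
from dataclasses import dataclass


@dataclass
class Capture:
    prompt: str
    mentioned_brands: set  # brand names found in the answer text
    cited_domains: list    # domains of linked citations, in order of appearance


def visibility_rate(captures, brand, domain):
    """Percent of captures where the brand is mentioned or its domain is cited."""
    if not captures:
        return 0.0
    hits = sum(1 for c in captures if brand in c.mentioned_brands or domain in c.cited_domains)
    return 100 * hits / len(captures)


def linked_citation_rate(captures, domain):
    """Percent of captures where the domain appears as a linked citation."""
    if not captures:
        return 0.0
    return 100 * sum(1 for c in captures if domain in c.cited_domains) / len(captures)


def share_of_voice(captures, brands):
    """brands: {brand_name: domain}. Returns each brand's visibility rate."""
    return {name: visibility_rate(captures, name, dom) for name, dom in brands.items()}


def volatility(last_week, this_week):
    """Percent of prompts captured in both weeks whose set of cited domains changed."""
    prev = {c.prompt: set(c.cited_domains) for c in last_week}
    shared = [c for c in this_week if c.prompt in prev]
    if not shared:
        return 0.0
    changed = sum(1 for c in shared if set(c.cited_domains) != prev[c.prompt])
    return 100 * changed / len(shared)


# Hypothetical captures for four prompts.
this_week = [
    Capture("best ai visibility tools", {"ExampleBrand", "RivalCo"}, ["rivalco.example", "examplebrand.example"]),
    Capture("ai search monitoring tools", {"RivalCo"}, ["rivalco.example"]),
    Capture("what is generative engine optimization", {"ExampleBrand"}, ["wiki.example"]),
    Capture("alternatives to rivalco", {"RivalCo"}, ["reviews.example"]),
]
last_week = [
    Capture("best ai visibility tools", {"ExampleBrand"}, ["examplebrand.example", "rivalco.example"]),
    Capture("ai search monitoring tools", {"ExampleBrand"}, ["examplebrand.example"]),
    Capture("what is generative engine optimization", {"ExampleBrand"}, ["wiki.example"]),
    Capture("alternatives to rivalco", {"RivalCo"}, ["reviews.example"]),
]

brands = {"ExampleBrand": "examplebrand.example", "RivalCo": "rivalco.example"}
print(visibility_rate(this_week, "ExampleBrand", "examplebrand.example"))
print(linked_citation_rate(this_week, "examplebrand.example"))
print(share_of_voice(this_week, brands))
print(volatility(last_week, this_week))
```

Whatever definitions you settle on, compute them the same way every week; a changed formula looks exactly like a changed market.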

A deeper grounding in how AI citations actually work, and what influences them, is worth having before you start interpreting your linked citation rate numbers.

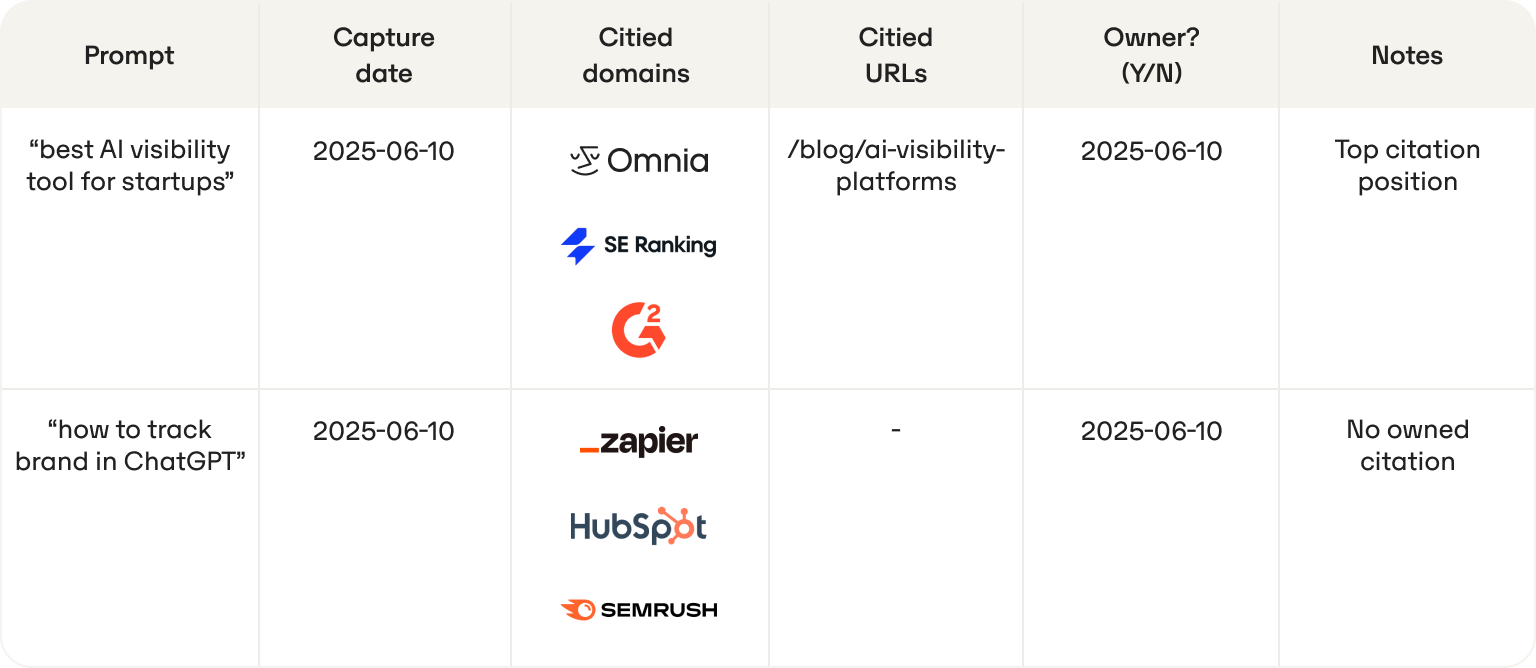

Step 3 — Capture the citations (URLs + domains) and map them to content you control

Citations are the ranking layer in AI Mode. Knowing that you appear in an answer is useful. Knowing exactly which URL was cited, and from which domain, tells you what's working and what to replicate or fix. Build a simple citation map that records, for each tracked prompt, the cited URLs, their domains, and whether you control them, and update it weekly.

When a URL you own drops out of citations, that's a content issue to investigate. When a third-party domain consistently appears in citations you don't control, that's a distribution signal. It tells you where AI Mode is looking for credible sources in your category, and where you need a presence.
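A citation map can start as a small script over your captured answers. This sketch assumes each capture is a prompt plus the list of URLs cited in its answer; `OWNED_DOMAINS` and all URLs are hypothetical examples.

```python
from urllib.parse import urlparse

OWNED_DOMAINS = {"examplebrand.example"}  # hypothetical: domains you control


def domain_of(url):
    """Lowercased host of a URL, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def build_citation_map(captures):
    """captures: iterable of (prompt, [cited_url, ...]).
    Returns {domain: {"owned": bool, "urls": {url: [prompt, ...]}}}."""
    citation_map = {}
    for prompt, urls in captures:
        for url in urls:
            domain = domain_of(url)
            entry = citation_map.setdefault(
                domain, {"owned": domain in OWNED_DOMAINS, "urls": {}}
            )
            entry["urls"].setdefault(url, []).append(prompt)
    return citation_map


captures = [
    ("best ai visibility tools",
     ["https://www.examplebrand.example/guide", "https://reviews.example/top-tools"]),
    ("ai search monitoring tools",
     ["https://reviews.example/top-tools"]),
]

citation_map = build_citation_map(captures)
third_party = sorted(d for d, e in citation_map.items() if not e["owned"])
print(third_party)
```

Comparing this week's map with last week's shows which owned URLs dropped out and which third-party domains keep appearing.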

At the domain level, the fix is usually about whether the domains where you publish or get mentioned carry the right authority signals for AI engines. At the URL level, the fix is more structural: does the cited page actually answer the prompt directly, with clear facts and a format AI Mode can extract from? That's what AI-ready content design is built around, and it's often the faster lever.

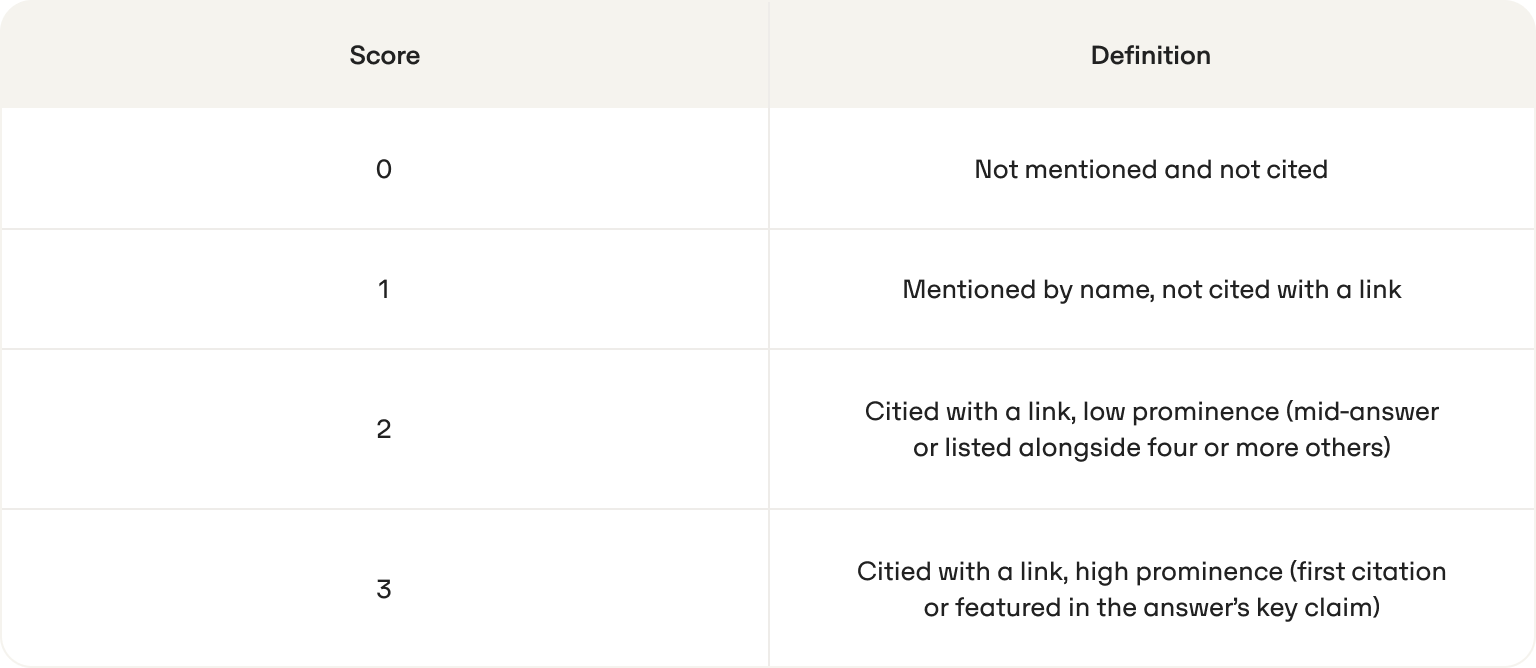

Step 4 — Monitor AI Mode rankings (what "ranking" means in AI Mode)

There's no position one in AI Mode the way there is in organic search. Learning how to monitor AI Mode rankings means building a consistent proxy system rather than waiting for a single number that doesn't exist.

What teams can track instead of a numeric rank:

- Citation order: Are you the first cited source, the third, or the last?

- Inclusion vs. exclusion: Do you appear at all in this prompt's answer?

- Competitor outranking via citations: Is a competitor cited where you aren't?

- Prominence shift: Did you move from a top citation to a buried one week-over-week?

A simple rubric makes this trackable without overcomplicating it:

Track this score by prompt and by week. A sustained drop from 3 to 1 on a high-intent prompt cluster is a higher-priority signal than a one-week fluctuation on a peripheral awareness prompt. For a broader look at the tools that operationalize this kind of tracking, the best AI Mode rank tracker comparison is a useful reference.
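To make "sustained drop" concrete, here is one possible scoring sketch. The 0 to 3 scale below is an assumption made for illustration (3 = first cited source, 2 = cited but not first, 1 = mentioned without a citation, 0 = absent), as is the two-week rule for "sustained"; adapt both to whatever rubric your team agrees on.

```python
def prominence_score(brand_mentioned, citation_position):
    """citation_position: 1-based position of your first citation, or None if not cited.
    The scale is an illustrative assumption, not an official metric."""
    if citation_position == 1:
        return 3
    if citation_position is not None:
        return 2
    if brand_mentioned:
        return 1
    return 0


def sustained_drop(weekly_scores, min_weeks=2):
    """True if each of the last `min_weeks` scores is below the score just before them."""
    if len(weekly_scores) <= min_weeks:
        return False
    baseline = weekly_scores[-min_weeks - 1]
    return all(score < baseline for score in weekly_scores[-min_weeks:])


# Hypothetical weekly scores for one prompt, oldest first.
print(sustained_drop([3, 3, 1, 1]))  # two consecutive weeks below the earlier score
print(sustained_drop([3, 3, 3, 1]))  # a single low week is not treated as sustained
```

Separating the score from the "sustained" rule lets you tighten or loosen the alerting without re-scoring past captures.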

Step 5 — Set up alerts for disappearances and competitor takeovers

Reactive monitoring means checking visibility when someone thinks to ask. That's not a system, it's luck. The teams that respond fastest to AI Mode shifts have alert conditions defined before a problem happens.

Alert conditions worth setting up:

- Visibility rate drops meaningfully across a prompt cluster in a single week. One prompt fluctuating is normal. A cluster dropping together is a signal.

- A competitor replaces you as a primary cited source on a prompt where you previously held a top citation position.

- A new third-party domain becomes dominant across a cluster. This signals AI Mode has found a new trusted source in your category that you're not part of yet.

- Your brand is described inaccurately in a generated answer. This is a reputation risk that needs both a content response and likely a structural fix.
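The first two alert conditions can be expressed as simple week-over-week checks. This is a sketch, assuming you already have per-cluster visibility rates and ordered citation lists for each week; the 15-point threshold, cluster names, and domains are hypothetical.

```python
def cluster_drop_alerts(previous, current, threshold_points=15.0):
    """previous/current: {cluster: visibility rate in percent}.
    Flags clusters whose rate fell by at least `threshold_points`."""
    alerts = []
    for cluster, prev_rate in previous.items():
        cur_rate = current.get(cluster)
        if cur_rate is not None and prev_rate - cur_rate >= threshold_points:
            alerts.append(f"{cluster}: visibility fell {prev_rate - cur_rate:.0f} points")
    return alerts


def takeover_alerts(previous, current, own_domain, competitors):
    """previous/current: {prompt: [cited domains in order]}.
    Flags prompts where a competitor replaced you as the first cited source."""
    alerts = []
    for prompt, prev_domains in previous.items():
        cur_domains = current.get(prompt, [])
        held_top = bool(prev_domains) and prev_domains[0] == own_domain
        new_top = cur_domains[0] if cur_domains else None
        if held_top and new_top in competitors:
            alerts.append(f"{prompt}: {new_top} replaced {own_domain} as first citation")
    return alerts


# Hypothetical data for two consecutive weeks.
rates_last = {"comparison": 60.0, "awareness": 30.0}
rates_now = {"comparison": 40.0, "awareness": 28.0}
cites_last = {"best ai visibility tools": ["examplebrand.example", "rivalco.example"]}
cites_now = {"best ai visibility tools": ["rivalco.example", "examplebrand.example"]}

print(cluster_drop_alerts(rates_last, rates_now))
print(takeover_alerts(cites_last, cites_now, "examplebrand.example", {"rivalco.example"}))
```

Inaccurate brand descriptions are harder to detect mechanically and usually still need a human read of the captured answer.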

This is where purpose-built tracking tools earn their place. Omnia flags visibility changes as models and outputs evolve, so your team doesn't need to manually re-run prompts every week to catch a disappearance. The alert comes to you; the investigation can start immediately.

Step 6 — Turn tracking into an execution backlog (what to do when visibility drops)

Tracking is only valuable if it changes what your team does next. The difference between a dashboard and an operational tool is whether the data creates work. Use this backlog template to translate AI Mode signals into prioritized actions:

AI Mode visibility tracking: the minimum viable stack (GSC + monitoring tool + reporting)

Most lean marketing teams don't need complex infrastructure to run serious AI Mode visibility tracking. Three layers are enough, as long as each layer is doing the right job and not being asked to do someone else's.

Layer 1 — GSC: Query and page trend context. Use it to flag impression and click changes by query cluster, maintain your changelog, and spot patterns that are worth investigating. Don't try to extract AI Mode-specific attribution from it. It's not there.

Layer 2 — Prompt-based AI monitoring tool: This is where your actual AI Mode tracking lives. You need something that captures AI Mode answers on a consistent schedule, extracts citations at the URL and domain level, tracks competitor presence across your prompt set, and alerts you when answers change. This is not a job for manual checking or a general-purpose SEO platform retrofitted with an AI tab. For a comparison of what's available, the AI search analytics tools guide and the AI visibility platforms roundup are both worth a look before you commit to something.

Layer 3 — Reporting layer: A Looker Studio dashboard or a structured Google Sheet reviewed on a weekly cadence. This is where your team looks at visibility rate, citation trends, share-of-voice vs. competitors, and the execution backlog from Step 6. Keep it simple enough that the review actually happens every week, not just when someone has time.

Who owns what:

The stack only works if ownership is clear. Citation analysis sitting in nobody's inbox is the fastest way to turn a good monitoring setup into an expensive dashboard nobody looks at.

Why Omnia is the best fit for marketers doing Google AI Mode tracking

Most AI visibility tools were built to show you data. Omnia was built to tell you what to do with it. For the marketers this guide is written for, that distinction is the whole game.

Lean teams at startups and scale-ups don't have six people to interpret a dashboard and translate findings into briefs. They need a tool that closes that gap without adding headcount. Omnia tracks AI Mode answers at the country level, so you can see whether your brand appears in UK results vs. US results for the same prompt and act on the difference rather than averaging it away.

Citation intelligence goes to the URL and domain level: not just that a competitor was cited, but exactly which page got cited and on which domain, so your content team knows what to create or fix without a lengthy investigation.

Share-of-voice tracking runs across your full prompt set against named competitors, giving you a clear view of where you're winning the AI recommendation layer and where you're losing ground. When visibility shifts, Omnia flags the change rather than waiting for your team to catch it during a manual review. That's the difference between responding to a problem in days and discovering it three weeks later when someone notices the traffic dip. You can see how that plays out in practice with a look at how to monitor AI search visibility and what a proper citation analysis setup looks like via our best citation analysis options guide.

The action layer is what separates Omnia from monitoring-only tools. Findings translate directly into content recommendations, briefs, and workflow outputs. The gap between "our visibility dropped on this prompt cluster" and "here's the content we need, and where it needs to go" shrinks from weeks to hours. That's what insights that trigger execution actually look like in practice, not a dashboard you have to interpret yourself.

Start for free and sign up for an Omnia Growth Plan 14-day trial.

FAQs

How quickly can we see impact after improving AI Mode visibility?

In most cases, you can see early shifts within days, not months. If you're updating an already-cited page or fixing positioning issues, changes in citations can show up the same week once the content is reprocessed. New content typically appears in AI Mode answers within about a week of indexing, assuming it directly matches prompt intent and is structured clearly. The real bottleneck is rarely Google; it is how fast your team identifies the gap and ships the fix. That is why consistent weekly tracking and a tight execution loop matter more than trying to predict timelines upfront.

How many prompts should we realistically track to get meaningful insights?

For most lean marketing teams, 50 to 150 well-chosen prompts is enough to get a reliable signal without creating operational overhead. The key is not volume, but relevance. Prompts should map to real buyer intent across awareness, consideration, and comparison stages. Tracking too few prompts gives you a distorted view, while tracking too many creates noise and slows execution. A focused set, reviewed weekly, is what allows you to spot trends, competitor movement, and citation gaps you can actually act on.

What’s the difference between “showing up” and actually winning in AI Mode?

Showing up means your brand is mentioned or cited in an answer. Winning means you are a prominent, trusted source: typically one of the first cited, consistently present across high-intent prompts, and not contradicted by competitors in the same answer. Many teams overestimate their performance because they appear occasionally but lack prominence or consistency. The real goal is to increase citation quality and share of voice, not just presence.

How do we know whether a drop is caused by AI Mode or something else?

You will not get a single definitive signal, so you need to triangulate. If GSC shows stable impressions but declining clicks, and your AI Mode tracking shows lost citations or competitor takeovers on the same prompt cluster, that is a strong indicator AI Mode is intercepting demand. If both AI Mode visibility and organic rankings drop together, it may be a broader SEO issue. The key is combining GSC trend data with prompt-level citation tracking and a changelog. Without that three-layer view, you are guessing rather than diagnosing.
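The triangulation described here can be written down as a rough triage rule. This is only a sketch: the function name, the 5% "stable" band, and the 10% click-drop threshold are assumptions chosen for illustration, and the output is a starting hypothesis, not a diagnosis.

```python
def triage(impressions_change, clicks_change, lost_citations, organic_rankings_dropped,
           stable_band=0.05, click_drop=0.10):
    """Changes are fractions vs. the prior period (for example, -0.2 means down 20%).
    lost_citations: your prompt tracking shows lost citations or takeovers on the cluster.
    organic_rankings_dropped: classic rank tracking shows a drop on the same cluster."""
    if lost_citations and organic_rankings_dropped:
        return "possible broader SEO issue"
    impressions_stable = abs(impressions_change) <= stable_band
    clicks_declining = clicks_change <= -click_drop
    if impressions_stable and clicks_declining and lost_citations:
        return "strong indicator of AI Mode interception"
    return "inconclusive: keep collecting data"


# Hypothetical cluster: impressions flat, clicks down 25%, citations lost, rankings steady.
print(triage(0.01, -0.25, True, False))
```

Pair any result with the changelog before acting on it, since a site change or a known Google update may explain the same pattern.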
