The competitive field inside Google AI Overviews is already consolidating. Each answer carries roughly nine citation slots, a figure stable since Q4 2025, and those slots are distributed across a fixed set of domains most brand teams have never mapped. Understanding who holds those slots in your category, what kind of presence they have, and whether that position is structural or still contestable is the competitive intelligence problem this article solves. Monitoring it continuously requires a defined query set, a weekly cadence, and a system for reading competitive shifts over time.

Knowing your competitor appeared in a Google AI Overview is not competitive intelligence. It is a single data point with no baseline, no pattern, and no path to a decision.

Most brand and competitive intelligence teams are running the same process: someone flags a competitor mention in a sales call or a leadership meeting, a few people run manual checks, a screenshot circulates, and the conversation moves on. Nothing is recorded. Nothing is tracked over time. And the question that actually matters — whether that competitor is building consistent authority across the queries your buyers are using to evaluate options — goes completely unanswered.

That question is not the same one the SEO team is asking. The SEO team wants to know whether their content is being cited and whether that citation is driving traffic. The competitive intelligence question is different: who does Google AI treat as the authority in your category, across which queries, with what kind of presence, and whether that position is already locked in or still contestable. This article is built around the second question.

Based on Omnia's proprietary citation database of 42M+ citations tracked across four engines, brand visibility varies by 20x or more across AI engines for the same domain. A competitor who looks authoritative in AI Overviews may be nearly invisible in ChatGPT for the same queries. A brand that appears competitive in a single manual check may be losing ground across an entire prompt cluster it has never thought to monitor. The competitive picture inside AI Overviews is a moving field, and the brands gaining ground on it are doing so systematically.

The AI Overview competitive field is already consolidating in your category

Most brand teams discover their AI Overview competitive problem the same way they discover any competitive problem: late. A sales call surfaces a competitor name. A founder asks why a rival is showing up in AI search. Someone does a manual check, sees a competitor mentioned, and the conversation shifts to "we should look into this."

What that conversation almost never surfaces is the structural reality underneath the observation. Based on Omnia's citation data, AI Overviews cite roughly nine unique domains per answer and that figure has been stable since Q4 2025. Nine slots per query. Fixed in number. The competitive field in AI Overviews is not open-ended; it is a constrained surface, and the domains occupying those slots are not rotating randomly. They are building presence systematically across query clusters, week over week.

The degree to which that field is already consolidated varies by category and that variation is the most strategically important thing a brand team can know before deciding how urgently to act. Research from Kevin Indig analyzing citation patterns in ChatGPT found that roughly 30 domains control 67% of citations per topic in product comparison verticals. His dataset is ChatGPT-specific and the precise figures do not transfer directly to AI Overviews, but the underlying dynamic holds across engines: citation authority concentrates around a small set of domains per topic, and the degree of concentration reflects category maturity.

In B2B SaaS specifically, Indig found that the top 10% of domains hold only 16% of citations — a genuinely fragmented competitive field where a focused content response can still establish a foothold. In more mature or technically narrow categories, the same 10% of domains can hold nearly 60% of citations. The path in is considerably harder.

That engine-specific and category-specific variation is also why competitive AI visibility cannot be assessed from a single engine or a single manual check. According to Omnia's citation data, brand visibility varies by 20x or more across engines for the same domain. A competitor dominant in AI Overviews may be nearly invisible as a named brand in ChatGPT for the same queries. Monitoring one engine produces one behavioral system's view of the competitive landscape — and that view may bear little resemblance to what buyers using a different engine are seeing.

The strategic question this section raises is a simple one: is the competitive field in your category open or already consolidating? A brand team that cannot answer it cannot make a rational decision about how urgently to act or where to focus first. The rest of this article builds the system that answers it.

Most of your competitors' AI Overview citations are invisible to standard monitoring

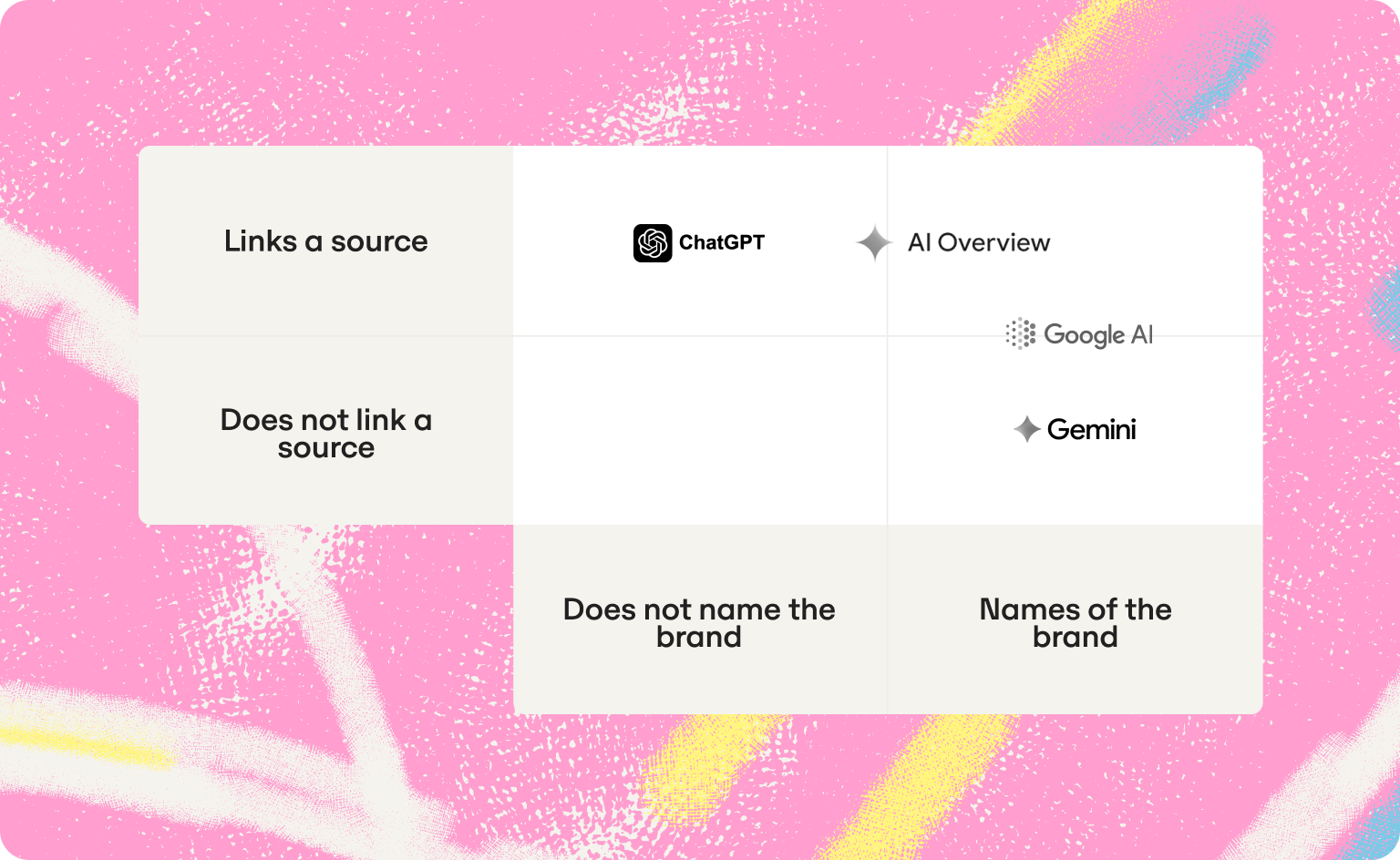

When an AI engine answers a question using a brand's content, it typically cites the domain with a source link. What it does not do, 62% of the time, is say the brand's name. The source link is there. The brand mention is absent. This is the ghost citation problem — and it fundamentally changes how competitive AI Overview presence should be read.

Additional research from Kevin Indig, published in April 2026, analyzed 3,981 domains across 115 prompts, 14 countries, and four AI engines. It found that 74.9% of tracked domains received source citations, but only 38.3% were mentioned by name in the generated text. The gap between those two numbers represents the competitive intelligence blind spot that most monitoring approaches miss entirely. A competitor can be shaping the answers your buyers receive in AI Overviews without ever appearing in a mention-based monitoring report.

The examples from Indig's research make the scale of this concrete. Medium.com was cited 16 times across three engines for the same prompts and named zero times. Wikipedia was cited 27 times and named in only two answers, both for the same conversational query. These are not edge cases — they are the standard behavior for content aggregators and information sources that AI engines treat as anonymous source material.

For a brand manager or marketing lead responsible for brand narrative and competitive positioning, the practical implication is direct. A competitor can be influencing buyer research through AI citations without ever triggering a text-level mention that appears in a standard monitoring report.

The competitive threat is structurally invisible to any approach that only tracks whether a brand name appears in generated text. Understanding owned vs. earned mentions, and which layer a competitor is operating in, is the first step toward reading their AI Overview presence accurately.

How to read a competitor's AI Overview presence as a competitive profile

Not all competitor presence in AI Overviews represents the same competitive threat. A brand team that treats every appearance as equivalent is responding to the wrong signal — and allocating content resources against a problem that may be far less urgent than the one they are missing.

Reading a competitor's AI Overview presence accurately means answering four diagnostic questions before deciding how to respond.

Are they named, cited, or both — and on which engines?

A competitor named in generated text has brand recognition in that engine for that query. A competitor cited as a source has content authority. A competitor with both has consolidated brand authority — the hardest position to displace. A competitor cited but never named is a ghost citation: their content is shaping the answer and their brand is not being built in the process.

The engine dimension matters here specifically. A competitor who is named in 80% of Gemini answers for a query cluster but is a ghost citation in ChatGPT has a very different competitive profile than one who is both named and cited consistently across all four engines. Which engines your buyers actually use for vendor research determines which of those profiles is more urgent. Track citation share per engine, not as an aggregate.
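The named/cited distinction above can be sketched as a small classifier, applied per engine. This is an illustrative Python sketch only: the `Presence` labels, the function names, and the engine keys are assumptions made for the example, not part of any tool described in this article.

```python
from enum import Enum


class Presence(Enum):
    NAMED_AND_CITED = "named and cited"   # consolidated brand authority
    NAMED_ONLY = "named only"             # brand recognition without a source link
    GHOST_CITATION = "ghost citation"     # content used, brand name absent
    ABSENT = "absent"


def classify_presence(named_in_text: bool, cited_as_source: bool) -> Presence:
    """Map one observation (one query, one engine) to a presence type."""
    if named_in_text and cited_as_source:
        return Presence.NAMED_AND_CITED
    if named_in_text:
        return Presence.NAMED_ONLY
    if cited_as_source:
        return Presence.GHOST_CITATION
    return Presence.ABSENT


def presence_by_engine(observations: dict[str, tuple[bool, bool]]) -> dict[str, Presence]:
    """observations maps engine name -> (named_in_text, cited_as_source)."""
    return {
        engine: classify_presence(named, cited)
        for engine, (named, cited) in observations.items()
    }


# Hypothetical competitor: named and cited in one engine, ghost citation in another.
profile = presence_by_engine({
    "ai_overviews": (True, True),
    "chatgpt": (False, True),
})
```

Keeping the result keyed by engine, rather than collapsing it into one label, preserves the per-engine view the paragraph above argues for.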

How stable is their presence across weeks?

A competitor appearing consistently across the same queries for six or more consecutive weeks has built model confidence in their content for that topic. Displacing them requires a structural content and authority response over months, not weeks. A competitor appearing intermittently holds a contested slot — one that a targeted content or distribution move can realistically win in a shorter timeframe. Citation stability is the signal that separates structural competitive threats from temporary ones. Track answer positioning over time, not as a point-in-time snapshot.
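One way to turn the six-week rule into a repeatable check is to count how many of the most recent weeks in a row a competitor has appeared for a query. The sketch below is a minimal illustration under that assumption. The six-week threshold comes from the text above; the function names and the "structural" / "contested" / "absent" labels are hypothetical.

```python
def current_streak(weekly_presence: list[bool]) -> int:
    """Count consecutive weeks of presence, ending at the most recent week.

    weekly_presence is ordered oldest to newest; True means the competitor
    appeared for the query that week.
    """
    streak = 0
    for present in reversed(weekly_presence):
        if not present:
            break
        streak += 1
    return streak


def slot_status(weekly_presence: list[bool], stable_weeks: int = 6) -> str:
    """Label a competitor's hold on a query as structural, contested, or absent."""
    if not any(weekly_presence):
        return "absent"
    if current_streak(weekly_presence) >= stable_weeks:
        return "structural"
    return "contested"
```

For example, `slot_status([True] * 6)` yields `"structural"`, while a competitor who drops out every other week stays `"contested"` however long the record runs.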

Which query types do they dominate?

A competitor consistently present on comparison queries ("brand X vs brand Y") occupies a different competitive position than one dominating problem queries ("how to solve X") or research queries ("best tool for X use case"). Comparison query dominance means buyers in active evaluation are encountering that competitor as the reference point. Problem query dominance means buyers earlier in the funnel are being directed toward that competitor's framing of the problem before they reach a vendor shortlist. These require different content responses. The query type breakdown is what converts a mention frequency number into a strategic insight.

Is their citation going to their own pages or through third parties?

A competitor cited through a G2 review, a press mention, or an industry aggregator is appearing in AI Overviews via a third-party source. The traffic from that citation goes to the third party, not to the competitor. Their brand association in AI answers is being built, but their own pages are not accumulating the citation pattern that compounds over time. A competitor cited directly through their own pages is building a different and more durable competitive position. This is the owned vs. earned mentions distinction applied to competitive profiling — and it changes the urgency of the response.
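The owned versus third-party check can be approximated by comparing the cited URL's host with the competitor's own domain. The following is a rough sketch, assuming full URLs (with scheme) are recorded in the log. `citation_layer` and the example domains are hypothetical, and a real setup would also need to handle brands that operate several domains.

```python
from urllib.parse import urlparse


def citation_layer(cited_url: str, brand_domain: str) -> str:
    """Return "owned" if the cited URL sits on the brand's domain, else "third-party".

    Expects a full URL including the scheme; a bare "example.com/page" has no
    parseable hostname and is treated as third-party.
    """
    host = (urlparse(cited_url).hostname or "").lower()
    brand = brand_domain.lower()
    if brand.startswith("www."):
        brand = brand[4:]
    # Match the domain itself or any subdomain of it (docs., blog., www.).
    if host == brand or host.endswith("." + brand):
        return "owned"
    return "third-party"
```

Under these assumptions, a citation to `https://docs.globex.com/guide` counts as owned for `globex.com`, while a review page on `https://www.g2.com/...` counts as third-party.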

Three competitive profiles emerge consistently from this diagnostic:

How to set up continuous monitoring for brand and competitor mentions in AI Overviews

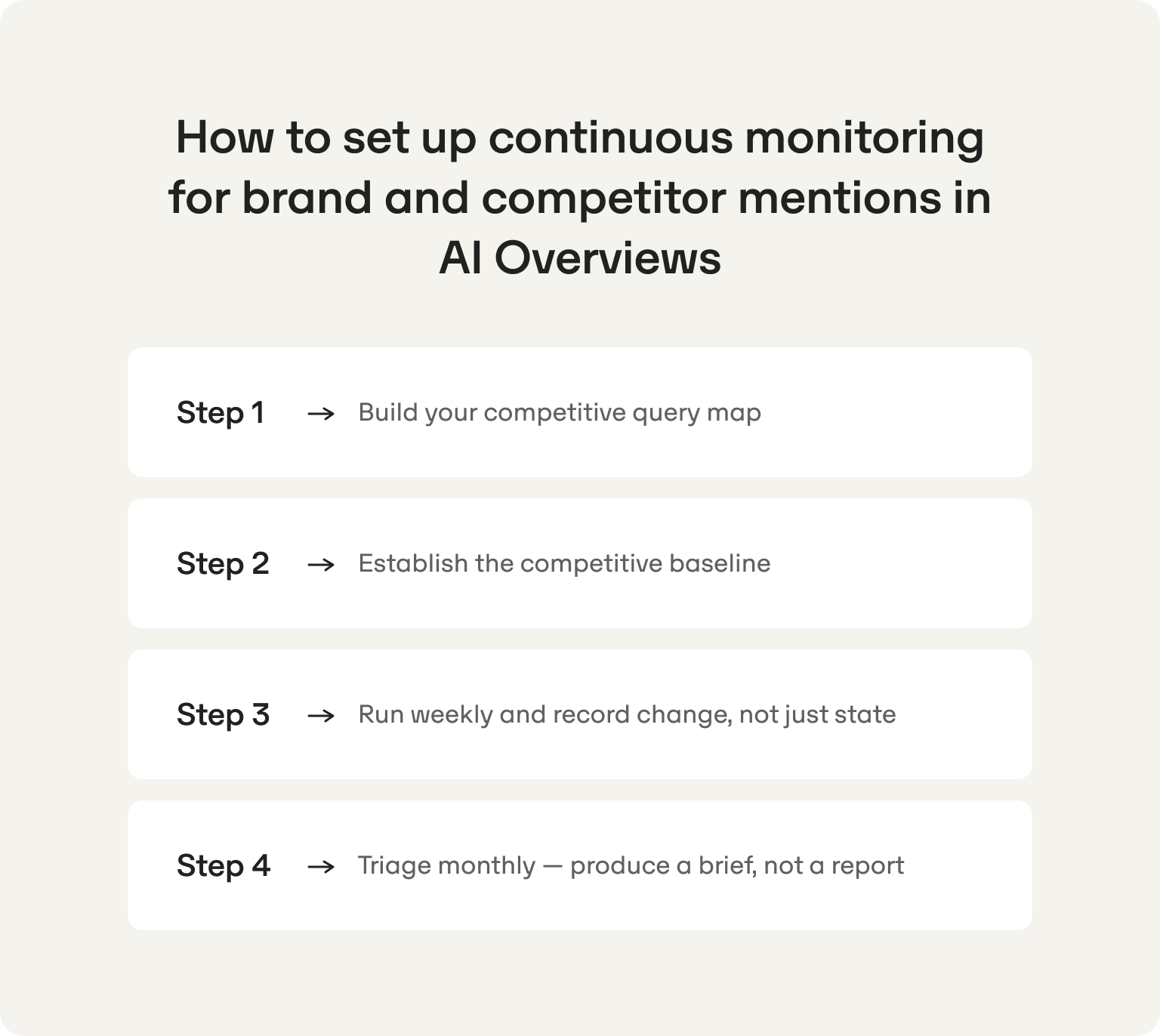

The competitive profiling framework in the previous section requires data to operate. That data comes from a monitoring system — built once, run weekly, and designed to produce a competitive brief every month rather than a report nobody acts on.

For a one- or two-person marketing team at a VC-backed startup, the system needs to be the minimum viable version: structured enough to produce a competitive picture, lean enough to run in one hour per week without displacing everything else.

Four steps. Each produces an output the next one requires.

Step 1: Build your competitive query map

The query set defines the ceiling of what the monitoring system can tell you about the competitive field. For this audience, the selection criterion is buyer decision moments (the queries a buyer asks when evaluating vendors), not keyword volume or organic ranking strength. How prompt research differs from keyword research in building this set determines the quality of the competitive data the system produces.

Thirty queries is the minimum for a lean team. Four types cover the competitive field:

- Comparison queries: "[your brand] vs [competitor]"

- Category queries: "best [product type] for [use case]"

- Problem queries: "how to [solve the pain your product addresses]"

- Research queries: what a buyer asks before reaching a vendor shortlist

Competitor set: four to six named competitors. The selection criterion is which names appear in sales conversations and lost deal feedback — not internal perceptions of who the main competitors are.

Before running a single check, document the full scope in a shared sheet: query, query type, each competitor as a separate column (text mention: Y/N), citation layer (URL if linked). Without that structure from day one, weekly checks produce observations, not a dataset that can be read over time.
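As a minimal sketch of that sheet structure, the Python below generates an empty CSV with one row per query and two columns per competitor (text mention, cited URL). A CSV stands in for the shared sheet here. The `week` column, the column naming pattern, and the function names are assumptions added for illustration.

```python
import csv
import io


def build_sheet_header(competitors: list[str]) -> list[str]:
    """Columns: week, query, query type, then two columns per competitor."""
    header = ["week", "query", "query_type"]
    for name in competitors:
        header.append(f"{name}_named")      # text mention: Y/N
        header.append(f"{name}_cited_url")  # citation layer: URL if linked
    return header


def new_sheet(queries: list[tuple[str, str]], competitors: list[str], week: str) -> str:
    """Return CSV text with one empty row per (query, query_type) for the given week."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(build_sheet_header(competitors))
    for query, query_type in queries:
        writer.writerow([week, query, query_type] + [""] * (2 * len(competitors)))
    return buffer.getvalue()


# Hypothetical query set and competitor names.
sheet = new_sheet(
    queries=[("acme vs globex", "comparison"), ("best crm for startups", "category")],
    competitors=["globex", "initech"],
    week="2026-01-05",
)
```

Generating the same empty template each week keeps the column layout identical across the record, which is what makes later week-over-week comparison possible.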

Step 2: Establish the competitive baseline

The baseline is the zero-point against which every future shift is measured. Run it once, at a fixed moment, before any content changes are made. For each query, record:

A team with a baseline can report competitive movement. A team without one can only describe a current state. Those are not equivalent positions when the founder asks what changed last month.

Step 3: Run weekly and record change, not just state

One hour per week. For each query, flag what changed since the prior week:

- New competitor named in generated text

- Existing competitor displaced

- Brand moved from cited to text-mention only, or dropped entirely

- First-mention position changed

- Ghost citation pattern appeared or resolved for a competitor

The change flag converts the weekly log from a presence report into a competitive signal. Six weeks of consistent flagging produces enough data to answer the questions that matter: which competitors are consolidating, which query clusters are contested, and where the content response should go first.
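The change flags can be derived mechanically by comparing two consecutive weeks for the same query. The sketch below covers a subset of the flags listed above (newly named, displaced, ghost citation appeared or resolved) and leaves out first-mention position. The data shape, a mapping from brand to `(named, cited)`, and the flag wording are assumptions for the example.

```python
Observation = dict[str, tuple[bool, bool]]  # brand -> (named_in_text, cited_as_source)


def change_flags(prev: Observation, curr: Observation) -> list[tuple[str, str]]:
    """Compare last week's and this week's observations for one query."""
    flags = []
    for brand in sorted(set(prev) | set(curr)):
        p_named, p_cited = prev.get(brand, (False, False))
        c_named, c_cited = curr.get(brand, (False, False))
        p_ghost = p_cited and not p_named
        c_ghost = c_cited and not c_named

        if c_named and not p_named:
            flags.append((brand, "newly named"))
        if (p_named or p_cited) and not (c_named or c_cited):
            flags.append((brand, "displaced"))
        if c_ghost and not p_ghost:
            flags.append((brand, "ghost citation appeared"))
        if p_ghost and c_named:
            flags.append((brand, "ghost citation resolved"))
    return flags


# Hypothetical two-week comparison for a single query.
last_week = {"globex": (True, True), "initech": (False, True)}
this_week = {"initech": (True, True), "umbrella": (False, True)}
flags = change_flags(last_week, this_week)
```

In this example `globex` is flagged as displaced, `initech` as newly named with its ghost citation resolved, and `umbrella` as a new ghost citation.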

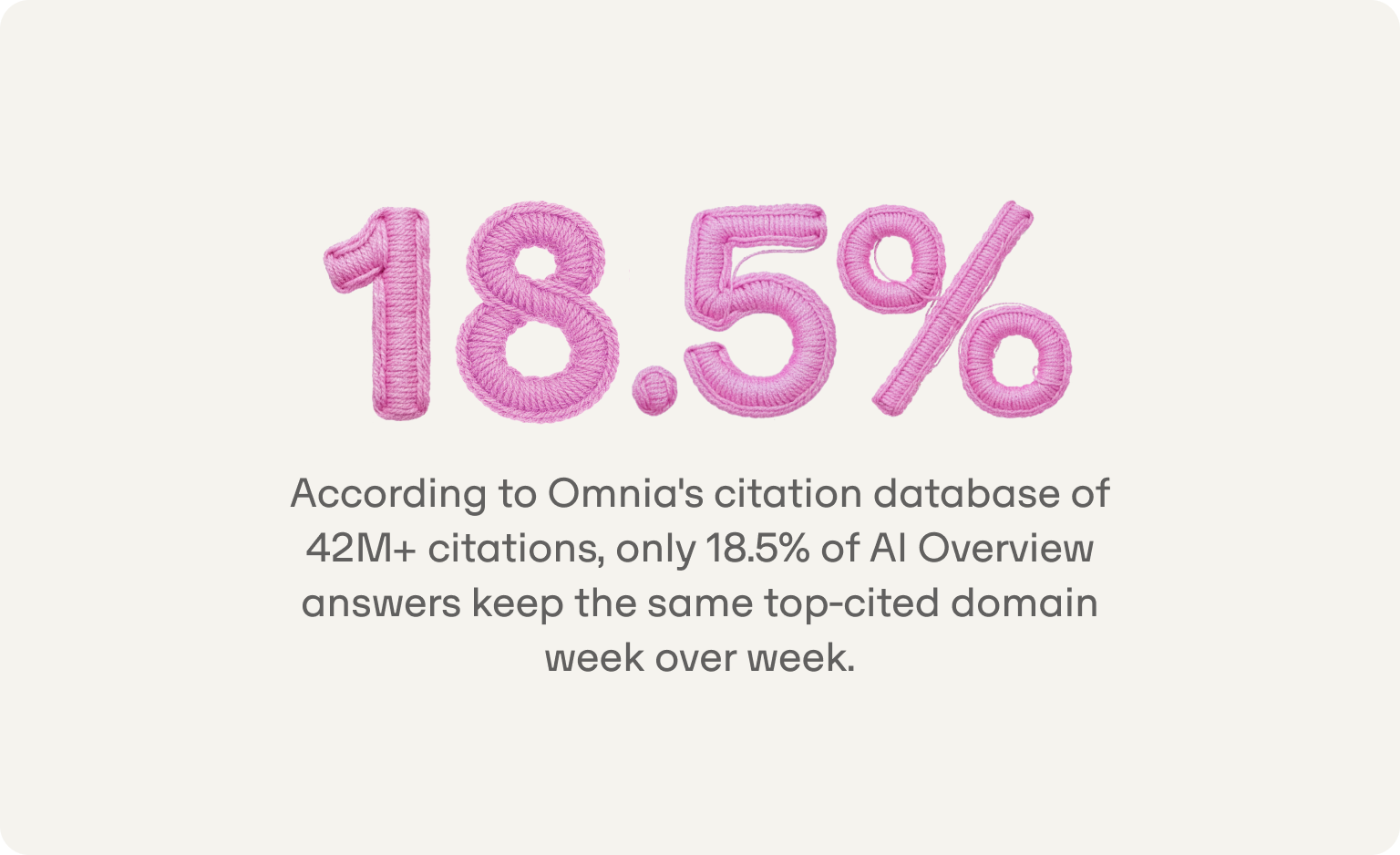

Weekly is the minimum viable cadence for this audience specifically. According to Omnia's citation database of 42M+ citations, only 18.5% of AI Overview answers keep the same top-cited domain week over week. A monthly review cadence means watching consolidation that already happened, not catching it while a response is still possible.

Step 4: Triage monthly — produce a brief, not a report

At the end of each month, apply the three competitor profiles from the diagnostic section to each query cluster:

The output of the monthly triage is three to five query clusters with a specific action type attached to each. For a one- or two-person marketing team, that list is the content brief for the month, built from competitive data.

Why AI Overview competitive monitoring belongs in your brand intelligence stack

A brand manager or marketing lead at a VC-funded tech startup already runs monitoring systems. Social listening tracks what buyers and press say about the brand. Review monitoring tracks what customers say on G2 and Capterra. Win/loss analysis tracks what buyers say when they choose a competitor. Each system monitors a surface where competitive perceptions form and brand authority is won or lost.

AI Overviews are now that surface for a growing share of high-intent buyers before they visit a website, speak to sales, or read a review. The gap in most brand intelligence stacks is the absence of any system for monitoring what is being said — and which competitors are being named and cited — on that surface. Three arguments explain why closing that gap is becoming urgent.

The consolidation problem

Citation authority in AI Overviews consolidates over time. The more consistently a competitor is cited and named for a query cluster, the more the model reinforces that association, and the harder it becomes to displace. A brand team not monitoring this process cannot detect it until the consolidation is already structural. By the time sales is hearing "we saw Competitor X recommended in AI search," the competitor has built months of citation momentum across the exact query types your buyers use to evaluate vendors.

After eight weeks of consistent monitoring, the system should tell you:

- Which competitors are gaining ground week over week across your tracked query set

- Which query clusters one competitor dominates consistently — the structural competitive gaps requiring a cluster-level content response

- Which parts of the competitive field are still fragmented and open to a first-mover content move

That is what separates catching consolidation while a response is still feasible from responding after it has locked in.

The positioning intelligence problem

How a competitor appears in AI Overviews — which query types they are named in, which use cases they are associated with, which comparisons they anchor — is positioning intelligence that no competitor website audit, ad monitoring tool, or social listening platform surfaces. AI Overview monitoring is the only way to see how the AI layer has already interpreted and framed the competitive landscape in a category. Knowing that a competitor is being framed as the category default on comparison queries while your brand appears primarily on problem queries tells you something about competitive positioning that no other monitoring system can show.

After eight weeks of consistent monitoring, the system should tell you:

- Whether a competitor's AI Overview presence is built on ghost citations — content used, brand name absent — or on named and cited authority actively building brand association in buyer-facing answers

- Which query types each competitor is associated with, and whether those associations match the positioning battle that matters most for your category

- Where your brand appears in the competitive framing AI Overviews have already established — and where it is absent entirely

Those are different threats requiring different responses, and the distinction is only visible with a dated competitive record.

The reporting problem

Brand investment is under pressure to be measurable. The traditional brand measurement story (share of voice in earned media, aided awareness, brand recall) is a format founders and CMOs already understand. AI Overview mention share is the same concept applied to the surface that is replacing search as the first point of market contact for buyers. A marketing lead who can show mention frequency rate relative to two key competitors, tracked over eight weeks, with a narrative about which content actions drove improvement, has a brand measurement story leadership can act on.

After eight weeks of consistent monitoring, a founder or CMO check-in should take two minutes and cover five points:

- Which competitors are gaining or losing ground in AI Overviews this month

- Which query clusters are structurally lost versus still contestable

- Whether the ghost citation pattern among competitors represents a consolidating threat or a weak position

- Whether overall competitive position has improved, held, or deteriorated since the baseline

- Where the content investment this month goes, and why

That is a competitive intelligence function. A collection of screenshots and a gut feeling is not.

Three questions fall outside what this system is designed to answer:

- Why a competitor is winning a specific query cluster. The monitoring system surfaces the gap. Diagnosing the content decisions and authority patterns behind it requires reviewing the specific cited pages, the domains they appear on, and the content structures AI models prefer — a separate content analysis step.

- Whether AI Overviews are affecting organic traffic. That question requires Search Console CTR analysis against confirmed AI Overview presence, covered in full in the companion article on tracking rankings and visibility in Google AI Overviews.

- Which single engine to prioritize. The monitoring data shows where the brand is winning and losing across each engine. The prioritization decision depends on where buyers in the specific category conduct vendor research — a strategic call the data informs, not one it makes.

Omnia is built to make this function operational for a lean team. It produces the continuous competitive mention record that makes consolidation visible before it locks in, the engine-specific data that functions as positioning intelligence, and the monthly triage output that converts a competitive gap into a content brief the team can act on this week.

Book a demo to see how Omnia maps the competitive mention field in your category, or get started and run your first competitive citation audit today.

FAQs

What is a ghost citation in AI Overviews, and why does it matter for competitive monitoring?

A ghost citation occurs when an AI engine uses a brand's content as a source — linking to the domain — but never mentions the brand name in the generated text. Research from Kevin Indig analyzing 3,981 domains across four AI engines found that 62% of all citations are ghost citations: the source link is present, the brand name is absent. For competitive monitoring, this matters because a competitor can be shaping buyer-facing AI answers in your category without ever appearing in a monitoring report that only tracks text mentions. Standard mention-based tracking misses the ghost citation layer entirely, which means it systematically undercounts certain types of competitive presence and overcounts others.

Can I track competitor mentions in AI Overviews with Google Search Console or standard SEO tools?

Search Console does not show competitive data of any kind, and has no segment or report that surfaces how competitors are mentioned or cited in AI Overviews. Standard rank trackers measure organic index position, which is a separate surface from AI Overview citation presence. A brand can hold position one in organic results and be absent from the AI Overview above it for the same query — and a competitor ranked below them can be the cited source. Tracking competitive AI Overview mentions requires a purpose-built system that monitors text-level mentions and source citations separately across a defined query set, with a dated record of how each shifts week over week.

How is monitoring competitor mentions in AI Overviews different from social listening or review monitoring?

Social listening and review monitoring capture what people say about brands on surfaces where human-generated content is the input. AI Overview competitive monitoring captures how AI models characterize and cite brands in response to buyer queries, a surface where the content is AI-generated and shapes buyer perceptions before they visit a website or read a review. The competitive intelligence produced is different in kind: instead of tracking sentiment and mentions across social platforms, this system tracks which competitors are being framed as category authorities in the specific query types your buyers use during vendor evaluation. The two systems are complementary, not redundant.

How many queries do I need to monitor for a meaningful competitive picture?

Thirty queries is the minimum viable set for a lean startup marketing team. That set should span four query types: comparison queries, category queries, problem queries, and research queries — all selected based on buyer decision moments rather than keyword volume. Prioritize queries where AI Overviews appear consistently (80% or more of checks) over those where they appear intermittently, since intermittent queries produce unreliable competitive data. A 30-query set tracked consistently every week produces more actionable competitive intelligence than 100 queries tracked monthly with no consistent structure.
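The 80% consistency filter can be applied directly to a log of checks. The sketch below is illustrative: it assumes each query has a list of booleans recording whether an AI Overview appeared on each check, and `reliable_queries` is a hypothetical helper name.

```python
def reliable_queries(checks: dict[str, list[bool]], min_rate: float = 0.8) -> list[str]:
    """Keep queries where an AI Overview appeared in at least min_rate of checks.

    checks maps query -> one boolean per check (True = AI Overview appeared).
    Queries with no recorded checks are excluded.
    """
    kept = []
    for query, seen in checks.items():
        if seen and sum(seen) / len(seen) >= min_rate:
            kept.append(query)
    return kept
```

Queries that fall below the threshold can stay in the sheet, but excluding them from trend calculations avoids reading noise as competitive movement.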

What does it mean if a competitor is cited frequently but never named in AI Overview text?

A competitor in this position is operating as a ghost citation — their content is being used as source material by the AI engine, but their brand is not being built in the process. Based on Indig's research, this is the norm for content aggregators and information sources that AI engines treat as anonymous reference material. For competitive profiling, a ghost citation competitor is a lower immediate priority than one who is both consistently named and cited, because their cited presence is not generating brand association in buyer-facing answers. That said, a ghost citation pattern that is consolidating — appearing across more queries week over week — warrants monitoring, as it can shift to named presence if the competitor builds broader category authority.

How do I know whether a shift in competitive mentions reflects a Google update or a competitor gaining ground?

Apply the cluster test to your weekly monitoring record. A Google-side platform change affects many queries simultaneously and typically spans different competitors and different topic areas at the same time — it looks like a broad shift across the full query set. A competitive consolidation affects a specific cluster of related queries consistently, and benefits the same competitor across multiple consecutive weeks. Without a dated historical record of competitive mention data, both patterns look identical: a shift with no clear cause. With six or more weeks of consistent weekly tracking, the two causes produce visibly different patterns in the data.
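A rough, single-week version of the cluster test might look like the sketch below. It is a heuristic illustration only: the 50% thresholds, the labels, and the input shape are assumptions rather than figures from this article, and it does not cover the multi-week consistency described above, which would need the same check repeated across consecutive weeks.

```python
from collections import Counter


def cluster_test(
    changes: list[tuple[str, str]],
    total_clusters: int,
    broad_share: float = 0.5,
) -> str:
    """Classify one week's shifts as broad, cluster-specific, or inconclusive.

    changes holds one (query_cluster, benefiting_competitor) pair for every
    tracked query whose competitive mentions shifted this week.
    """
    if not changes:
        return "no shift"
    clusters_touched = {cluster for cluster, _ in changes}
    winners = Counter(winner for _, winner in changes)
    top_winner_share = winners.most_common(1)[0][1] / len(changes)

    # Many clusters moved and no single competitor benefits: looks platform-side.
    if len(clusters_touched) / total_clusters >= broad_share and top_winner_share < 0.5:
        return "broad"
    # One cluster moved and one competitor takes most of the gains.
    if len(clusters_touched) == 1 and top_winner_share >= 0.5:
        return "cluster-specific"
    return "inconclusive"
```

A "cluster-specific" result in one week is only a prompt to watch that cluster; under the reasoning above, it becomes evidence of consolidation when the same competitor keeps benefiting in the following weeks.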

How do I present AI Overview competitive data to a founder or CMO who hasn't heard of GEO?

Frame it in brand measurement language they already use. AI Overview mention share works the same way share of voice does in traditional brand measurement — it tells you what proportion of the relevant competitive surface your brand occupies relative to named competitors. Lead with the comparison: "In the 30 queries buyers use to evaluate tools like ours, Competitor X appears in 67% of AI Overview answers. We appear in 35%. On comparison queries specifically, they appear in every tracked answer and we appear in none." That is a competitive positioning finding, not a technical SEO briefing. Follow with the three to five query clusters where a content response would have the highest impact, and the specific action type for each. A founder or CMO who understands competitive positioning will understand the problem immediately — the terminology is the only unfamiliar part.
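The mention share figure in that comparison is a simple proportion: tracked answers in which a brand appears, divided by all tracked answers. A minimal sketch follows, assuming each tracked answer is recorded as the set of brands that appeared in it. The brand names and counts are hypothetical and do not reproduce the percentages quoted above.

```python
def mention_share(answers: list[set[str]], brand: str) -> float:
    """Fraction of tracked answers in which the brand appears (0.0 if none tracked)."""
    if not answers:
        return 0.0
    hits = sum(1 for brands in answers if brand in brands)
    return hits / len(answers)


# Hypothetical 30-query record: the competitor appears in 20 answers, our brand in 10.
tracked = [{"globex", "acme"}] * 10 + [{"globex"}] * 10 + [set()] * 10
competitor_share = mention_share(tracked, "globex")
our_share = mention_share(tracked, "acme")
```

Running the same function on only the comparison queries, or only one engine's answers, gives the per-type and per-engine breakdowns used elsewhere in this article.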
