Checking whether your brand name appears in a Perplexity answer is not the same as understanding your Perplexity presence. The signal that actually predicts competitive standing is citation position: according to Omnia's citation database, positions 1 through 6 each account for 11–12% of all Perplexity citations, while position 9 accounts for 3% and position 10 and above less than 0.1%. This article builds the minimum viable tracking system for a lean team to monitor both layers continuously, identify where competitors hold source authority in their category, and produce a weekly action list rather than a monitoring report.

Most brand and content teams check their Perplexity presence the same way: run a few queries, scan the generated text for the company name, and note whether it appears. That process has a name. It is called spot-checking. It is not monitoring.

Perplexity generates answers by pulling from a source layer that is visible, numbered, and trackable in a way no other AI engine currently matches. Google AI Overviews collapse their citations. ChatGPT surfaces almost none. Perplexity shows its sources inline, every time, in ranked order. That transparency is the opportunity most teams are sitting on without a system to use it.

There is a second problem that compounds the first. A brand can appear in Perplexity answers in two distinct ways: as a named entity in the generated text, and as a cited domain in the source list. These are independent outcomes. A brand cited at position two that never gets named in the answer text has a different gap to close than a brand named without being cited at all. Teams that track only one layer misdiagnose why they are losing ground to competitors.

This article is specifically about Perplexity. If your question is about Google AI Overviews, who holds category authority across Google's AI systems, or how to build a competitive intelligence picture around AI Overviews citation behavior, the companion article on tracking brand and competitor mentions in AI Overviews covers that system. The question this article answers is narrower and earlier: is Perplexity treating your brand as a credible source in your category, and if not, which layer of your presence is missing?

Perplexity's citation model makes brand visibility structurally different from other AI engines

Perplexity is the only major AI engine that shows its sources inline, numbered, and consistently present on almost every answer it generates. That is not a minor product detail. It is the reason brand monitoring in Perplexity is a tractable problem when monitoring in other engines is not.

Google AI Overviews surface citations selectively, collapsing them behind a link count that tells you nothing about source hierarchy. ChatGPT surfaces almost none by default. Perplexity shows the source layer every time, in ranked order, with the domain visible. The audit trail exists. Most brand teams just have no system for reading it.

Before building that system, there is one distinction the methodology depends on. A brand can appear in a Perplexity answer in two structurally different ways: as a named entity in the generated text, or as a cited domain in the numbered source list. These are independent outcomes, and they are not interchangeable signals. A brand named in the answer text because Perplexity knows it well enough to reference it is not the same as a brand whose pages Perplexity is actively pulling from as source material. The first is recognition. The second is source authority. Losing ground in one does not mean losing ground in both, and gaining in one does not fix a gap in the other.

This distinction also explains why Perplexity requires a different tracking approach than ChatGPT, even though both are AI answer engines. According to Omnia's citation database tracking 42M+ citations across four AI engines, Perplexity averages 7.5 domains per answer. ChatGPT averages 4.1.

Perplexity offers nearly twice the citation opportunity of ChatGPT. But a wider source pool does not mean a more forgiving one. It means the competitive field is larger, the source mix is different, and the tracking methodology has to reflect Perplexity's specific mechanics rather than borrowing from how teams approach Google or ChatGPT. Getting cited by ChatGPT with its four average slots is an exceptionally strong authority signal precisely because the table is so small. Getting into Perplexity's source layer is more accessible — but only for brands that understand what Perplexity selects from and why.

Three source behaviors show up consistently across research-intent queries in Perplexity and determine whether a brand has a realistic path to citation.

Freshness weighting

Perplexity weights recently updated content more heavily than Google AI Overviews does. A page that has not been touched in 18 months competes at a structural disadvantage against a competitor who updates theirs quarterly. This creates a different content maintenance cadence requirement than most brand teams are running.

Query specificity

Perplexity tends to cite sources that directly answer the question asked, not sources that cover the broader topic area. A well-optimized category page that covers the general subject performs worse than a tightly scoped piece built around a specific query. Categorical content is less reliable than query-specific content, and the gap between the two widens as the query gets more precise.

Source redundancy

Per topic cluster, Perplexity pulls from a small, relatively stable set of domains. Once a competitor establishes presence in that source set, displacing them by optimizing the same content type is unlikely to work. The path in is usually a different query variant or a different content format that Perplexity has not already assigned to a dominant source.

The question this article is built to answer is not "does Perplexity know our brand?" Most brands at the startup and scaleup stage are known. The question is whether Perplexity's LLM source selection process is pulling your pages into answers for the queries your buyers are actually running — and if not, which layer of your presence is missing.

What most brand teams get wrong when they check their Perplexity mentions

The three mistakes below are not edge cases. They are the default approach for almost every brand and content team that starts paying attention to Perplexity. Each one produces a plausible-looking result that is wrong in a specific way.

Checking your brand name instead of your source domain

The standard process is to run a few queries, scan the generated text for the company name, and record a mention if it appears. This process misses the citation layer entirely. A brand can be named in Perplexity's generated text without a single page from its domain appearing in the source list — and a brand can be cited at position one in the source list without its name ever appearing in the answer. Text mentions are a lagging signal. They reflect what Perplexity has already learned about a brand's category position. Citation presence is the leading signal. It reflects what Perplexity is actively pulling from to construct its answers right now. Teams that monitor only for text mentions are reading yesterday's data.

Checking one query instead of a query cluster

Perplexity's responses vary significantly by phrasing, even across queries with identical buyer intent. A brand cited in "best project management tools for remote startups" may not appear in "project management software for small teams" even though both queries describe the same evaluation moment. A single query check produces a single data point. A cluster of 20–30 phrasing variants across awareness, comparison, and validation intent types produces a map of where source authority actually exists and, more importantly, where it is absent. One data point is not a baseline. It is closer to a coin flip.

Treating citation as binary

Cited or not cited is the wrong frame, and it produces the wrong diagnosis. The relevant dimensions are: cited and named, cited but unnamed, named without citation, and absent entirely. Each state has a different cause. A brand cited at position two that never gets named is facing a content framing problem — Perplexity trusts the source but is not treating the brand as the definitive answer to the query. A brand named without citation is running on reputation rather than source authority, which means a competitor with better-structured content can displace it without the brand ever seeing it coming. A brand that is simply absent has a source placement problem, not a content quality problem. Applying the wrong fix to the wrong diagnosis wastes months.
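The four states above can be logged mechanically for each answer. The sketch below is one minimal way to do that, assuming you have already captured the answer text and the list of cited domains by hand or by export. The names `PresenceState` and `classify_presence`, and the `acme.com` example values, are illustrative and not part of any Perplexity or Omnia API.

```python
from enum import Enum


class PresenceState(Enum):
    CITED_AND_NAMED = "cited and named"
    CITED_UNNAMED = "cited but unnamed"
    NAMED_UNCITED = "named without citation"
    ABSENT = "absent entirely"


def classify_presence(answer_text: str, cited_domains: list[str],
                      brand_name: str, brand_domain: str) -> PresenceState:
    """Map one logged answer to one of the four presence states."""
    # Assumption: a case-insensitive substring match is enough to detect
    # the brand name. Real brands may need aliases or word-boundary checks.
    named = brand_name.lower() in answer_text.lower()

    # Assumption: subdomains (blog.acme.com) count as the brand's own domain.
    own = brand_domain.lower()
    cited = any(d.lower() == own or d.lower().endswith("." + own)
                for d in cited_domains)

    if cited and named:
        return PresenceState.CITED_AND_NAMED
    if cited:
        return PresenceState.CITED_UNNAMED
    if named:
        return PresenceState.NAMED_UNCITED
    return PresenceState.ABSENT
```

Recording the state per query rather than a yes/no flag keeps the diagnosis attached to the data point, so the fix chosen later matches the gap that was actually observed.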

Most Perplexity monitoring tools on the market today track text mentions and stop there. That is not a monitoring system. It is a notification service. Before building the full tracking system in the sections below, it is worth knowing where your brand currently stands. Omnia's free AI ranking checker generates a report showing how your brand appears across AI engines, including Perplexity, in under two minutes. It is a useful baseline before any structured monitoring begins and it makes the gaps in the sections that follow considerably easier to diagnose.

How to read your Perplexity presence as a source intelligence picture

Most brand teams approach Perplexity with a single question: are we showing up? That question is not wrong, but it is too blunt to produce a useful answer. Showing up at position eight on a query that only four people run per month is not the same competitive outcome as showing up at position two on a query that drives evaluation decisions in your category. Before any tracking system is worth building, there is a diagnostic layer that needs to come first — a way of reading what your current Perplexity presence is actually telling you, rather than just recording whether it exists.

Four questions structure that diagnostic. Each one narrows the problem from "we have a Perplexity visibility issue" to a specific gap with a specific fix.

Question 1: Which of your domains does Perplexity cite, and for which query types?

The answer locates where source authority already exists so the team can extend it rather than starting from scratch. If Perplexity cites your blog consistently for comparison queries but not for how-to queries, the gap is structural — a content format problem, not a domain authority problem. If it cites a third-party review site that mentions your brand rather than your own pages, the gap is ownership. Knowing which domain is being cited, and on which intent type, determines whether the next action is to create content, optimize existing content, or pursue placement on sources Perplexity already trusts. Running the same fix across all query types without this distinction wastes the effort.

Question 2: Are you cited, named, or both?

These are independent signals and they point to different problems. A brand cited at position two without its name appearing in the generated text has a framing gap — Perplexity is treating the page as useful source material but not as the definitive answer to the query. A brand named frequently in generated text without a corresponding domain citation is running on accumulated brand recognition rather than active source authority, which is a fragile position. A competitor with better-structured, more query-specific content can displace that brand from the answer without the brand team ever noticing, because the name may keep appearing in text for weeks after the citation has moved elsewhere. The distinction between owned and earned mentions matters here: owned citations — your domain in the source list — are the durable layer. Named mentions without citation are borrowed equity.

Question 3: What position are your citations appearing at, and does that position hold week over week?

This is where Perplexity's tracking system becomes categorically different from every other AI engine. Google AI Overviews, AI Mode, and ChatGPT do not expose numbered citation positions. Perplexity does — and the position distribution makes the stakes concrete.

According to Omnia's citation data, the position breakdown for all Perplexity citations looks like this:

- Positions one through six: roughly 11–12% of all citations each.

- Position seven: 9%.

- Position eight: 6.5%.

- Position nine: 3%.

- Position 10 and above: less than 0.1% of all Perplexity citations combined.

Appearing at position eight or nine is not meaningfully different from not appearing at all, and no other AI engine gives a brand team the data to know which situation they are in.

Position stability matters as much as position itself. A brand holding position two across 80% of tracking runs for a given query cluster has embedded source authority. A brand oscillating between positions two and seven week over week has marginal presence that a competitor can displace with a single well-structured content update.
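Position stability can be reduced to a single number per query cluster. The sketch below shows one possible definition: the most common citation position and the share of tracking runs in which the domain held it. The function name `position_stability` and the choice to keep uncited runs in the denominator are assumptions made for illustration, not a metric defined by Omnia.

```python
from collections import Counter
from typing import Optional


def position_stability(
    positions: list[Optional[int]],
) -> tuple[Optional[int], float]:
    """Return (most common citation position, share of runs at that position).

    `positions` holds one entry per tracking run for a query cluster:
    the citation position observed, or None when the domain was not cited.
    Uncited runs stay in the denominator, so they lower the stability score.
    """
    cited = [p for p in positions if p is not None]
    if not cited:
        return None, 0.0
    modal_position, hits = Counter(cited).most_common(1)[0]
    return modal_position, hits / len(positions)


# Five weekly runs: position two in four of them, position seven once.
runs = [2, 2, 2, 2, 7]
print(position_stability(runs))  # (2, 0.8)
```

A cluster that returns position two with a share of 0.8 matches the "embedded" case described above. A cluster split evenly between two and seven would return a share near 0.5 and deserves attention before a competitor update pushes it lower.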

Question 4: Are your citations going to your own pages or through third-party sources?

If Perplexity consistently cites review aggregators, industry publications, or comparison sites that mention your brand rather than your own domain, your Perplexity presence is borrowed. It depends on a third party maintaining content that happens to reference you favorably. That third party can update, restructure, or deprioritize your brand at any time — and the citation moves with it. Competitive AI visibility built on third-party citations is not a strategic position. It is a dependency.

The three source profiles that emerge from this four-question diagnostic are worth naming explicitly, because each one implies a different type of response:

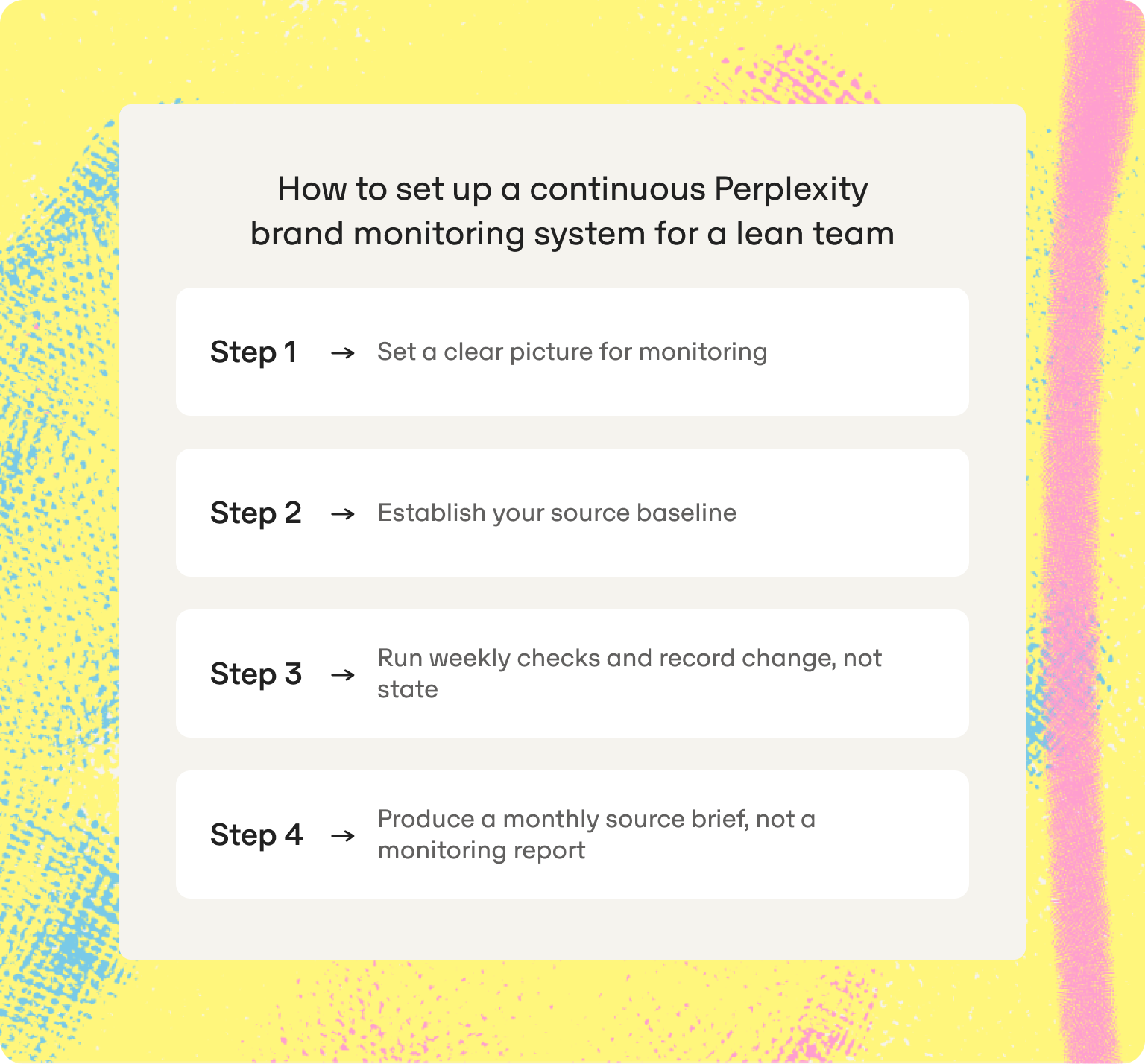

How to set up a continuous Perplexity brand monitoring system for a lean team

The diagnostic above tells you where you stand. The system below tells you how to track whether that changes — and why. It is built for a one or two-person marketing team at a VC-backed startup with no dedicated GEO resource and roughly two hours per week available for this work. Each step produces an output the next step requires. They cannot be usefully reordered.

Step 1: Set a clear picture for monitoring

Start with 20–30 queries distributed across three intent types (awareness, comparison, and validation) that represent the buyer decision moments in your category. The table below shows the structure and example phrasing for each type; adapt the bracketed placeholders to your specific category.

Step 2: Establish your source baseline

Run every query in the tracking set on the same day and record all six data points. This is your T0 — the picture of where you stand before any content or placement action is taken. Do not interpret it yet. A baseline is only meaningful when there is a T1 to compare it against, and any interpretation before that comparison is speculation dressed as analysis.

What the baseline does immediately is produce a prioritized problem set. Queries where your domain is not cited and your brand is not named are visibility gaps: answer inclusion criteria are not being met, and the fix is source placement. Queries where your domain is cited but your brand is not named are framing gaps: the content is trusted but not positioned as the definitive answer. Queries where third-party sources carry your brand are dependency risks, because the citation is not yours to control. Sorting every query in the tracking set into one of these three categories gives the team a ranked action list before a single piece of content has been written or updated.
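The sorting step can be done in a spreadsheet, but a short script makes the rules explicit. The sketch below assumes each baseline row carries three yes/no fields. The field names, the `BaselineRow` type, and the order in which the rules are checked are illustrative choices, not part of the method as published.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BaselineRow:
    query: str
    own_domain_cited: bool
    brand_named: bool
    # True when a cited third-party page mentions the brand (assumed field).
    third_party_carries_brand: bool


def triage(row: BaselineRow) -> Optional[str]:
    """Sort one baseline row into a gap category, or None if no gap applies."""
    # Assumption: dependency is checked first, so a query carried only by
    # third parties is not also counted as a plain visibility gap.
    if not row.own_domain_cited and row.third_party_carries_brand:
        return "dependency risk"
    if not row.own_domain_cited and not row.brand_named:
        return "visibility gap"
    if row.own_domain_cited and not row.brand_named:
        return "framing gap"
    return None  # cited and named, or a case outside the three categories


baseline = [
    BaselineRow("best [category] tools", False, False, False),
    BaselineRow("[brand] vs [competitor]", True, False, False),
    BaselineRow("is [brand] worth it", False, True, True),
]
for row in baseline:
    print(row.query, "->", triage(row))
```

Grouping the output by category gives the first version of the action list: placement work for visibility gaps, restructuring for framing gaps, and owned content for dependency risks.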

Step 3: Run weekly checks and record change, not state

One hour per week. Run the same query set. Record the six data points again. The variable being tracked is not whether your brand appears — it is whether that changed since last week, and in which direction.

Flag five specific change types each week: new citation (your domain appeared where it did not the prior week), lost citation (your domain disappeared from a query where it appeared), position shift (your citation moved up or down by two or more positions), competitor gain (a competitor domain or brand name appeared for the first time on a given query), and source shift (Perplexity changed which third-party domains it cited on a given query cluster). These five change types are the signals. Everything else is state, and state without change is not actionable.
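The five change types above amount to a comparison between two weekly snapshots of the same query. The sketch below is one way to express that comparison, assuming each snapshot stores your citation position, the competitors observed, and the third-party domains cited. The `Snapshot` fields and the `weekly_changes` function are hypothetical names, and "first appearance" is approximated here by comparing against the prior week only.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Snapshot:
    """One week's record for one query (field names are illustrative)."""
    own_position: Optional[int]  # None when your domain is not cited
    competitors_seen: set[str] = field(default_factory=set)
    third_party_domains: set[str] = field(default_factory=set)


def weekly_changes(prev: Snapshot, curr: Snapshot) -> list[str]:
    """Return the change types flagged between last week and this week."""
    flags = []
    if prev.own_position is None and curr.own_position is not None:
        flags.append("new citation")
    elif prev.own_position is not None and curr.own_position is None:
        flags.append("lost citation")
    elif (prev.own_position is not None and curr.own_position is not None
          and abs(curr.own_position - prev.own_position) >= 2):
        # Only moves of two or more positions count as a shift.
        flags.append("position shift")

    # Competitors present this week that were not present last week.
    if curr.competitors_seen - prev.competitors_seen:
        flags.append("competitor gain")

    # Any difference in the cited third-party set counts as a source shift.
    if curr.third_party_domains != prev.third_party_domains:
        flags.append("source shift")
    return flags


last_week = Snapshot(2, {"rival.com"}, {"g2.com"})
this_week = Snapshot(5, {"rival.com", "other.com"}, {"g2.com"})
print(weekly_changes(last_week, this_week))
# ['position shift', 'competitor gain']
```

A query that returns an empty list has state but no change, which under the rule above means it does not go on the week's action list.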

The reason weekly is the minimum viable cadence for this audience is practical, not theoretical. Perplexity's source pool per answer has been contracting — down from a peak of 11.8 domains per answer in November 2025 to 7.5 in April 2026, according to Omnia's citation database. A contracting source pool means the brands already embedded in Perplexity's trusted source layer are harder to displace, and the brands outside it are competing for fewer available slots each month. A team checking monthly is reading data that is already four weeks stale. By the time they notice a competitor has gained position, the window to respond with new content has likely passed.

Step 4: Produce a monthly source brief, not a monitoring report

At the end of each month, apply the three source profiles from the diagnostic section to each query cluster in the tracking set. Assign each cluster to owned authority, earned presence, or borrowed presence based on the past four weeks of data. Then identify the three to five query clusters where the profile has degraded or where the gap to a competitor is widest.

From those clusters, produce a ranked list of content and placement actions: which queries need new owned content built around conversational intent, which need an existing page updated for freshness, and which need placement on a third-party domain that Perplexity already cites in that cluster. This list is the brief that goes to whoever owns content production. It is not a chart of citation counts over time. It is a ranked set of decisions with a specific rationale attached to each one.

That distinction matters more than it sounds. A monitoring report tells the team what happened. A source brief tells the team what to do next. The former sits in a shared folder. The latter drives the content calendar for the following month. For a one or two-person team without a dedicated GEO resource, the only monitoring system worth running is the one that ends with an action list, because without that output the two hours per week spent tracking produce nothing the business can use.

Why Perplexity monitoring belongs in your brand intelligence stack — and how Omnia runs it at scale

The four-step system above works. For a team running it manually across 20–30 queries for a single brand, the weekly time cost is manageable and the output is genuinely useful. The moment a competitor set enters the picture — tracking three to five competitors across the same query map, recording their citation positions, flagging when they gain or lose ground — the manual approach breaks. Logging six data points per query per competitor per week across 25 queries and four competitors is 600 data points a week. No one does it consistently. The baseline degrades, the change signals become noise, and the monthly source brief turns back into a monitoring report that nobody acts on.
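The workload figure is simple multiplication, and writing it out makes it easy to test other team sizes. The snippet below reproduces the 600 figure from the paragraph above. The second total, which adds your own brand to the competitor rows, is an extension added here for illustration and is not a number from the source.

```python
DATA_POINTS_PER_QUERY = 6
QUERIES = 25
COMPETITORS = 4

# Competitor rows only, as in the paragraph above.
weekly_competitor_points = DATA_POINTS_PER_QUERY * QUERIES * COMPETITORS

# Assumption: your own brand is logged with the same six data points.
weekly_total_with_own_brand = DATA_POINTS_PER_QUERY * QUERIES * (COMPETITORS + 1)

print(weekly_competitor_points)     # 600
print(weekly_total_with_own_brand)  # 750
```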

Perplexity is not a social channel and it is not a traditional search engine. It is a research engine. The people using it are in active evaluation mode — comparing tools, validating shortlists, stress-testing decisions they have already provisionally made. That makes citation presence in Perplexity a brand signal that sits closer to purchase intent than almost any other channel a lean marketing team is currently tracking. Social listening tells a team when people talk about their brand. Review monitoring tells them what buyers say when they evaluate it. Perplexity monitoring tells them whether the AI engine their buyers are actively using for vendor research considers their brand a credible enough source to cite — and at what position. That is a different layer of intelligence, and it belongs in the same stack as the other two.

Omnia is built for the point where manual tracking stops being a methodology and starts being a liability. Its specific capabilities for Perplexity brand monitoring:

- Continuous citation tracking at the source level. Omnia monitors Perplexity answers across a brand's full query set daily, recording text-level mentions and source-level citations as separate data layers. The two are never conflated, which means the diagnostic from the previous section is always current rather than a point-in-time snapshot.

- Citation position tracking. Because Perplexity is the only AI engine with a trackable position hierarchy, Omnia records citation position across every query in the tracking set — not just presence or absence. A position-one citation and a position-nine citation are reported separately, because they are not the same competitive outcome.

- Named vs. cited separation. Omnia flags when a brand is cited but not named, and when it is named but not cited, so the team always knows which type of gap they are solving for rather than applying a single content fix to two structurally different problems.

- Competitor source profiling. Omnia tracks which domains Perplexity cites for competitor-relevant queries, surfacing when a competitor gains or loses citation share.

- Sentiment analysis via MCP connector. For teams who need to go beyond citation presence into how Perplexity frames their brand in generated text, Omnia's MCP connector surfaces sentiment signals directly inside the AI assistant workflow — so the team can see not just whether they are cited, but how the answer positions them relative to competitors.

- Action layer output. Omnia's output is not a chart of mention counts over time. It is a prioritized content brief: which query clusters need new content, which need a freshness update, and which need third-party placement on a domain Perplexity already trusts in that cluster.

What this system does not cover: organic traffic attribution from Perplexity referrals, or whether a citation shift reflects a Perplexity model update rather than a competitor content action. Both are worth tracking — they just require a different instrument. For a one or two-person marketing team at a VC-backed startup, the priority is knowing where you stand in Perplexity's source layer today, whether that is changing, and what to publish next. That is what this system is built to tell you — and it is the same system a growing number of agency partners are already running at scale on behalf of their clients.

Start tracking your Perplexity brand presence with Omnia: sign up today for a free 14-day trial of the Growth plan.

FAQs

What's the difference between a brand mention and a brand citation in Perplexity — and why does it matter for monitoring?

A mention is when your brand name appears in Perplexity's generated answer text. A citation is when Perplexity includes your domain as a numbered source in its reference list. These are independent outcomes — a brand can be mentioned without being cited, and cited without being mentioned — and they point to different problems. Text mentions reflect what Perplexity has already learned about a brand's category position. Source citations reflect what Perplexity is actively pulling from to construct its answers right now. Monitoring only for mentions is reading a lagging signal while ignoring the leading one. The cited inclusion rate of your domain across a defined query set is the metric that predicts future mention frequency, not the other way around.

Can I track my Perplexity mentions with social listening tools or Google Search Console?

No. Social listening tools crawl social platforms and some web content — they do not query AI engines or read AI-generated answers. Google Search Console reports on traffic from Google properties only. Standard SEO tools built around AI Overviews are designed around Google's specific citation behavior, which does not transfer to Perplexity's source model. Tracking Perplexity brand mentions and citations requires a tool that queries Perplexity directly at scale, logs source citations and text mentions as separate data layers, records citation position, and tracks all of it against a fixed query set over time. No general-purpose SEO or social tool currently does all four.

How is tracking brand mentions in Perplexity different from tracking them in Google AI Overviews?

The mechanics are different enough to require separate systems. Google AI Overviews collapse their citations and generate answers for a subset of queries, making the source layer difficult to audit consistently. Perplexity shows citations inline on almost every answer, in numbered order, making the source layer directly trackable. The strategic question is also different. The companion article on tracking brand and competitor mentions in AI Overviews is built around mapping competitive field consolidation — who Google AI treats as the category authority and whether that position is still contestable. This article is built around source intelligence — whether Perplexity is actively pulling from your pages, at what position, and why competitors appear instead when they do.

How many queries do I need in my Perplexity tracking set for meaningful results?

20 queries is the practical minimum for a single brand tracking its own presence across the three intent types. Below that threshold, there is not enough variation to distinguish source authority from query-specific luck: a brand that appears on three out of five queries has a very different position than one that appears on 15 out of 20, and the smaller set cannot tell the difference reliably. A set of 30–40 queries is the range where a competitor set of three to four names can be added while keeping the weekly manual check under two hours. Above 50 queries per brand, the manual approach becomes unsustainable and a dedicated Perplexity brand monitoring platform is the more reliable option.

What does it mean if Perplexity cites my domain consistently but never names my brand in the answer text?

It means Perplexity trusts your content as source material but is not treating your brand as the definitive answer to the query — only as a contributing reference. This is a framing gap, not a domain authority problem. Perplexity tends to name brands explicitly in generated text when the query is comparative ("which tool is better," "what are the alternatives") or when a page is structured as the direct, complete answer to a specific question rather than a useful resource on a broader topic. The fix is usually content restructuring rather than new content creation: pages that open with a direct answer to the query, use the brand name in that answer, and are built around canonical answer design principles rather than general topic coverage.

How do I know whether a shift in my Perplexity citation count reflects a competitor action or a Perplexity model update?

Look at the pattern across the query set, not the individual data point. If citations drop across the entire tracking set simultaneously — across multiple intent types and multiple query clusters — the likely cause is a Perplexity index or model update. Your content did not change, but Perplexity's source weighting did. If citations drop on a specific query cluster while a competitor gains ground on those same queries in the same week, the likely cause is a competitor content or placement action. Running a competitor set in parallel with your own brand tracking is what makes this distinction readable. Without the competitor data, both explanations look identical from the inside.
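The pattern check described above can be written as a rough heuristic. The sketch below assumes you have, for each query cluster, two weekly flags: whether your citations dropped and whether a competitor gained. The function name `diagnose_drop` and the 0.8 cutoff for "across the entire tracking set" are assumptions chosen for illustration; tune the cutoff to your own data.

```python
def diagnose_drop(cluster_changes: dict[str, tuple[bool, bool]],
                  broad_threshold: float = 0.8) -> dict[str, str]:
    """Label each cluster's drop with its likely cause.

    `cluster_changes` maps cluster name to a pair of flags:
    (own_citations_dropped, competitor_gained).
    """
    if not cluster_changes:
        return {}

    dropped = [c for c, (own_drop, _) in cluster_changes.items() if own_drop]
    # A drop that hits most clusters at once points away from any one rival.
    broad = len(dropped) / len(cluster_changes) >= broad_threshold

    result = {}
    for cluster, (own_drop, competitor_gained) in cluster_changes.items():
        if not own_drop:
            result[cluster] = "no drop"
        elif broad:
            result[cluster] = "likely index or model update"
        elif competitor_gained:
            result[cluster] = "likely competitor action"
        else:
            result[cluster] = "unexplained"
    return result


week = {
    "comparison": (True, True),
    "awareness": (False, False),
    "validation": (False, False),
}
print(diagnose_drop(week))
```

The "unexplained" label is deliberate. A drop on one cluster with no competitor gain is not evidence of either cause, and the honest output is to keep watching it for another week.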

How do I present Perplexity monitoring data to a founder or CMO who has not heard of GEO?

Skip the methodology entirely. Lead with the business question: your buyers are using Perplexity to research vendors in this category, and here is whether your brand shows up when they do — and what your closest competitors are doing that you are not. Frame it as competitive intelligence, not as a new channel requiring investment. The three source profiles from the diagnostic section — owned authority, earned presence, borrowed presence — map cleanly onto language a founder or CMO already uses: where we own the position, where we are visible but fragile, and where a competitor is taking the reference we should hold. The monthly source brief format works for this audience because it ends with a ranked action list. A ranked list is a decision. A monitoring report is a document.