AI citation tracking is the practice of monitoring which sources ChatGPT, Perplexity, Google AI Overviews, and other AI engines actually pull from when answering prompts in your category. Not "does our brand come up" in a vague sense, but specifically: which URLs get cited, how often, and against which competitors. It matters right now because your buyers are already using AI answers to shortlist vendors before they ever visit a website. If you are not in those answers, you are losing demand you cannot see. This week, the most useful thing you can do is run 20 prompts that reflect real buyer intent across two engines, log whether your site gets cited, and note which competitor keeps showing up instead. That simple audit is your starting point. The rest of this article shows you exactly how to build on it.

At some point in the last year, you probably opened ChatGPT, typed something about your category, and watched a competitor get recommended. No explanation. No obvious reason why them and not you. You might have tried it a few more times, from different angles, and got similarly inconsistent results. Then you went back to whatever was on fire that day, because there was no clear action to take.

That experience is the exact problem AI citation tracking is built to solve. It gives you a repeatable way to answer the questions that actually matter: where do you stand in AI answers today, who is outranking you and on which prompts, and what do you change first. This is not another layer of SEO theory. It is a practical system for a channel that is already shaping how buyers find products like yours.

What is AI citation tracking and why does it matter for founders and lean teams?

You opened ChatGPT, typed something about your category, and a competitor came up. Not you. You tried a different phrasing. Still not you. You closed the tab because there was nothing obvious to do with that information.

That moment matters more than it feels like it does. G2's 2025 Buyer Behavior Report, surveying 1,169 B2B decision-makers, found that generative AI chatbots are now the single biggest influence on vendor shortlists, ranking above vendor websites, market research firms, and independent content.

Buyers are building shortlists in AI answers before they ever visit your website. Being absent from those answers is a distribution problem, not a content quality problem. And it is one you cannot fix if you cannot see it.

That is what citation tracking gives you: a clear picture of where you stand in AI answers today, who is occupying that space instead of you, and which content moves to make first. If you want a broader view of the tooling landscape before going further, this breakdown of AI visibility platforms is a useful reference. But the core idea here is simple: citation tracking is not a reporting exercise. It is how you turn an invisible problem into a prioritised to-do list.

Opening ChatGPT in an incognito tab and running a few searches feels like research. It is not. AI answers shift by engine, by country, by day, and by prompt wording. Screenshot-based spot checks give you false confidence. A system catches what a spot check misses.

AI citation tracking definition (plain English, no fluff)

AI citation tracking refers to the practice of monitoring which sources AI engines pull from when answering prompts in your category, how often your site appears among those sources, and how that compares against competitors over time.

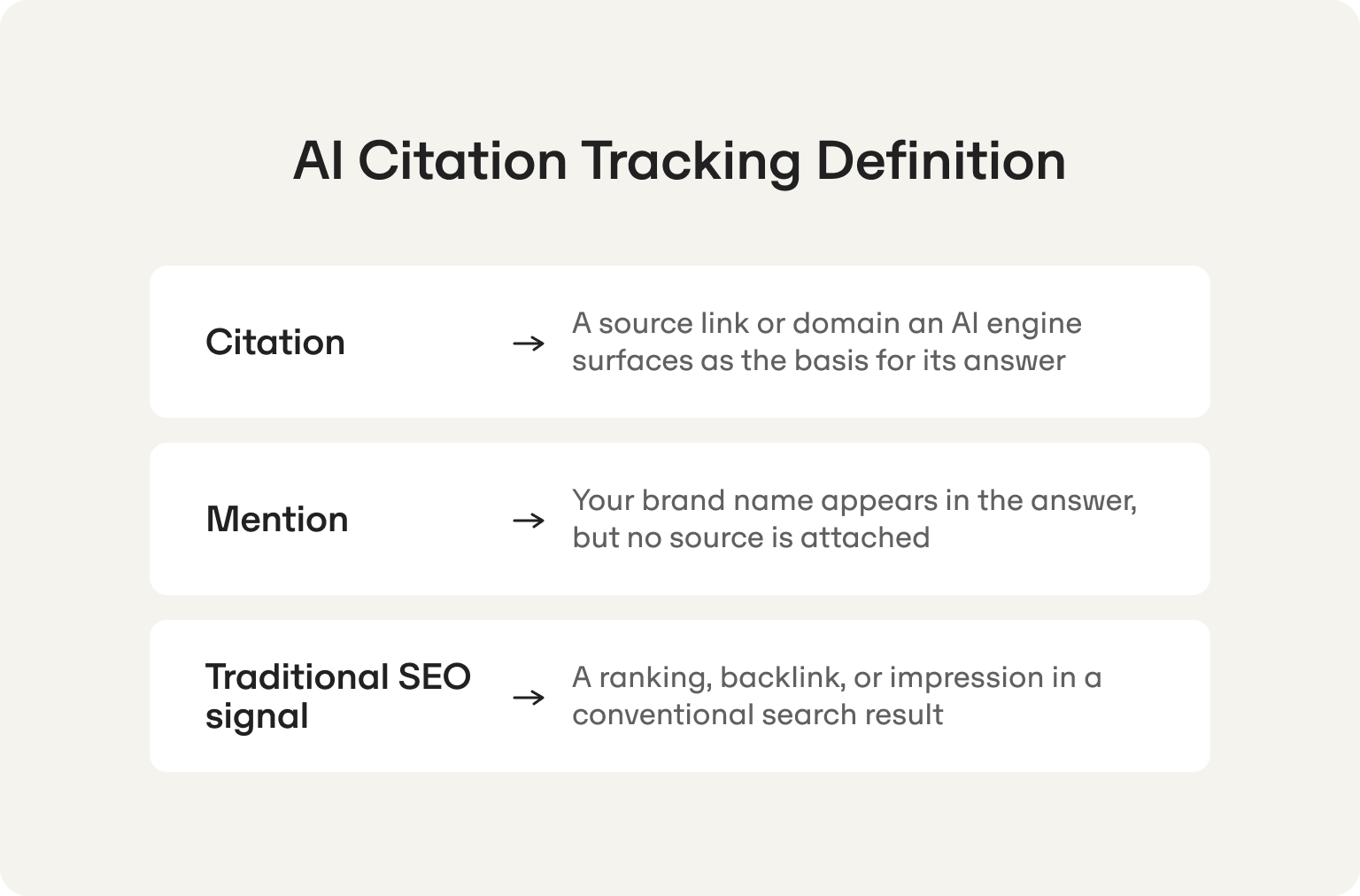

Three terms worth keeping distinct, because teams conflate them constantly:

- Citation: A source URL that an AI engine surfaces as the basis for its answer. In Perplexity, these appear as numbered references in a sidebar. In ChatGPT with browsing enabled, they show as inline footnotes. A citation means the engine actively used that page to construct its response. You can read a fuller breakdown of how this works in Omnia's knowledge base on AI citations.

- Mention: Your brand name appears in the answer body, but no source URL is attached. The engine knows you exist, likely from training data, but is not treating your content as a source. A mention without a citation is a different problem with a different fix.

- Traditional SEO signal: A ranking position, a backlink count, or an impression in a conventional search result. These live in Google Search Console. They measure a system with a published algorithm. AI citations do not work that way.

Quick illustration: you run Perplexity and ask "What are the best GEO tools for B2B startups?" Four source URLs appear in the sidebar, drawn from a third-party comparison article and a couple of vendor knowledge-base pages. That is citation behavior. The answer body also says "tools like [your brand] are designed for lean teams" without linking anywhere. That is a mention. Your domain authority and backlink profile had limited direct influence on either outcome.

Understanding AI citation tracking: how it differs from backlinks and PR mentions

Backlinks are stable. They accumulate over time and reflect how the broader web has chosen to reference your content. Domain authority is a real asset, and it was built to serve a system where ranking is the output.

AI citations work differently. Every time an AI engine answers a prompt, it runs a fresh retrieval process, weighs sources against that specific query, and decides what to surface. That same well-ranking domain does not automatically get cited. The same page that earns citations for one prompt phrasing may not appear for a slightly different one. This is also why prompts and search queries behave so differently as discovery surfaces, and why treating them as equivalent leads teams into the wrong conclusions.

PR coverage creates a similar false floor. Earned media in respected publications can contribute indirectly to AI citations, if those publications are sources AI engines already trust. But a press mention does not guarantee a citation. The chain of influence is indirect and the outcome is not predictable. Teams that assume their PR programm covers their AI visibility are regularly surprised to find they are invisible in the answers their buyers read most.

The practical consequence: your citation profile and your SEO profile need separate tracking with separate methodologies. The confusion sets in when teams assume one dashboard covers both. It does not. Citation behavior changes by prompt, by engine, by country, and by week. A system built to track static rankings will not catch that movement, which means it will not catch the content gaps causing it.

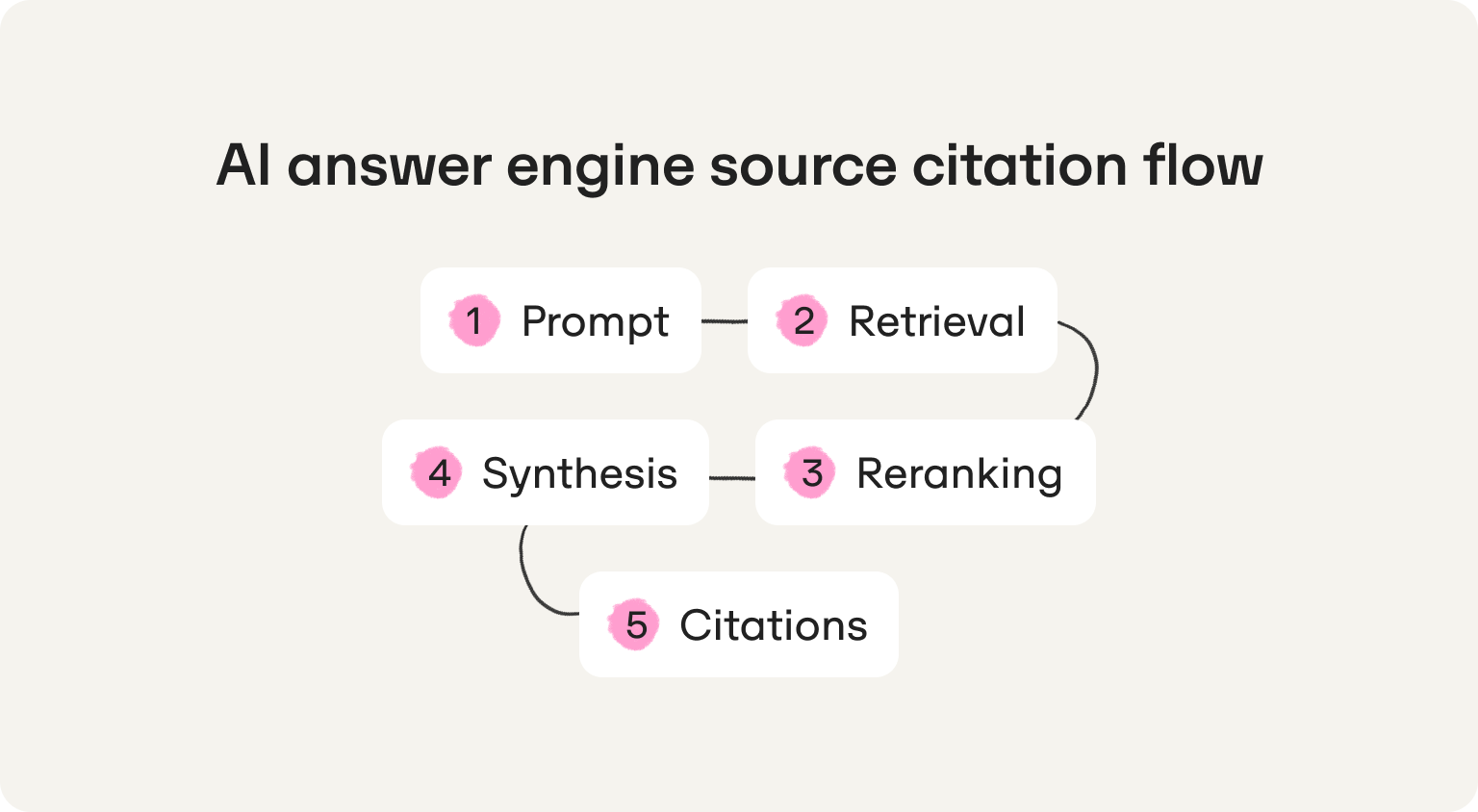

The source citation flow: prompt → retrieval → synthesis → citations

When you submit a prompt to an AI engine, roughly four things happen before a cited answer reaches you:

- 1. Retrieval: The engine queries a source pool. Depending on the engine and its current configuration, that pool might be a live web index, a curated set of trusted domains, the model's training data, or some combination of all three. What gets pulled at this stage depends on how well a source's content matches the semantic intent of the prompt, not just its keywords. Retrieval is where geography starts to matter: engines weight local sources differently depending on the country setting of the query.

- 2. Reranking: The retrieved sources do not all carry equal weight. A reranking layer scores them against the specific prompt, taking into account signals like content structure, topical authority, recency, and how cleanly the page answers the question being asked. This step is model-specific and not publicly documented. It is also where the same domain can perform very differently across two similar prompts.

- 3. Synthesis: The engine generates a response by drawing on the top-ranked sources. Grounding is the term for this process: the model anchors its answer to specific retrieved content rather than generating freely from training data alone. The quality and structure of your content directly influences how much of it survives into the synthesised answer.

- 4. Citations: The sources that contributed meaningfully to the synthesised answer get surfaced as citations. In some engines these are explicit URLs. In others they are domain references. In others still, they are inline footnotes. The citation is the visible output of everything that happened in the three steps before it.

Variability enters at every stage. A different prompt wording changes what gets retrieved. A model update changes how reranking weighs recency. A content refresh on a competitor's page can shift whose material grounds the synthesis. This is why a single spot check tells you almost nothing useful on its own.

How ChatGPT, Perplexity, and Google AI Overviews display citations differently

Knowing which engine you are tracking matters, because they behave differently in ways that directly affect how you interpret your data.

ChatGPT

Citations appear as inline superscript footnotes when web browsing is active. Without browsing enabled, ChatGPT draws primarily from training data and surfaces no citations at all. That distinction is important: a search run in ChatGPT without browsing is measuring something closer to brand familiarity than live citation behavior. For tracking purposes, always run prompts with browsing enabled and note the model version, as citation behavior shifts between releases.

Perplexity

The most citation-transparent of the three. Sources appear as a numbered list in a sidebar, visible alongside the answer, and the answer body references them by number inline. Perplexity is conducting live web retrieval on every query, which makes it one of the most useful engines to track for understanding which domains AI currently trusts in your category. Typically surfaces four to six sources per answer, though this varies by prompt complexity.

Google AI Overviews

Citations appear as expandable source chips beneath the generated answer. Google's retrieval draws heavily on its existing search index, which means pages that rank well in conventional search have a higher baseline probability of appearing here than in ChatGPT or Perplexity. That said, high rankings do not guarantee inclusion. Content structure and extractability matter independently of ranking position. Coverage also varies significantly by country, query type, and whether AI Overviews are triggered at all for a given prompt.

For a more detailed walkthrough of how to monitor AI search visibility across these engines, including which prompt types tend to trigger overviews and which do not, that guide covers the operational detail this section intentionally skips.

AI search citation tracking: what you can and can't measure reliably

Before you build a measurement system, it helps to know where the floor is. AI citation tracking is genuinely useful. It is also genuinely variable, and teams that ignore that variability end up reporting noise as signal and making content decisions based on a single bad data point.

The good news: variability does not make tracking useless. It makes methodology important. Understand the five main sources of inconsistency and you can build around them rather than be misled by them.

Location and country

The same prompt run in Spain and the UK can return different cited sources, different competitors, and sometimes a different answer structure entirely. If your buyers are in specific markets, tracking from a US IP address is not tracking your actual competitive landscape. Country-level data is not a nice-to-have. For a business focused on specific geographies, it is the only data that reflects reality. This is directly relevant to how Omnia approaches prompt research across markets.

Personalization

AI engines, particularly ChatGPT, factor in session history and account context. A logged-in user who has previously discussed your category will sometimes see different answers than a fresh session. For tracking purposes, always use clean sessions and note whether you are logged in or out.

Time and model updates

AI engines update their models, their retrieval indexes, and their reranking logic on a rolling basis. A citation pattern that holds for three weeks can shift after a quiet model update you never saw announced. This is not a reason to stop tracking. It is a reason to log model versions alongside your data and annotate known update dates in your change log.

Query wording

"Best GEO tool for startups" and "top generative engine optimisation platforms for early-stage companies" are semantically close but will not always return the same cited sources. Small variations in phrasing change what gets retrieved. This is why prompt clusters matter more than individual prompts, and why you need coverage across multiple phrasings of the same intent.

Engine-specific behavior

As covered in the previous section, ChatGPT, Perplexity, and Google AI Overviews retrieve, rerank, and display citations differently. A visibility rate measured only in Perplexity is not a visibility rate. It is a Perplexity visibility rate. Keep engine data separate.

Why manual spot-checking fails (and when it's still useful)

Manual checking is not worthless. It is just the wrong tool once your tracking needs grow past a handful of prompts.

Here is the honest split:

Manual is fine when you are:

- Running a one-time audit to establish a rough baseline

- Checking a single competitor's presence on a specific prompt

- Validating a hypothesis before committing to a full tracking setup

- Explaining citation behavior to a sceptical colleague who needs to see it to believe it

You need a system when you are:

- Tracking more than ten prompts regularly

- Comparing visibility across more than one engine or country

- Trying to connect content changes to visibility shifts over time

- Reporting citation performance to investors, a board, or leadership

- Attempting to calculate share of voice against named competitors

Five signals that it is time to stop using screenshots and move to a monitoring system:

- You have run the same prompt three times and got three different answers, with no way to know which is representative

- You are copying results into a spreadsheet by hand and it is already taking more than an hour a week

- A competitor appeared in an answer and you have no idea whether that is new or has been happening for months

- You published new content and want to know whether it changed anything, but have no pre-publication baseline to compare against

- Someone asked you how your AI visibility compares to a specific competitor and you genuinely could not answer

What a good starting baseline looks like for a startup or scaleup

You do not need a sophisticated setup to start. You need a consistent one.

A minimal viable baseline for a startup or scaleup looks like this:

- 20 to 50 prompts, organised into clusters by intent type (more on building these in the next section)

- 2 to 3 engines: Perplexity as your primary, ChatGPT with browsing enabled as your secondary, Google AI Overviews where relevant to your category

- 2 countries: align these to where your buyers actually are. For Omnia's core ICP, that means running in the UK and Spain as separate tracked environments, not pooled together

- Weekly sampling cadence: daily is overkill at this stage and introduces more noise than signal. Weekly gives you enough data points to detect real trends within a month

A useful baseline spreadsheet row looks like this:

Four weeks of this, run consistently, gives you a pattern. A pattern gives you something to act on. That is the entire point.

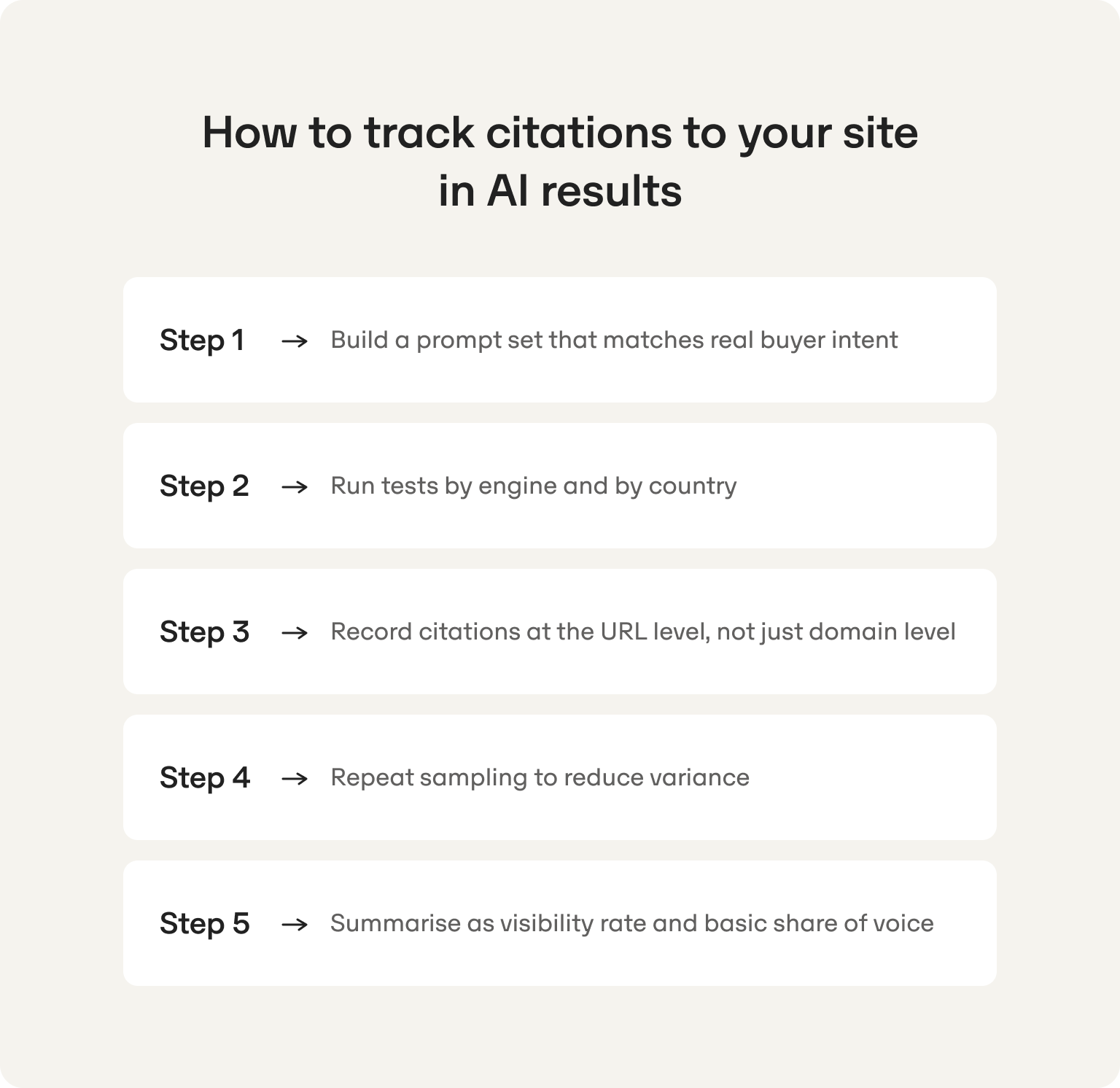

How to track citations to your site in AI results (manual workflow you can run this week)

This is a working system, not a theoretical framework. A founder or a one-person marketing team can run every step below without any paid software and come away with a real answer to the question: do we show up in AI answers, and where?

Set aside two hours the first time. After that, the weekly maintenance is under an hour.

Step 1 - Build a prompt set that matches real buyer intent

The prompts you track need to reflect how your actual buyers search, not how you wish they would describe your product. The most useful way to organise them is by cluster: groups of prompts that share the same underlying intent.

Four clusters cover the majority of relevant buyer behavior for a B2B SaaS company.

Cluster 1: Category prompts

The buyer is trying to understand the landscape.

- "What is the best [category] tool for startups?"

- "Top [category] platforms for small marketing teams"

- "Best [category] software in 2026"

- "Which [category] tools do B2B companies use?"

- "What should I look for in a [category] platform?"

Cluster 2: Alternatives prompts

The buyer already knows a competitor and is looking for other options.

- "Alternatives to [Competitor A]"

- "Best [Competitor A] alternatives for startups"

- "[Competitor A] vs [Competitor B]"

- "What do people use instead of [Competitor A]?"

- "Is there a cheaper alternative to [Competitor A]?"

Cluster 3: Best for prompts

The buyer is trying to match a tool to a specific context.

- "Best [category] tool for a one-person marketing team"

- "Best [category] platform for VC-funded startups"

- "[Category] tools for companies without an SEO team"

- "What [category] tool works best for B2B tech companies?"

- "Best [category] software for teams in the UK"

Cluster 4: Integration and how-to prompts

The buyer is further along and thinking about implementation.

- "How do I [core use case] with [category] software?"

- "Does [category] tool integrate with [common tool]?"

- "How to get started with [category] as a small team"

- "How long does [category] take to show results?"

- "Can [category] software replace hiring an SEO person?"

Start with five prompts per cluster, twenty total. Add more as you learn which clusters are most competitive for your category.

Step 2 - Run tests by engine and by country

Location is not a setting you can ignore. AI engines weight local sources, surface local competitors, and sometimes return structurally different answers depending on the country of the query. If your buyers are in the UK and Spain, running all your tests from a US IP address gives you data that does not reflect your actual competitive environment.

How to control for location:

- Perplexity: change the language and region setting in preferences before each country-specific session

- ChatGPT: use a VPN set to the target country, or note clearly that location could not be controlled and treat results as directional only

- Google AI Overviews: use a browser with location set to the target country, or use Google's country-specific domains (google.co.uk, google.es)

For each prompt run, log the following:

You are not looking for a single answer. You are building a pattern across runs, engines, and countries. One data point is anecdote. Thirty data points is a picture.

Step 3 - Record citations at the URL level, not just domain level

This distinction matters more than it might seem. Knowing that your domain gets cited tells you AI trusts your site. Knowing which specific URL gets cited tells you what type of content AI rewards, and that is what informs your content strategy.

A blog post being cited means AI finds long-form editorial content useful for that prompt type. A comparison page being cited means the engine is pulling structured decision-support content. A pricing page being cited is rare but significant: it means the engine is treating your own pages as a credible source for commercial prompts.

Track citations at full URL level from the start. The column schema for your spreadsheet:

Date | Engine | Country | Prompt | Cluster | Your site cited (Y/N) | Cited URL | Competitor cited | Competitor URL | Notes

This schema also sets you up cleanly for the share of voice calculation in Step 5.

Step 4 - Repeat sampling to reduce variance

Run each prompt at least three times over five to seven days before drawing any conclusions. AI answers are variable enough that a single run can mislead you in either direction: you might appear in one answer and be absent from the next three, or miss a competitor who is consistently present except on the day you happened to check.

The deduplication rule: count unique cited URLs per prompt run, not total raw citations. If the same URL appears in both the sidebar and the answer body in Perplexity, that counts as one citation for that run.

A practical example: if your site is cited in two out of three runs for a given prompt, you have a 67% citation rate for that prompt. That is a meaningful signal worth paying attention to. If you are cited in one out of three, that is a weak signal, possibly noise. Below 33% across multiple prompts in the same cluster, you have a visibility gap in that cluster that warrants action.

Step 5 - Summarise as visibility rate and basic share of voice

Two metrics you can calculate directly from your spreadsheet, no additional tools required:

Visibility rate: The percentage of prompt runs where your site was cited at least once.

Formula: (runs where your site was cited / total runs) x 100

Example: your site appeared in 14 out of 30 prompt runs this week. Visibility rate = 47%.

Break this down by engine and by country. A 47% overall rate that is 70% in Perplexity and 20% in ChatGPT tells you something specific about where your content is and is not landing.

Competitor share of voice For each competitor, how many prompt runs did they appear in versus total runs?

Moving from manual audit to ongoing AI citation monitoring

The manual workflow above is the right starting point. It builds intuition, costs nothing, and gives you a real baseline faster than any onboarding process.

But it has a ceiling. Once you are tracking more than a handful of prompts, trying to detect trends over time, or reporting to a board, the manual approach starts to break down. Not because the methodology is wrong, but because the volume and consistency required to make it reliable becomes its own full-time job.

AI citation performance tracking: cadence, alerting, and change logs

A weekly operational routine that works for a lean team:

Monday: run your prompt set

Same prompts, same engines, same country settings as last week. Consistency in inputs is what makes week-over-week comparison meaningful. If you change a prompt wording, treat it as a new prompt rather than a continuation of the old one.

Tuesday: compare against last week's baseline

Note any new citations your site earned, any citations you lost, and any shifts in competitor presence. A competitor who was not showing up last week and is now appearing in four prompts is a signal worth investigating. A citation you held for six weeks that disappeared this week warrants a look at whether a competitor published something new.

Wednesday: log content changes

Record any pages you published, updated, or removed since the last tracking run. This is the step most teams skip, and it is the step that makes everything else useful. Without a content change log sitting next to your citation data, you cannot connect your actions to your results. You are just watching numbers move.

Ongoing: annotate known engine updates

When a new ChatGPT model ships, note it. When Google updates its AI Overviews behavior, note it. These external events can shift citation patterns in ways that have nothing to do with your content, and you need to be able to distinguish between "we lost citations because a competitor outpublished us" and "we lost citations because the engine's retrieval logic changed."

The change log is what separates a team that learns from a team that guesses. It is also what makes source trust signals legible over time: you start to see which content attributes correlate with sustained citation presence and which do not.

AI citations tracking vs AI mention tracking: why you need both

Being cited and being mentioned are not the same signal. Tracking only one gives you half the picture.

Here is what each scenario actually means in practice:

- Cited but not mentioned: Your URL appears as a source but your brand name does not appear in the answer body. AI trusts your content but has not connected it clearly to your brand entity. This is an entity clarity issue: your content is doing work but your brand is not getting credit for it. The fix is improving how clearly and consistently your brand name is associated with your content across your site and across the web.

- Mentioned but not cited: Your brand name appears in the answer but no source URL is attached. AI knows you exist, likely from training data or general web familiarity, but is not treating your pages as a source worth citing. This is a content extractability issue: your brand has awareness but your content is not structured in a way that AI engines pull from confidently. The fix is improving content structure, not brand awareness.

- Neither cited nor mentioned: You are absent. Both a brand gap and a content gap. This is the most common starting position for companies who have not yet thought about how to improve brand visibility in ChatGPT and similar engines, and it is the clearest signal that citation tracking needs to become a priority.

- Both cited and mentioned: The target state. AI is using your content as a source and crediting your brand in the answer. This is what compound visibility looks like: your content earns the citation, your brand gets the association, and buyers reading that answer encounter both.

Tracking mentions alongside citations takes minimal additional effort in your spreadsheet. Add two columns: "Brand mentioned (Y/N)" and "Mention context (brief note)." The pattern that emerges across those columns over several weeks will tell you which gap to close first.

Key metrics for AI citation tracking (specifically for SaaS teams)

Data without a decision attached to it is just noise. Every metric below has a "so what" built in. If you cannot answer what you would do differently based on a number going up or down, the number does not belong in your reporting.

Core KPI set for SaaS teams tracking AI citations

- Citation visibility rate: Percentage of prompt runs where your site was cited, broken down by engine and country. This is your headline number. A flat or declining rate by engine tells you exactly where to focus.

- Prompt coverage: How many of your target prompts are you actually tracking versus how many you identified as relevant? A 90% visibility rate across ten prompts sounds strong until you realise you are missing the thirty prompts your competitors are winning.

- Citation share of voice: Your citations as a percentage of all citations recorded across your full prompt set. This is the number that tells you how much of the available AI real estate you own in your category.

- Competitor citation share of voice: The same calculation, run for each named competitor and ranked. Updated weekly, this becomes the clearest early warning system you have. A competitor's SOV jumping ten points in a fortnight means something changed on their end worth investigating.

- Cited URL mix: Which page types are being cited: blog posts, comparison pages, knowledge base articles, pricing pages? A cited URL mix that is entirely third-party domains tells you your own content is not extractable enough. A mix weighted toward one page type tells you where to double down.

- Citation prominence: In engines that display ordered sources, are you appearing first or fourth? Being cited is good. Being cited first is meaningfully better, particularly in Perplexity where readers scan the sidebar in order.

- New vs lost citations: Week-over-week delta. This is your most actionable weekly metric. A citation gained tells you what is working. A citation lost tells you where a competitor may have just moved.

- Topic cluster performance: Roll up your visibility rate by prompt cluster rather than by individual prompt. A strong rate in category prompts but a weak rate in alternatives prompts tells you exactly where your content strategy has a gap.

- Mention-to-citation gap: How often is your brand mentioned in answers without being cited as a source? A large gap here points to a content extractability problem, not a brand awareness problem. Two very different fixes.

- Domain diversity in citations: How many distinct domains does AI pull from across your category prompt set? A landscape where three domains dominate most answers is harder to break into than one where ten domains share citations roughly equally. Knowing this shapes how aggressively you need to pursue third-party placements.

Leading vs lagging indicators for AI citation tracking

Not all metrics move at the same speed or tell you the same type of thing.

Leading indicators are actionable now and predict future outcomes:

- Citation visibility rate by engine and country

- Cited URL mix

- Prompt cluster performance

- New vs lost citations week-over-week

Watch these weekly. They tell you whether your content actions are working before the downstream revenue effects show up.

Lagging indicators confirm impact but take longer to surface:

- Referral traffic from AI engines

- Assisted conversions influenced by AI answer exposure

- Pipeline influence from AI-driven demand

Be transparent about this when reporting upward. AI attribution is currently imperfect across most analytics setups. Direct referral traffic from AI answers is underreported because many engines do not pass referral data cleanly. The honest framing for a board update: "Our leading indicators show X. We expect that to translate into Y over the next quarter, and here is the content work we are doing to drive it."

Directional consistency over time is more credible than a single impressive number.

Share of voice worked example (spreadsheet-ready)

[VISUAL 4: Share of voice worked example. 10 prompts, three brands, citation count per brand per prompt, totals, and SOV percentage. Google Sheets formula included. Full schema as specified in brief.]

The formula for your own sheet:

SOV % = (brand citation count / total citations across all brands) x 100

Run this calculation separately by prompt cluster. An aggregate SOV number hides the cluster-level gaps that actually tell you where to act.

How to learn from competitor citations and close the gap

You know a competitor is showing up in answers where you are not. The question is why, and what you do about it today rather than next quarter.

Build a competitor citation teardown table

For every competitor URL that keeps appearing in your tracked prompts, you want to understand what type of content it is and why AI keeps reaching for it. The teardown table makes that systematic.

Patterns that explain why AI engines favour certain pages consistently: definitional content that answers "what is X" directly, step-by-step guides with numbered structure, comparison tables, strong FAQ sections with direct answers, and pages that have been updated recently. These are observable. They are not speculation.

Run this teardown on your top five most-cited competitor URLs. The counter-moves will write themselves.

You can also use Omnia's AI sentiment analysis to go a layer deeper: not just where competitors are cited, but how they are characterised in the answers that cite them. A competitor being cited as "the enterprise option" versus "the startup-friendly choice" is a positioning signal, not just a visibility one.

When aggregators and directories steal your citation slot

This is more common than most teams expect. You run a category prompt and the cited source is not a competitor at all. It is a listicle, a review site, or a directory. G2, Capterra, a "best tools" roundup from a blog you have never heard of.

This happens most frequently on "best [category]" and "alternatives to [competitor]" prompt types, where AI engines default to aggregated third-party content because no single vendor page answers the question neutrally enough.

Three responses, in order of effort:

1. Publish the missing content yourself If AI is citing a third-party roundup for "best GEO tools for startups" because no vendor has a credible, well-structured answer to that specific prompt, write one. You are not going to outrank the aggregator immediately, but a well-structured, regularly updated page on your own domain gives you a long-term citation asset you control.

2. Get onto the domains AI is already citing If a specific directory or review site keeps appearing across your tracked prompts, that domain has already earned AI trust in your category. A listing, a guest contribution, or press coverage on that specific outlet is worth more here than coverage on a high-authority domain that AI is not pulling from.

3. Improve your on-site extractability Add a clear, self-contained answer block to your most relevant existing pages. AI engines are more likely to cite a page that answers the prompt directly in the first two hundred words than one that buries the relevant information three scrolls down. This is the fastest win with the most control, and it costs nothing but an afternoon of editing.

Option three is where to start. Options one and two compound over time.

AI citation optimization: how to increase your chances of being cited

No guarantees here. Anyone promising "rank number one in ChatGPT in thirty days" is selling you something that does not exist. What does exist is a set of content and technical practices that consistently correlate with higher citation frequency across engines. These are the ones with the highest impact-to-effort ratio for a lean team.

Make your content easy to extract (formatting patterns that AI cites more frequently)

Extractability is the degree to which an AI engine can pull a clean, self-contained answer from your page without doing interpretive work.

High extractability pages get cited. Pages that make the engine work hard to find the answer get skipped.

Formatting patterns that improve extractability:

- Definition-first sections: Lead with the clearest possible answer to the question your page is addressing. If your definition of "AI citation tracking" lives in paragraph four, AI engines will often pull from a competitor page that answers in paragraph one. Extractability rewards directness.

- Numbered step-by-step guides: Sequential, structured content is easier to attribute and easier to pull from. A how-to that reads as a numbered list signals to the retrieval layer exactly what type of content it is dealing with.

- Comparison tables: High extractability for "X vs Y" and alternatives prompts. A well-structured table answers a comparative question in a format AI can parse and surface directly.

- FAQ sections with direct answers first: The question format mirrors prompt structure. An FAQ where every answer leads with a direct one-sentence response before expanding is a citation magnet. Bury the answer after three sentences of context and you lose it.

- Headings that match real user prompts: An H2 that reads "What is AI citation tracking?" will outperform one that reads "Understanding the citation landscape" for prompts phrased as questions. Your heading structure is part of your retrieval surface.

Pros and cons blocks: Commonly pulled for purchasing decision prompts. Structured, scannable, and easy for an engine to attribute clearly to a single source.

Technical and trust basics that still matter

Nothing exotic here. These are hygiene factors that remove friction from the citation process:

- Clean crawlability and indexation: if an engine cannot reliably access your pages, it cannot cite them. Check for crawl errors on your most important citation candidates regularly.

- Canonical correctness: duplicate content confuses retrieval. Make sure your canonical tags are clean and your preferred URLs are the ones being indexed.

- A functional About or author page: AI engines weight content from identifiable, credible sources more heavily. An author page with a clear bio and consistent entity naming across your site is a trust signal worth having.

- Consistent entity naming: your brand name, product names, and key topic associations should appear consistently across every page on your site and across your external presence. Inconsistency creates entity ambiguity and reduces citation confidence.

- Clear page purpose: information architecture that makes the intent of each page obvious, through URL structure, title tags, and opening paragraphs, helps reranking layers categorize your content correctly.

Most of this is an afternoon audit, not a development project.

Does content freshness affect AI citation frequency? (How to test it safely)

Yes, freshness appears to influence citation frequency, particularly for prompts where recency is implied: "best tools in 2026," "latest research on X," "current alternatives to Y." Engines conducting live retrieval weight recently updated pages more heavily for these prompt types.

That said, freshness does not mean constantly rewriting pages. Here is a simple experiment you can run without breaking anything:

- Pick five pages that should be earning citations but are not appearing in your tracked prompts

- Update the core answer block on each: sharpen the definition, replace any outdated statistics with the most current available, add one concrete recent example

- Update the published date only if the content genuinely changed in a meaningful way

- Re-run your relevant prompt set weekly for four weeks and note any citation changes against your pre-update baseline

Three things not to do: change URLs on pages that already have any citation presence, rewrite stable evergreen content just to refresh a date stamp, and mistake an uptick in crawl activity for an improvement in citation frequency. Crawls and citations are different events.

Why Omnia is built for founders and lean teams who are serious about AI citation tracking

The framework in this article works. The honest caveat is that running it consistently, across multiple prompts, engines, and countries, while everything else on your plate is also on fire, is where most lean teams stall. Not because the methodology is wrong, but because consistency is the first thing that slips when bandwidth runs out.

Omnia keeps the system running when you cannot. It tracks citation presence by engine and by country, surfaces which exact URLs and domains are being cited for which prompts, and shows how your share of voice compares against named competitors week over week. When something shifts, you see it. When a content gap is the reason, you get a concrete next action rather than a chart to interpret.

If you want to see where you actually stand before committing to anything, the Omnia AI visibility checker generates a free report of your current citation presence across AI engines. It takes a few minutes and gives you a real answer to "do we have a visibility problem and where is it?" without a sales call or a setup process.

Start for free or book a demo if you would like a guided walkthrough first.

FAQs

What is AI citation tracking and why does it matter if I'm already doing SEO?

SEO measures your visibility in a system with a published algorithm. AI citation tracking measures something different: whether AI engines are actively using your content as a source when generating answers for prompts in your category. The two do not reliably predict each other. A strong domain authority does not guarantee citation presence, and a page that earns consistent citations may not rank highly in conventional search. As AI answers increasingly influence how buyers build shortlists before they ever visit a website, citation presence is becoming a distribution channel in its own right, separate from organic search.

How do I track citations to my site in AI results without a paid tool?

Start with the manual workflow covered in this article: build a prompt set of twenty prompts across four clusters, run each prompt across Perplexity and ChatGPT with browsing enabled, log citations at URL level in a spreadsheet, and repeat weekly. It takes under two hours to set up and under an hour a week to maintain at that scale. For a faster starting point, the Omnia AI visibility checker gives you a free report of your current citation presence without any manual work.

What is the difference between AI search citation tracking and AI citation mentions tracking?

A citation is a source URL that an AI engine surfaces as the basis for its answer. A mention is your brand name appearing in the answer body without a source URL attached. Both matter, but they point to different problems. Cited but not mentioned suggests an entity clarity issue. Mentioned but not cited suggests a content extractability issue. Neither suggests both gaps need closing. Tracking both in parallel, with two additional columns in your existing spreadsheet, gives you the full picture.

What are the key metrics for tracking AI-generated citations for a SaaS company?

The metrics that drive decisions rather than fill dashboards: citation visibility rate by engine and country, competitor share of voice, cited URL mix, new versus lost citations week over week, and mention-to-citation gap. Leading indicators like visibility rate and cited URL mix tell you whether your content actions are working. Lagging indicators like referral traffic and assisted conversions confirm impact over a longer horizon. Keep them separate in your reporting.

Why does my competitor show up in AI answers when our product is better?

Because AI engines do not evaluate product quality. They evaluate content extractability, domain trust, content structure, and how clearly a page answers the specific prompt being asked. Your competitor is likely winning on one or more of those dimensions: a better-structured definitional page, more citations from third-party domains AI already trusts, or content that leads with a direct answer rather than burying it. The competitor teardown table in this article gives you a systematic way to identify exactly which of those factors is in play.

How often should I be running AI citation performance tracking?

Weekly for your core prompt set. Daily tracking introduces more noise than signal at startup and scaleup scale and creates unsustainable manual overhead. A consistent weekly cadence across the same prompts, engines, and country settings gives you enough data points within a month to detect real trends and connect them to content actions.

Does content freshness actually impact AI citation frequency?

Yes, particularly for prompts where recency is implied. Engines conducting live web retrieval weight recently updated pages more heavily for time-sensitive queries. The practical move is updating the core answer block on pages that should be earning citations but are not: sharpen the definition, replace outdated statistics, add a current example. Update the published date only if the content genuinely changed. Do not rewrite stable evergreen content purely for a date stamp.

What is the fastest path to starting AI citation optimization?

Three moves in order of speed and control. First, run the free Omnia AI visibility checker to see where you stand today. Second, restructure your three most relevant existing pages to lead with direct answer blocks, add numbered steps or comparison tables where relevant, and ensure your brand entity is named consistently. Third, identify which third-party domains keep appearing in your tracked prompts and prioritise getting your brand onto those specific outlets. You are not building from scratch. You are removing the friction that is stopping AI engines from citing content you already have.