Classic SEO signals are the entry ticket for AI Mode, not the winning strategy. Getting cited requires topic depth that covers the sub-questions Google's query fan-out generates, retrieval-ready formatting that lets AI extract specific answers, and a branded web presence that teaches AI models your authority. Track citation rate per prompt, not SERP position. The rest of this guide shows exactly how to build that.

Your keyword rankings didn't drop. Your technical SEO is clean. Your content scores are strong. So why do your competitors keep showing up in AI Mode answers while your pages don't?

That is not a content quality problem. That is a structural mismatch between how classic search ranks pages and how AI Mode retrieves them.

AI Mode does not rank web pages. It synthesizes answers. The difference might sound minor, but it rewrites the rules for how content is built.

This guide is for SEO strategists who already know the fundamentals and need to understand specifically what AI Mode evaluates differently — and how to adjust an existing content operation to match it. No beginner definitions. Just the delta between what you know and what you need to do differently.

One number makes the timing clear. Based on Omnia's citation database tracking 42M+ citations across four AI engines, AI Mode is the only engine actively expanding its citation behavior — up 27% in the past five months. AI Overviews have been stable since Q4 2025. Perplexity is down 36% from its November 2025 peak. ChatGPT has declined 30% from mid-2025. Every other engine is tightening. AI Mode is the only surface where new citation slots are opening. That makes this the window to establish presence before the competitive gap widens.

What AI Mode does that your current playbook doesn't account for

Classic Google takes a query, finds the most relevant pages, and returns a ranked list. Your job as an SEO strategist has been to be the most relevant page for the queries that matter to your business. Every signal (backlinks, keyword optimization, E-E-A-T, structured data, etc.) exists to communicate relevance and authority to that ranking system.

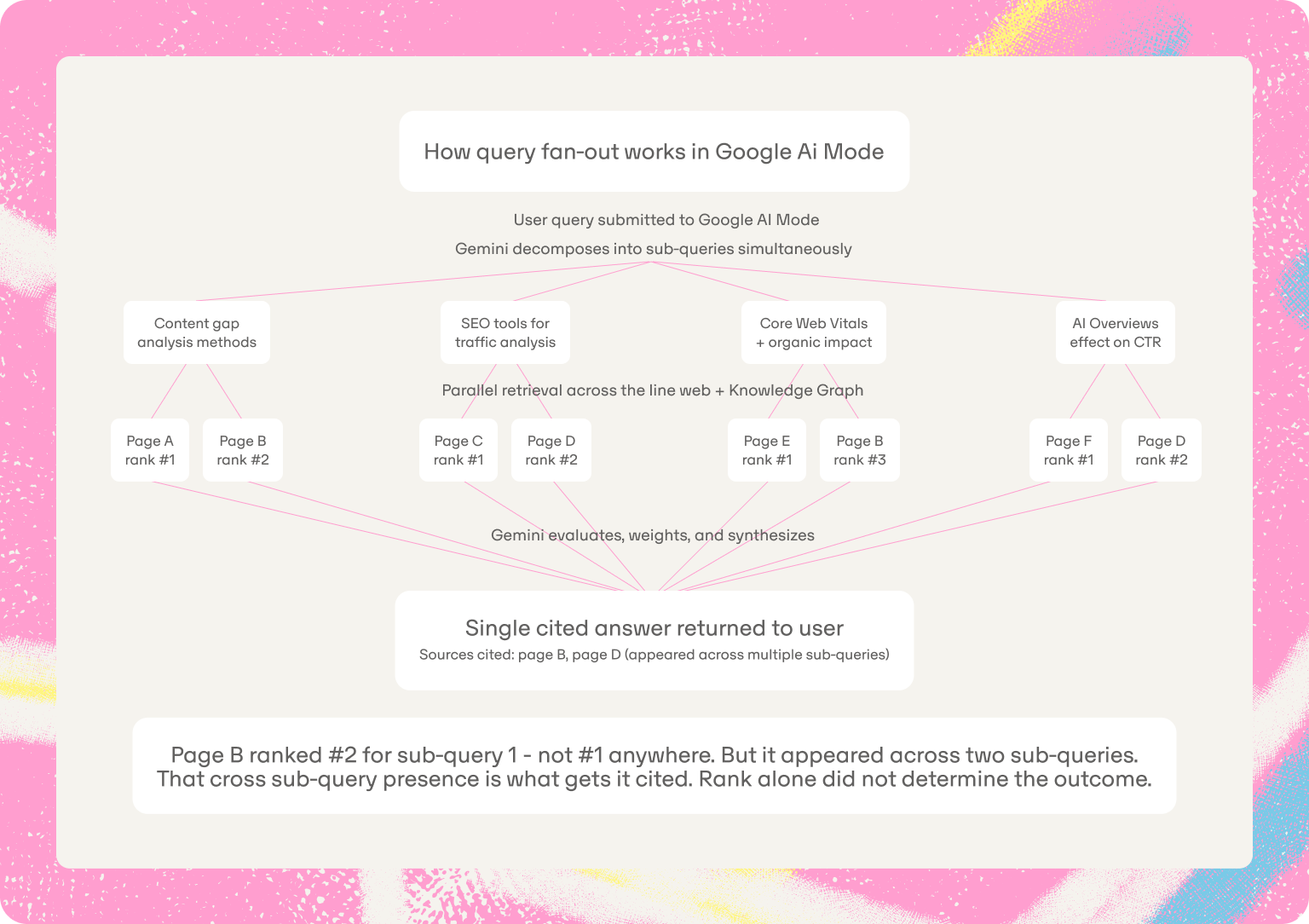

AI Mode runs a different process. When a user submits a query, Google's latest Gemini model analyzes the request, decomposes it into a set of related sub-queries, executes those sub-queries simultaneously across the live web and Google's Knowledge Graph, synthesizes the results, and returns a single cited answer. Google calls this query fan-out.

What that means in practice: the page that ranks position one for your primary keyword may not be cited at all if it does not answer the sub-questions AI Mode generates. A page that does not rank in the top five for any individual keyword but covers a topic cluster with precision may get cited across multiple sub-queries. Essentially, the retrieval logic is different.

Understanding the difference between prompts and search queries makes this concrete. A keyword is a compressed signal of intent. A prompt is a full expression of it, with context, constraints, and implied follow-on questions baked in. AI Mode is built to respond to the prompt, not just the keyword sitting inside it.

Here is the specific shift that catches most SEO teams. Classic SEO optimizes for the explicit query — you target the keyword a user types, and you rank for it or you do not. AI Mode optimizes for the full information need behind that query. The model maps the implied sub-questions, the follow-up questions the user will likely ask, and the contextual information needed for a complete answer. It retrieves sources that collectively satisfy that full need, not just the typed query.

A user asking "how to improve organic search traffic" in AI Mode might trigger sub-queries covering content gap analysis methods, comparison of SEO tools for traffic analysis, the effect of Core Web Vitals on organic performance, and how AI Overviews are changing click-through rates. A page targeting only the head keyword competes in one of those sub-queries. A page with precise topic depth competes in several. That compounding is where AI Mode visibility is won or lost.

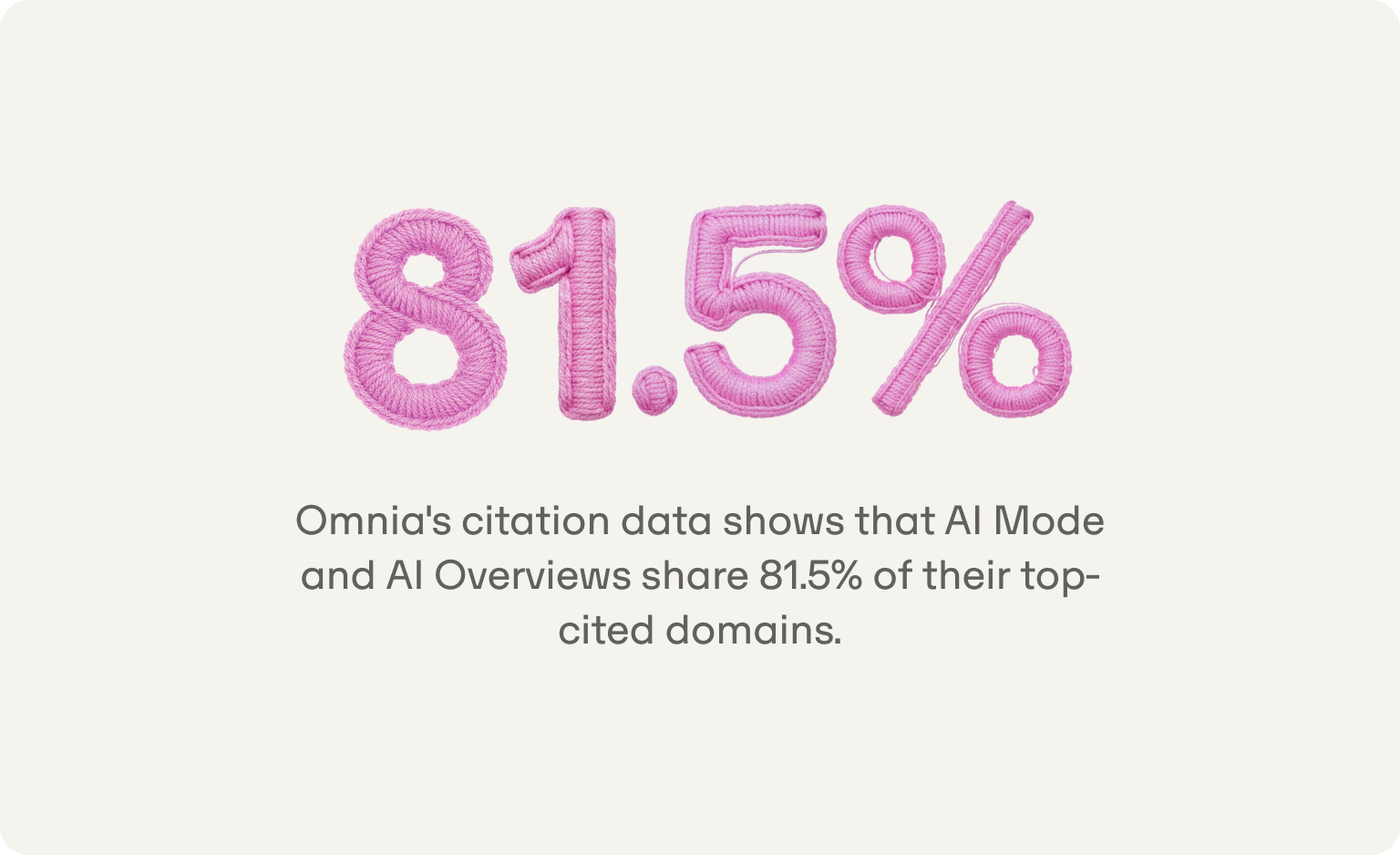

One clarification that matters for resource planning: Omnia's citation data shows that AI Mode and AI Overviews share 81.5% of their top-cited domains. They draw from the same source pool. Work you have already done for AI Overviews has a direct transfer effect into AI Mode.

If you have existing content optimized for Google AI Overviews, the structural overlap means you are not starting from scratch. ChatGPT, by contrast, shares only 52-55% of domains with Google's engines and has a fundamentally different citation fingerprint, pulling heavily from Wikipedia (see interactive graph below) and editorial content with almost no social platform presence. Winning on Google's AI surfaces does not guarantee winning on ChatGPT.

The ranking factors: what's the same, what's different, and what's new

Traditional SEO signals are the floor. AI Mode uses Google's core ranking systems — PageRank, the helpful content system, spam detection, and freshness — as the baseline for deciding which content is worth retrieving at all. Sites that perform well in classic search are more likely to appear in AI Mode for exactly this reason: credibility and relevance signals are shared infrastructure.

If your technical SEO is weak, your AI Mode visibility will suffer. Crawlability, indexation, page speed, and Core Web Vitals still matter. These are now necessary rather than sufficient.

Research from AirOps and Kevin Indig (Growth Memo), analyzing 16,851 queries across ChatGPT's full retrieval pipeline, found that a page at position 1 in AI retrieval has a 58% citation rate vs. 14% at position 10 — a 4x gap. Even a page with weak heading relevance at rank 1 (56% citation rate) outperforms a page with strong heading relevance at rank 6+ (26%). The research was conducted on ChatGPT's retrieval pipeline specifically, and the directional finding maps consistently to observed AI Mode citation behavior: being retrievable is the first optimization target. Content quality amplifies the citation signal. Without retrieval, there is nothing to amplify.

One further number worth internalizing before you adjust your strategy: Ahrefs found in December 2025 that only 13.7% of citations overlap between AI Overviews and AI Mode. The two surfaces may draw on a similar pool of domains, but the specific citations they choose mostly differ. If your GEO work so far has focused on AI Overviews appearances, do not assume that a citation on one surface carries over to the other. The surfaces are related but not identical.

What's different: the off-page signal AI Mode weights more heavily than you'd expect

Research cited by Search Engine Journal found that branded web mentions — not backlinks, but raw mentions of your brand across the web — had a 0.67 correlation with AI Overview citation frequency. That is one of the strongest single-factor correlations identified in any AI citation study to date.

Lily Ray, VP of SEO Strategy and Research at Amsive, described this shift in her January 2026 industry review: AI search has moved third-party validation from a secondary SEO benefit to a primary visibility driver. The tactics, including digital PR, community presence, and earned mentions, have always existed. What changed is their position in the hierarchy. She notes that Reddit, Quora, Facebook Groups, and LinkedIn are among the most heavily cited sites in AI search, alongside user-generated review content on platforms like G2. For AI visibility, being discussed in the places AI models retrieve from is as important as being published on your own domain.

The difference between owned and earned mentions is central to this. Owned content, including your blog and your website, establishes what you say about yourself. Earned mentions, such as third-party coverage, community discussions, and review platforms, establish what the web says about you. AI models weigh the latter heavily when deciding which sources to trust. Omnia understands the importance of these elements and gathers information on how to pitch content so your business can improve its AI visibility.

If your off-page strategy is purely link acquisition, you are building for classic Google while AI Mode assigns weight to a signal you are not tracking.

What's new: retrieval-readiness as a distinct layer

This is the gap most SEO strategists do not have a mental model for yet. Even high-quality, well-ranking content can be invisible in AI Mode if it is not structured for AI retrieval.

AI Mode extracts specific sections of pages to answer specific sub-queries. If the answer to a question is buried three paragraphs into a section after contextual setup, the model may not surface it — even if the answer is technically present. The content that gets cited is the content where the direct answer appears first, in a clearly bounded unit of text, under a header that signals what question it answers.

The empirical gap here is sharper than most teams expect. Research presented at Tech SEO Connect 2025 found that traditional SEO metrics — backlinks, domain authority — predict only 4-7% of AI citation behavior. That is not a reason to abandon backlinks. It is evidence that citation behavior is driven by a different set of signals that classic SEO measurement does not capture.

The same research found that 44.2% of all LLM citations come from the first 30% of a page's text. That single finding has more practical implications for content restructuring than most tactical guides acknowledge.

On E-E-A-T: content that demonstrates genuine first-hand experience — original data, attributed expert perspectives, specific real-world examples — outperforms generic summaries even when the generic content covers the same information. Adding trusted citations increases AI Overview visibility by 132%. The difference between "studies suggest" and a specific research finding with a named source is the difference between content that looks credible and content that is credible.

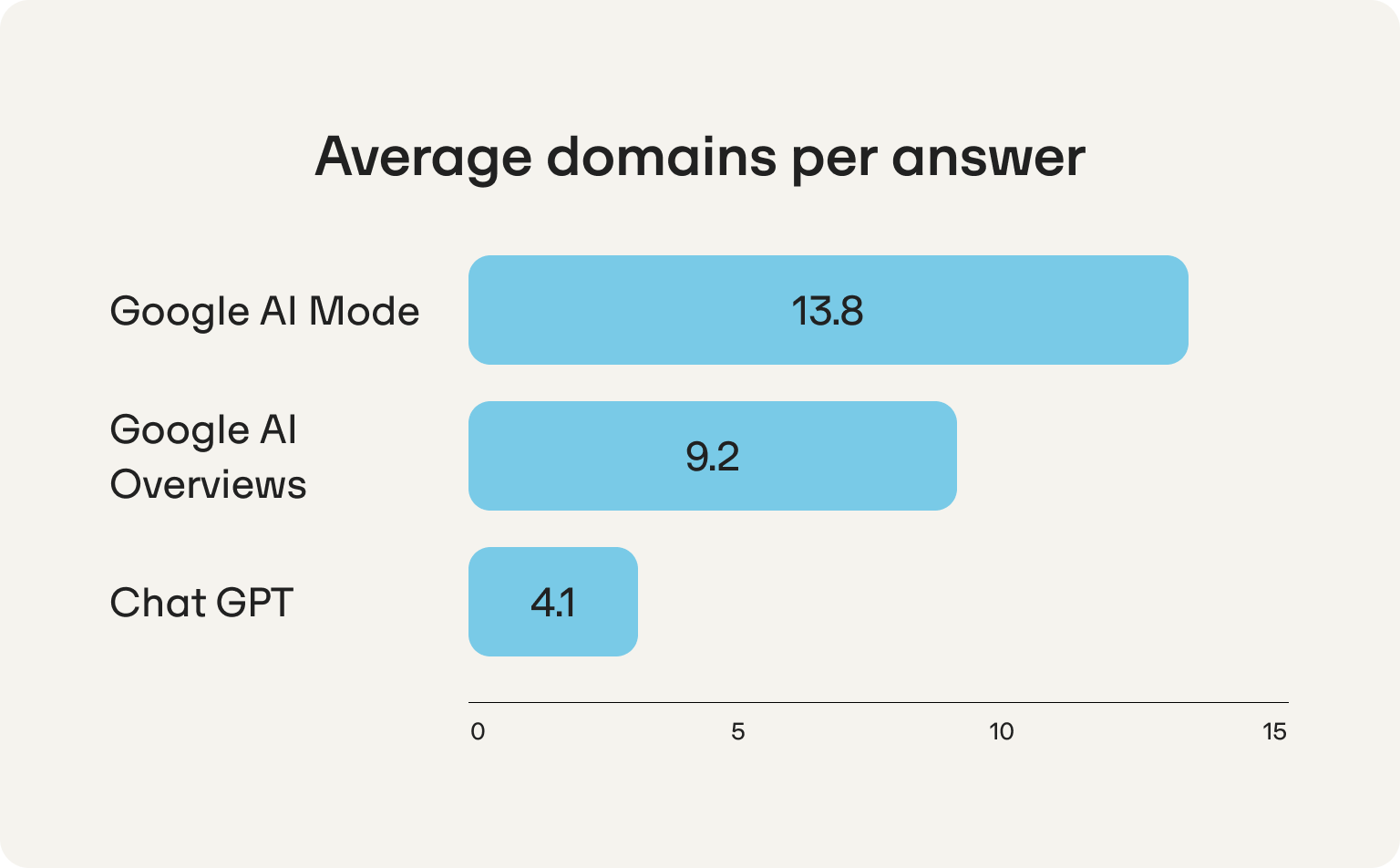

Omnia's citation data adds scale to this picture. AI Mode averages 13.8 unique domains per answer and 18.6 total citations. AI Overviews averages 9.2 domains. ChatGPT cites just 4.1 — and that number is declining. Getting cited by ChatGPT is more than 3x harder than getting cited by AI Mode, which means the citation budget math favors prioritizing AI Mode for teams deciding where to invest AI visibility efforts.

Where your current content is probably losing retrieval slots

The most common failure pattern for experienced SEO teams moving into AI Mode is optimizing one dimension correctly while leaving others untouched. These are the three gaps that appear most frequently.

Gap 1: Strong keyword targeting, shallow topic coverage

A page that ranks well for a primary keyword often does so because it is highly optimized for that specific query. That optimization can work against AI Mode visibility if it means the page is narrow — covering the head term in depth but not the fan-out sub-questions the model generates.

If your 10 best-ranking pages each cover one keyword thoroughly but do not address the 6-8 adjacent questions that naturally follow from that topic, you are leaving retrieval slots open for competitors who covered the topic with more precision — even if they rank lower than you on the primary term.

Gap 2: Well-written content, poor retrieval structure

Content written for human readers, with narrative flow, contextual buildup before the payoff, and smooth transitions, is often poorly structured for AI extraction. The model does not read the whole piece and evaluate overall quality. It identifies the section most likely to answer a specific sub-query and extracts from there.

A well-argued section that delivers the key insight in the fifth sentence of a seven-sentence paragraph will lose a retrieval slot to a weaker page that puts the direct answer in sentence one, every time.

Gap 3: Strong on-site signals, weak branded presence off-site

If your brand is not extensively mentioned across the web in the context of your topic clusters, your AI Mode visibility will underperform relative to your classic search rankings. This is the gap that surprises most SEO strategists, because they have either underweighted it or tracked it as a vanity metric rather than a performance signal.

The 5-step system to rank in Google AI Mode

Step 1: Map your topic's fan-out questions before any content decision

Stop starting with keyword research for AI Mode optimization. Start with prompt research.

For each priority topic, run this process:

- Submit the topic as a query in AI Mode. Study the response — not for whether you are cited, but for what sub-questions the response addresses.

- List every distinct sub-question the AI Mode response covers. This is the partial fan-out map for that topic.

- Cross-reference that list against your existing content. Which sub-questions does your content explicitly answer? Which are gaps?

- Gaps where competitors are cited are your highest-priority optimization targets.
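The cross-referencing steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `SubQuestion` structure, its field names, and the hand-entered flags are assumptions, and the sample sub-questions are the ones from the "how to improve organic search traffic" example earlier in this guide.

```python
from dataclasses import dataclass


@dataclass
class SubQuestion:
    text: str
    covered_by_us: bool      # our content explicitly answers it (judged by hand)
    competitor_cited: bool   # a competitor is cited for it in the AI Mode response


def fan_out_gaps(sub_questions: list[SubQuestion]) -> list[str]:
    """Return uncovered sub-questions, with competitor-cited gaps listed first."""
    gaps = [q for q in sub_questions if not q.covered_by_us]
    # list.sort() is stable and False sorts before True, so negating the flag
    # moves competitor-cited gaps to the front without reordering the rest.
    gaps.sort(key=lambda q: not q.competitor_cited)
    return [q.text for q in gaps]


def coverage_share(sub_questions: list[SubQuestion]) -> float:
    """Share of the mapped sub-questions that our content already answers."""
    if not sub_questions:
        return 0.0
    return sum(q.covered_by_us for q in sub_questions) / len(sub_questions)


# Hypothetical audit of one topic; the flags are illustrative only.
fan_out = [
    SubQuestion("content gap analysis methods", True, False),
    SubQuestion("SEO tools for traffic analysis", False, True),
    SubQuestion("effect of Core Web Vitals on organic performance", False, False),
    SubQuestion("how AI Overviews change click-through rates", False, True),
]

print(fan_out_gaps(fan_out))
print(f"coverage: {coverage_share(fan_out):.0%}")
```

The ordering mirrors the rule in the last step: gaps where a competitor is already cited come first, and the remaining gaps follow in the order you recorded them.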

One finding from the AirOps/Indig research runs counter to traditional SEO intuition here: exhaustive fan-out coverage does not win. Pages covering 26-50% of fan-out sub-queries outperform pages covering 100%, and matching 3-4 subheadings actually drops citation rate by 6 percentage points compared to matching just one. When primary query relevance is held constant, broad coverage signals generalist content without depth.

The content that gets cited nails one question precisely. The fan-out mapping exercise is about knowing which question to nail and not about building one page that attempts to answer all of them.

The goal of this exercise is not to produce more content. It is to stop creating content that targets keywords you are already ranking for while leaving fan-out sub-queries unaddressed.

Step 2: Restructure existing pages for retrieval, not just readability

This is the highest-leverage, lowest-effort change most SEO teams can make. You do not need to rewrite content. You need to restructure it for AI-ready content delivery.

The AirOps/Indig research found that heading structure is the primary on-page citation lever — more impactful than word count, topical breadth, or body copy. Pages with headings that closely match the query are cited 41% of the time vs. 29% for weak matches. The mechanism is specific: the retrieval model evaluates query match at the heading level (H1-H4), not body copy. A page whose headings mirror the questions it answers gets retrieved and cited.
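To make "heading match" tangible, here is a rough sketch that scores how many of a sub-query's words appear in a heading. It is only a lexical proxy: the research above does not describe the retrieval model's actual scoring, and the `heading_match` function, the stopword list, and the sample sub-query are all assumptions made for illustration.

```python
import re

# Assumed stopword list; extend it for your own content.
STOPWORDS = {"a", "an", "the", "to", "for", "of", "in", "on", "and", "how", "what", "is"}


def tokens(text: str) -> set[str]:
    """Lowercase word tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def heading_match(query: str, heading: str) -> float:
    """Share of query tokens that also appear in the heading (0.0 to 1.0)."""
    query_tokens = tokens(query)
    if not query_tokens:
        return 0.0
    return len(query_tokens & tokens(heading)) / len(query_tokens)


sub_query = "AI Mode content structure"  # hypothetical fan-out sub-query
headings = [
    "Content strategy considerations",
    "How to structure content for AI Mode citation",
]

for heading in headings:
    print(f"{heading_match(sub_query, heading):.2f}  {heading}")
```

A score like this is useful for ranking your own headings against a list of sub-queries before a rewrite. It says nothing about whether the page will actually be cited.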

For each priority page, apply these changes in order:

- Rewrite every H2 and H3 as a direct statement of what that section answers. A header like "Content strategy considerations" retrieves nothing. "How to structure content for AI Mode citation" retrieves cleanly when the model encounters a sub-query about AI Mode content structure.

- Move the direct answer to the first sentence of every section. Context and elaboration come after. The model extracts the unit of text most relevant to a sub-query — if the answer is in sentence five, it may extract nothing from that section. Research from Tech SEO Connect 2025 puts a number on this: 44.2% of LLM citations come from the first 30% of a page's text.

- Add a FAQ section to every page that matters. FAQ structure maps directly to the question-format sub-queries that AI Mode's fan-out generates. Cover the 6-8 most predictable follow-up questions from your audience. Each one is a potential retrieval slot.

- Keep paragraphs focused. One idea per paragraph. AI extraction systems cannot cleanly pull half a paragraph — a paragraph making three related points returns only one of them, poorly.
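Two of the checks above can be partly automated. The sketch below flags pages with no FAQ section and paragraphs that run long, using sentence count as a crude stand-in for "one idea per paragraph". The `Section` structure, the `MAX_SENTENCES` threshold, and the sample page are assumptions; whether a section opens with a direct answer still needs a human read.

```python
import re
from dataclasses import dataclass, field

MAX_SENTENCES = 4  # assumed threshold for a "focused" paragraph; tune as needed


@dataclass
class Section:
    heading: str
    paragraphs: list[str] = field(default_factory=list)


def sentence_count(paragraph: str) -> int:
    """Count sentences by splitting on whitespace that follows ., ! or ?."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return len([p for p in parts if p])


def audit(sections: list[Section]) -> dict:
    """Report FAQ presence and the (heading, paragraph index) of long paragraphs."""
    has_faq = any(
        re.search(r"faq|frequently asked", s.heading, re.IGNORECASE) for s in sections
    )
    long_paragraphs = [
        (s.heading, i)
        for s in sections
        for i, p in enumerate(s.paragraphs)
        if sentence_count(p) > MAX_SENTENCES
    ]
    return {"has_faq": has_faq, "long_paragraphs": long_paragraphs}


# Hypothetical page outline.
page = [
    Section(
        "How to structure content for AI Mode citation",
        ["Put the direct answer first. Add context after."],
    ),
    Section("Content strategy considerations", ["One. Two. Three. Four. Five."]),
]

report = audit(page)
print(report)
```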

Step 3: Build E-E-A-T into the body of content, not just the author bio

E-E-A-T signals that live only in your author bio or About page are weak. The signals that drive AI Mode retrieval are embedded in the content itself.

Three moves that materially change E-E-A-T signal strength:

- Replace "studies suggest" with named, primary sources. AI Mode evaluates the credibility of sources your content cites. Linking to original research rather than secondary summaries signals rigorous sourcing — and makes your page part of a citation chain rather than just its endpoint.

- Add original data wherever possible. Cyrus Shepard's analysis of 400 sites that held traffic through the 2024-2026 search upheaval, surfaced by Rand Fishkin at SparkToro, found that 92% of surviving sites had proprietary assets: something AI could not synthesize from elsewhere. That pattern holds in AI Mode citation behavior. A page with a unique dataset, an original analysis, or a first-hand finding gets cited because it is the only place that insight exists. Generic coverage of widely-written topics competes for a slot any of 50 pages could fill.

- Demonstrate experience through specificity. Specific, operational insight reads as experience. "Use header tags" has no E-E-A-T signal. "For pages targeting 3-5 related sub-queries, 4-5 H2 sections with one FAQ block consistently outperforms both longer pages with thin section coverage and shorter pages with no FAQ layer" reads as someone who has actually run this. The specificity is the signal.

Step 4: Build branded web presence as a systematic GEO signal

This is the part of AI Mode optimization that most SEO playbooks do not cover, because it does not map cleanly to traditional link building or content strategy. It is also one of the most directly correlated signals with AI citation behavior, and the gap between GEO and SEO is most visible here.

Build branded mentions systematically across these surfaces:

- Industry communities where your topic is actively discussed. Contribute substantively — not promotional summaries of your blog posts. Answer questions in depth. Engage with other contributors. Lily Ray's analysis notes that AI Overviews are particularly responsive to YouTube and LinkedIn content, while Perplexity draws more heavily from Reddit. Understanding which engine drives the most traffic to your site should shape where you invest community presence.

- Third-party publication mentions. Digital PR targeting topic-relevant outlets — not just high-DA domains — creates branded mentions in the context where you want to be cited. A mention on a domain that AI Mode frequently cites for your topic category carries more weight than a generic domain authority link. Distributing content to a wide range of publications can increase AI citations by up to 325% compared to publishing only on your own site, according to Stacker's December 2025 research.

- Review platforms and case study features. Domains with profiles on G2, Capterra, Trustpilot, Sitejabber, and similar platforms can have higher citation probability in ChatGPT than those without. Case studies on partner sites produce the same effect. Consistent, credible mentions across these surfaces teach AI models that your brand is a trusted reference on your topic.

- Collaborative content. Co-authored pieces with domain experts, expert roundup contributions, and guest articles on publications in your topic cluster create associations between your brand and the topical authority of those publications. These are source trust signals for AI that go beyond what any single page on your own domain can produce.

Step 5: Measure AI Mode visibility as its own signal and close the gap weekly

This is where most GEO strategies stall. Teams implement the tactics above and then measure success using classic SEO metrics — SERP rankings, organic clicks, impressions. Those metrics do not capture AI Mode citation behavior, and they do not tell you whether what you are doing is working.

The case for weekly prompt-based tracking rather than periodic snapshots: Omnia's data shows only 18.5% of AI Overview answers keep the same top-cited domain week over week. 30.4% are volatile — swapping in 4-6 different domains within a single month. ChatGPT is less stable still, with just 8.1% of answers consistently citing the same top domain. AI citation behavior is not a ranking position you hold. It is a share of voice you win or lose week by week. A single measurement tells you where you stood on a Tuesday. A trend line tells you whether you are building or eroding.
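The stability figures above can be reproduced on your own prompt set with a small calculation. This is a sketch under stated assumptions: the `stability_summary` function, the `volatile_at` cutoff of four distinct domains (taken from the "4-6 different domains" range above), and the sample data are illustrative, and it does not describe how Omnia computes its numbers.

```python
def stability_summary(
    top_domain_by_check: dict[str, list[str]], volatile_at: int = 4
) -> dict[str, float]:
    """Share of prompts whose top-cited domain never changed ("stable") and
    share that saw `volatile_at` or more distinct top domains ("volatile")."""
    total = len(top_domain_by_check)
    if total == 0:
        return {"stable": 0.0, "volatile": 0.0}
    distinct = [len(set(checks)) for checks in top_domain_by_check.values()]
    stable = sum(1 for n in distinct if n == 1)
    volatile = sum(1 for n in distinct if n >= volatile_at)
    return {"stable": stable / total, "volatile": volatile / total}


# Hypothetical month of checks: the top-cited domain recorded for each prompt.
observations = {
    "prompt A": ["a.example", "a.example", "a.example", "a.example"],
    "prompt B": ["a.example", "b.example", "a.example", "a.example"],
    "prompt C": ["a.example", "b.example", "c.example", "d.example"],
    "prompt D": ["b.example", "b.example", "b.example", "b.example"],
}

print(stability_summary(observations))
```

Prompts that fall into neither bucket, like prompt B here, changed top domain at least once without crossing the volatility cutoff.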

What to track:

Citation presence by prompt. Define 20-30 prompts directly relevant to your business — the questions your ICP actually submits in AI Mode, framed conversationally. Track weekly whether your brand is cited in AI Mode responses to those prompts. This is your cited inclusion rate — the primary metric.

Share of voice vs. competitors. For each prompt cluster where you are not cited, who is? That competitor's content structure is the benchmark. Study what they are doing differently and build to it. This is your competitive AI visibility gap — the gap that tells you where to focus next.

Citation source by page. When you are cited, which URL? That page's structure is your highest-performing retrieval template. Identify what makes it different from pages on the same domain that are not cited, and replicate the pattern.

Week-over-week citation trend. AI Mode citation patterns fluctuate as Google updates Gemini's retrieval behavior. A one-week snapshot is noise. A six-week trend line shows whether your visibility is building or eroding, and which specific topic clusters are moving. Tools built specifically for this, including AI Mode tracking platforms, make this consistent rather than manual.

What not to track as a proxy for AI Mode performance: raw SERP impressions, organic click-through rates (these undercount due to zero-click behavior), and keyword ranking position. Measure citation share, not clicks.
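If you are recording results by hand before adopting a tool, the metrics above reduce to a few small calculations. The sketch below assumes a simple log of `(week, prompt, cited_domains)` tuples. That record shape, the function names, and the sample domains are all hypothetical.

```python
from collections import Counter, defaultdict

# (week number, prompt, set of domains cited in the AI Mode response)
Observation = tuple[int, str, set[str]]


def inclusion_rate(observations: list[Observation], brand: str) -> float:
    """Share of recorded responses that cite the brand's domain."""
    if not observations:
        return 0.0
    return sum(brand in cited for _, _, cited in observations) / len(observations)


def weekly_inclusion(observations: list[Observation], brand: str) -> dict[int, float]:
    """Cited inclusion rate per week, ordered by week, for the trend line."""
    by_week: dict[int, list[Observation]] = defaultdict(list)
    for obs in observations:
        by_week[obs[0]].append(obs)
    return {week: inclusion_rate(by_week[week], brand) for week in sorted(by_week)}


def cited_when_absent(observations: list[Observation], brand: str) -> Counter:
    """Count which domains are cited in responses where the brand is missing."""
    counts: Counter = Counter()
    for _, _, cited in observations:
        if brand not in cited:
            counts.update(cited)
    return counts


# Hypothetical two-week log for two prompts.
observations = [
    (1, "prompt 1", {"rival.example"}),
    (1, "prompt 2", {"ours.example", "rival.example"}),
    (2, "prompt 1", {"ours.example"}),
    (2, "prompt 2", {"ours.example", "other.example"}),
]

print(weekly_inclusion(observations, "ours.example"))
print(cited_when_absent(observations, "ours.example").most_common())
```

The per-week dictionary is the input for the trend line, and the counter gives a first answer to "who is cited when we are not" for each prompt cluster you filter on.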

Diagnosis only drives improvement if it produces an action. For each prompt where a competitor is cited instead of you, the question is not "how do we rank better" — it is "what does their content cover that ours does not, and how quickly can we close that gap."

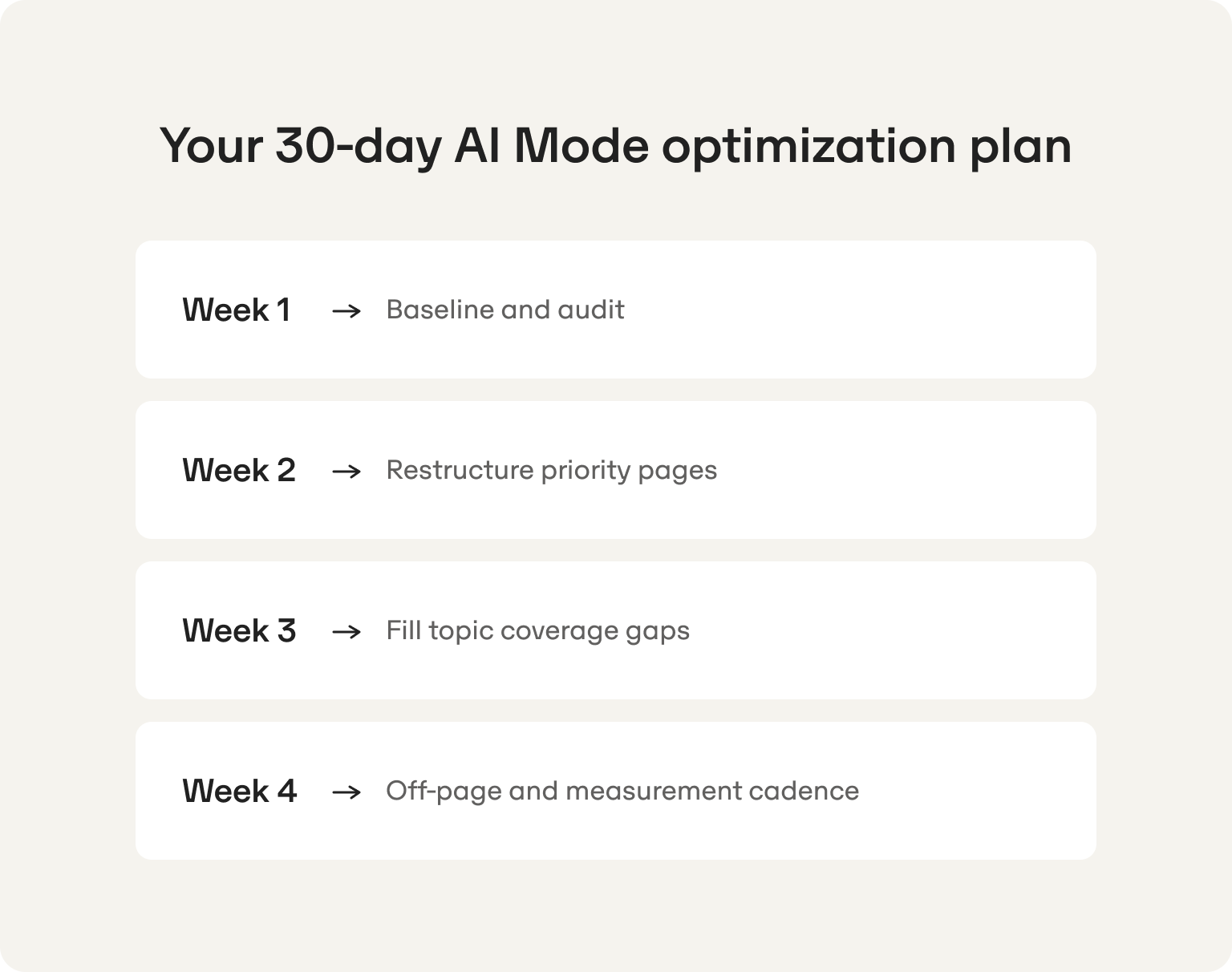

Your 30-day AI Mode optimization plan

Week 1: Baseline and audit

Day 1-2: Define your 30-40 priority prompts. These are the queries your target persona actually submits in AI Mode — framed conversationally, not as keywords. "What's the best way to improve organic traffic for a B2B startup" not "B2B organic traffic."

Day 3-4: Run each prompt through AI Mode. For every prompt, note: Are you cited? Who is? Which pages are cited? What sub-questions does the response address?

Day 5: Audit your 10 most commercially important pages against the retrieval structure criteria: direct answer in the first sentence of each section, headers that describe what the section answers, FAQ section present, one idea per paragraph.

Day 6-7: Prioritize your optimization list. Score each page by commercial importance, citation gap size, and how much restructuring is needed. Start with high commercial importance, large citation gap, and mostly structural fixes rather than full content rewrites.
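One way to make the Day 6-7 scoring repeatable is a simple additive score. The sketch below assumes 1-5 scales and equal weights, neither of which comes from the plan itself; the `Page` fields and example URLs are hypothetical, so adjust the weighting to your own priorities.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    commercial_importance: int  # 1 (low) to 5 (high)
    citation_gap: int           # 1 (small) to 5 (large)
    rewrite_effort: int         # 1 (structural fixes only) to 5 (full rewrite)


def priority(page: Page) -> int:
    """Higher importance and gap raise the score; higher effort lowers it."""
    return page.commercial_importance + page.citation_gap + (6 - page.rewrite_effort)


# Hypothetical pages with hand-assigned scores.
pages = [
    Page("/pricing-guide", 5, 4, 1),
    Page("/blog/overview", 2, 5, 4),
    Page("/product-comparison", 4, 2, 2),
]

ranked = sorted(pages, key=priority, reverse=True)
for page in ranked:
    print(priority(page), page.url)
```

Inverting the effort score keeps all three inputs pointing the same way, so the pages that need only structural fixes rise to the top, as the plan recommends.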

Week 2: Restructure priority pages

Day 8-10: Restructure your top three priority pages. Rewrite headers, move answers to the top of each section, add or expand FAQ blocks. Restructure first — rewrite only if the content itself is the problem.

Day 11-12: Add original data or first-hand specific examples to each page. Even one proprietary observation materially changes the E-E-A-T signal.

Day 13-14: Audit and update all citations in those pages. Primary sources only. Replace secondary summaries with direct links to original research.

Week 3: Fill topic coverage gaps

Day 15-17: For the prompts where competitors are most consistently cited and you are absent, map the full fan-out question set. Brief new content targeting those coverage gaps. Prioritize topic clusters where you have existing domain authority — the goal is filling gaps, not entering new territory from scratch.

Day 18-21: Publish at least one new piece targeting a priority gap cluster. Apply the retrieval structure from day one of writing. The FAQ section is not optional.

Week 4: Off-page and measurement cadence

Day 22-24: Identify 10 community or publication touchpoints for substantive contribution. Write contributions that answer questions thoroughly, with no promotional content pointing back to your site.

Day 25-27: Identify 3-5 collaborative content or case study opportunities with partners in your topic clusters.

Day 28-30: Set up your weekly measurement cadence. Define the 30-40 prompts, establish a baseline citation rate, and document which competitors are cited when you are not. This becomes your weekly GEO report: the feedback loop that tells you what to optimize next. If you want to run this without manual checking across four engines, the guide on how to monitor AI search visibility covers the tooling options in detail.

How Omnia helps

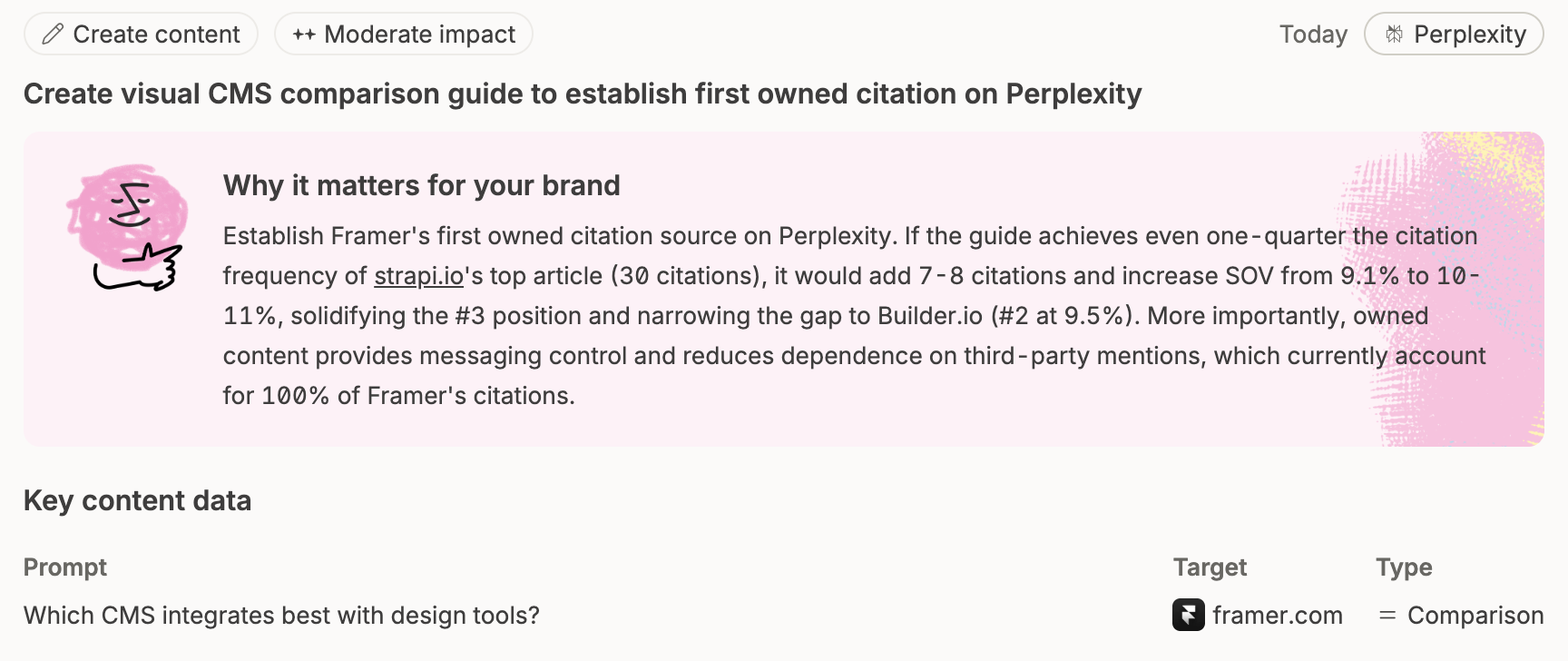

The system above requires two things that do not scale manually: knowing which prompts you are winning and losing across AI Mode, ChatGPT, AI Overviews, and Perplexity, and knowing exactly what content patterns are earning citations for competitors when yours are not.

Omnia monitors your citation presence across a defined prompt set, updated daily. It shows which competitors are cited for each prompt you are losing, extracts the content patterns and source structures those pages use, and surfaces the gaps between what you are publishing and what the AI is retrieving.

What Omnia does that a manual process cannot:

- Tracks AI Mode, ChatGPT, AI Overviews, and Perplexity citation presence in parallel — no manual checking across platforms or sampling by hand.

- Shows competitor citation patterns at the page level: which specific pages are cited and what structures they use.

- Generates prioritized insight tasks for each prompt where your visibility is below threshold: what content to create, where to publish it, which format to use, and why it matters for that specific prompt, so the gap between data and action closes in the same session.

- Monitors week-over-week citation trajectory by topic cluster — so you know whether your optimization work is compounding or stalling before six months pass.

As of April 2026, Omnia also runs directly inside your AI assistant via MCP (Model Context Protocol). If you work in Claude, ChatGPT, Cursor, or any compatible assistant, you can pull share of voice data, generate insights, analyze competitor citations, and manage your monitored prompts without leaving the conversation. You talk to your assistant. Your assistant talks to Omnia. The gap between insight and action closes in the same session.

Most tools show you a dashboard. Omnia closes the execution gap — and now it does it from wherever you already work.

Book a demo or explore Omnia to see how citation monitoring works in practice.

FAQs

If I'm already ranking position one in classic Google search, why am I not being cited in AI Mode?

Classic ranking and AI Mode citation use overlapping but non-identical signals. A page that ranks well for a primary keyword may be highly optimized for that single query and shallow on the surrounding topic cluster. AI Mode retrieves sources that satisfy the full information need behind a query — including the sub-questions it fans out into. A page that answers only the head term competes in one retrieval slot. A page that covers the topic precisely competes in several. High classic ranking is a prerequisite — it signals that your content is credible enough to retrieve. It does not guarantee citation if your page's topic coverage is narrow.

How is optimizing for AI Mode different from what I was already doing for AI Overviews?

AI Mode is a more intensive version of the same retrieval logic that powers AI Overviews, but the query fan-out is deeper and the synthesis is more elaborate. The optimization principles are the same — topic depth, E-E-A-T signals, structured formatting, branded web presence — but the bar is higher. AI Overviews often surface for simpler, more informational queries. AI Mode handles complex, multi-step queries where a user expects a research-grade response. The structural requirements are the same; the execution threshold is stricter. Ahrefs' December 2025 data showing only 13.7% citation overlap between the two surfaces confirms they should be treated as related but distinct optimization targets.

Do I need to create new content or can I restructure existing pages?

Both, but restructuring existing content is the faster win. Existing pages with strong domain authority and established E-E-A-T signals often fail AI Mode citation not because the content is wrong but because it is structured for human narrative reading rather than AI extraction. Rewriting headers, moving direct answers to the top of sections, and adding FAQ blocks can measurably change citation behavior on pages you have invested months building — usually within 2-4 weeks of the change being re-crawled. New content is necessary where you have genuine topic coverage gaps — sub-question clusters your existing pages do not address at all.

How do I know which of my pages are underperforming specifically in AI Mode vs. classic search?

The clearest diagnostic is prompt testing. Define the 20-30 queries most important to your business, framed conversationally as a user would submit them in AI Mode. Run each one and check whether your pages are cited. Cross-reference those results against your classic SERP rankings for related keywords. Pages that rank well in classic search but do not appear in AI Mode responses are your structural optimization priorities — they have the authority signal but not the retrieval structure. Omnia tracks this systematically across your full prompt set, with week-over-week trending, so you are not sampling manually every time.

How quickly can I expect to see AI Mode citation improvement after making structural changes?

Faster than classic SEO, but not immediate. Structural changes to existing pages — restructured headers, direct answers moved to section openers, FAQ sections added — typically show citation movement within 2-4 weeks of Google re-crawling the page. New content takes slightly longer to establish retrieval presence, typically 3-6 weeks. The compound effect — where topic depth across a cluster builds AI Mode authority on that topic — takes 6-10 weeks to become clearly measurable. The consistent pattern: structural fixes to existing high-authority pages produce the fastest visible lift. New content filling genuine coverage gaps produces the most durable lift.